AI Simplifies Surgical Consent Forms for Better Patient Comprehension

Reducing Complexity in Surgical Consents: The Role of AI in Patient Communication

Rahul Ramanathan MD1,2,3, Ryan J. Kelly BS1,2,3, Jeremy D. Shaw MD2,3,4, Adway Gopakumar BS1,2,3, Michael F. Shannon BS1,2,3, Christopher Gonzalez BA1,2,3, Jacob Weinberg BS1,2,3, John Bonamer MD1,2,3, Richard A. Wawrose MD1,2,3, Michael J. Spitnale MD1,2,3, Joon Y. Lee MD1,2,3, John Weddle MD2,3,5

- 1 Department of Orthopaedic Surgery, University of Pittsburgh, Pittsburgh PA, USA

- 2 Pittsburgh Orthopaedic Spine Research Group (POSR), Pittsburgh PA, USA

- 3 The Orland Bethel Family Musculoskeletal Research Center (BMRC), Pittsburgh PA, USA

- 4 Intermountain Health, Salt Lake City UT, USA

- 5 Department of Orthopaedic Surgery, Geisinger Health System, Danville, PA, USA

OPEN ACCESS

PUBLISHED: 31 December 2025

CITATION: Weinberg, J., 2025. Reducing Complexity in Surgical Consents: The Role of AI in Patient Communication. Medical Research Archives, [online] 13(12). https://doi.org/10.18103/mra.v13i12.7178

COPYRIGHT: © 2025 European Society of Medicine. This is an open-access article distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original author and source are credited.

DOI https://doi.org/10.18103/mra.v13i12.7178

ISSN 2375-1924

Abstract

Introduction: Surgical consent forms often exceed recommended reading levels, limiting patient understanding and hindering truly informed decision-making. Artificial intelligence (AI)-based tools such as ChatGPT offer a novel strategy for improving readability without compromising content accuracy.

Methods: Four standardized spine surgery consent forms from a tertiary academic center were simplified using ChatGPT. Readability was assessed before and after simplification using six validated metrics: Flesch-Kincaid Grade Level, Flesch Reading Ease, Coleman-Liau Index, Automated Readability Index, Gunning Fog Index, and SMOG Index. Changes in these scores were analyzed using paired t-tests. Linguistic complexity (total word count, character count, sentence count, words per sentence, and sentences per paragraph) was evaluated using one-sample t-tests on the percent change after simplification. Statistical significance was set at p<0.05.

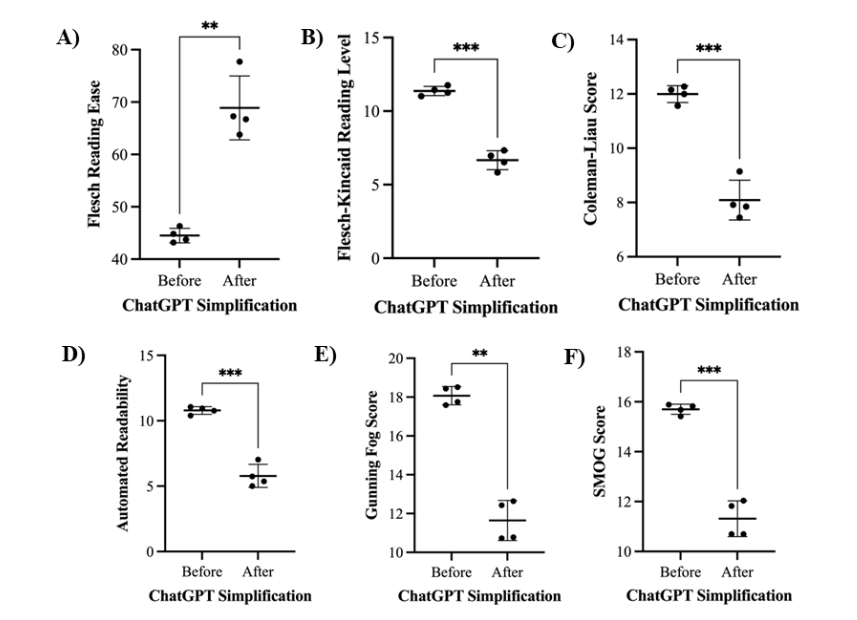

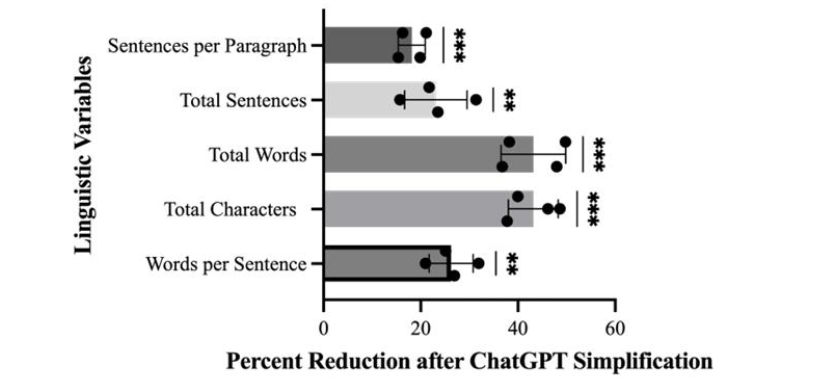

Results: ChatGPT simplification significantly improved readability across all measures. Flesch-Kincaid Grade Level decreased from 11.37 to 6.67 (p<0.001), and Flesch Reading Ease improved from 44.51 to 68.88 (p<0.005). Similar improvements were noted in Coleman-Liau (12.00 to 8.09), Automated Readability Index (10.79 to 5.79), Gunning Fog Index (18.07 to 11.65), and SMOG Index (15.70 to 11.32), all with p<0.005. Linguistic complexity was also reduced: total words (43%, p<0.001), characters (43%, p<0.001), sentences (23%, p<0.01), words per sentence (26%, p<0.001), and sentences per paragraph (18%, p<0.001).

Conclusions: ChatGPT significantly enhances the readability of spine surgery consent forms while preserving essential medical content. This AI-driven approach may improve patient comprehension and support more equitable, informed decision-making. These findings highlight the potential of AI tools to streamline clinical communication and enhance the informed consent process.

Keywords

Artificial intelligence (AI), surgical consent forms, patient comprehension, health literacy, ChatGPT

Introduction

Surgical consent forms and surgeon-patient communication are central to the surgical informed consent process. Consent forms are meant to translate complex medical information, including pathology and treatment plans, into clear, understandable terms for patients. They facilitate informed decision-making by outlining the procedure's risks, benefits, and specific details so that patients can fully comprehend their treatment. Ideally, consent forms should be written in plain language that does not require advanced medical knowledge to understand. Unfortunately, the widespread inclusion of nuanced medical terminology and detailed procedural descriptions frequently leaves gaps in patients' understanding of the procedure they will undergo. Recent advances in pre- and postoperative care, surgical techniques, and recovery protocols have only compounded this issue. Collectively, these factors have produced increasingly sophisticated consent forms written at reading levels far above what many patients in the United States can comfortably comprehend. Although the American Medical Association and the National Institutes of Health recommend that patient-facing materials be written at approximately a 6th-grade reading level, previous studies have shown that surgical consent forms are commonly written at high-school or even collegiate reading levels. This mismatch between document complexity and patient reading ability raises the risk of misinterpretation during the consent process.

To address these challenges, it is crucial to consider health literacy, broadly defined as individuals' ability to use basic health information to make informed decisions about their care. Studies have consistently shown correlations between limited health literacy and lower comprehension of informed consent forms. Simplifying the language in surgical consent forms can reduce the demands placed on patients' health literacy, allowing patients of all literacy levels to better understand and navigate their surgical care independently. Traditional efforts to simplify consent forms have relied on manual revision using plain-language strategies. While some of these approaches have demonstrated moderate success, results have been mixed; for example, direct simplification of medical jargon is often accompanied by the omission of important procedural details. Additionally, manual revision of these forms is time-consuming and resource-intensive.

Large language models (LLMs) have recently gained popularity in domains ranging from healthcare to education. These artificial intelligence models are trained on large text corpora and can analyze and modify text inputs according to user instructions. One common family of LLMs is the Generative Pre-trained Transformer (GPT), of which OpenAI's ChatGPT is perhaps the best known. Other authors have recently described the use of ChatGPT to simplify surgical consent forms for several operative procedures. However, the scope of investigation on this topic remains limited, and broader applicability across surgical subspecialties must be demonstrated to characterize the utility of LLMs for this purpose. Spine surgery, whether performed by orthopaedic surgeons or neurosurgeons, often involves intricate instrumentation and carries a notable risk of adverse events. Given this complexity, the informed consent process for spine procedures may benefit substantially from AI-based methods that improve communication and ensure adequate comprehension between provider and patient. This study aimed to assess the ability of ChatGPT to simplify surgical consent forms for various spinal surgeries without compromising the completeness or accuracy of the conveyed information. We hypothesized that ChatGPT would reduce linguistic variables (e.g., total words, sentences, characters) while improving the readability scores of surgical consent forms.

Methods

CONSENT FORM COLLECTION AND ANALYSIS

This descriptive study was conducted by a spine research group at a tertiary academic medical center. Standardized consent forms for a selection of common spine surgeries were collected from the department of orthopedic surgery. Specifically, consent forms were included for: 1) thoracolumbar decompression with or without stabilization (TLD), 2) lumbar or thoracic posterior microdiscectomy (LTPM), 3) anterior cervical discectomy with or without stabilization (ACDF), and 4) posterior cervical decompression and fusion (PCDF). These procedures were chosen because they are the most frequently performed operations at the authors' tertiary care center. A total of eight consent forms were analyzed: the four original forms corresponding to these procedures and their four AI-simplified versions.

Inclusion criteria consisted of institutional, standardized consent forms used for adult patients undergoing elective spine surgery within the orthopedic surgery department. Forms had to be written in English and publicly available to patients as part of the preoperative process. Consent forms were excluded if they were incomplete, handwritten, patient-modified, non-operative in nature (e.g., for pain injections), or if they pertained to pediatric cases or procedures performed outside the scope of spine surgery.

Following collection, the widely used Flesch-Kincaid Grade Level and Flesch-Kincaid Reading Ease scoring systems were used to quantify the readability of the unaltered consent forms. Both systems rely on two variables: average words per sentence and average syllables per word, with syllable counts based on the number of vowel sounds in each word. The Flesch-Kincaid Grade Level estimates the United States school grade level required to comprehend a given text; higher scores indicate higher grade levels and greater complexity. For instance, a score of 8.0 suggests that the text is readable by the average eighth-grade student. The Flesch-Kincaid Reading Ease score reports readability on a scale from 0 to 100, with higher scores indicating easier-to-read material. A score of 60 or higher indicates text understandable to 15- to 17-year-old students or younger, while a score of 30 or lower corresponds to a college-graduate reading level. The formulas used for both scoring systems are shown in Table 1.
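To make the two Flesch formulas concrete, the following Python sketch scores a passage from raw text. It is illustrative only and is not the software used in this study: the helper names are hypothetical, sentences are split naively on terminal punctuation, and syllables are approximated by counting vowel groups with a simple silent-"e" rule, so its scores may differ from those of dedicated readability calculators.

```python
import re


def count_syllables(word: str) -> int:
    """Approximate syllables by counting runs of vowels (a heuristic, not a dictionary lookup)."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    # Drop a silent final "e" (e.g., "spine"), but keep "-le" endings (e.g., "little").
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def flesch_scores(text: str) -> tuple[float, float]:
    """Return (FKGL, FKRE) using the Flesch-Kincaid formulas in Table 1."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one word and one sentence")
    asl = len(words) / len(sentences)  # average sentence length (words per sentence)
    asw = sum(count_syllables(w) for w in words) / len(words)  # average syllables per word
    fkgl = 0.39 * asl + 11.8 * asw - 15.59
    fkre = 206.835 - 1.015 * asl - 84.6 * asw
    return fkgl, fkre


if __name__ == "__main__":
    grade, ease = flesch_scores("The cat sat on the mat. The dog ran.")
    print(f"FKGL = {grade:.2f}, FKRE = {ease:.2f}")
```

For the sample passage (nine one-syllable words in two sentences), ASL = 4.5 and ASW = 1.0, giving an FKGL of about -2.0 and an FKRE of about 117.7; very short, simple passages can fall outside the nominal grade and 0-100 ranges because the formulas are unbounded.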

Table 1. Readability formulas.

| Readability Test | Formula |
|---|---|
| Flesch-Kincaid Grade Level (FKGL) | FKGL = (0.39 × ASL) + (11.8 × ASW) − 15.59 |
| Flesch-Kincaid Reading Ease (FKRE) | FKRE = 206.835 − (1.015 × ASL) − (84.6 × ASW) |
| Gunning Fog Index (GFI) | GFI = 0.4 × (ASL + 100 × (Complex Words / Total Words)) |
| SMOG Index | SMOG = 1.0430 × √(30 × (Polysyllables / Sentences)) + 3.1291 |
| Coleman-Liau Index (CLI) | CLI = (0.0588 × L) − (0.296 × S) − 15.8 |
| Automated Readability Index (ARI) | ARI = (4.71 × (Characters / Words)) + (0.5 × (Words / Sentences)) − 21.43 |

The variable key defines the components used in the formulas.

| Variable | Definition |
|---|---|
| ASL | Average sentence length (words per sentence) |
| ASW | Average syllables per word |
| L | Average number of letters per 100 words |
| S | Average number of sentences per 100 words |
| Complex Words | Words with three or more syllables |
| Polysyllables | Words with three or more syllables |

To further evaluate the ability of ChatGPT to simplify the surgical consent forms, two additional readability indices based on sentence counts and the number of words with three or more syllables were used: 1) the Gunning Fog Index and 2) the Simple Measure of Gobbledygook (SMOG) Index. The Gunning Fog Index gives a score equivalent to a United States school grade level, with scores of 13-16 corresponding to undergraduate levels and 17 and above to postgraduate difficulty. The SMOG Index is considered one of the most effective tools for measuring the readability of healthcare-related texts and is preferred by the CDC and other organizations to ensure that their target audiences will understand the texts. To ensure that reliance on syllable and sentence counts was not introducing error into the calculations, the Coleman-Liau Index and Automated Readability Index (ARI) were also employed, as these tests use average word length in characters rather than syllables. The Coleman-Liau Index is commonly used to evaluate whether textbooks are appropriate for their intended grade levels. The ARI was developed and validated for the United States Air Force to assess the readability of its documents.
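The four additional indices in Table 1 can likewise be computed once the underlying counts are available. The Python sketch below takes those counts as inputs; how words, sentences, letters, characters, and three-or-more-syllable words are counted is left to the caller and is an assumption here, and the function names are hypothetical. It illustrates the distinction drawn above: Gunning Fog and SMOG depend on counts of long words, whereas Coleman-Liau and ARI depend only on letters or characters per word.

```python
import math


def gunning_fog(words: int, sentences: int, complex_words: int) -> float:
    """Gunning Fog Index; complex_words = words with three or more syllables."""
    return 0.4 * (words / sentences + 100 * complex_words / words)


def smog(polysyllables: int, sentences: int) -> float:
    """SMOG Index; polysyllables = words with three or more syllables."""
    return 1.0430 * math.sqrt(30 * polysyllables / sentences) + 3.1291


def coleman_liau(letters: int, words: int, sentences: int) -> float:
    """Coleman-Liau Index; L and S are letters and sentences per 100 words."""
    L = letters / words * 100
    S = sentences / words * 100
    return 0.0588 * L - 0.296 * S - 15.8


def automated_readability(characters: int, words: int, sentences: int) -> float:
    """Automated Readability Index (uses characters per word, not syllables)."""
    return 4.71 * characters / words + 0.5 * words / sentences - 21.43


if __name__ == "__main__":
    # Hypothetical counts for a 100-word passage, for illustration only.
    print(gunning_fog(words=100, sentences=5, complex_words=10))
    print(smog(polysyllables=10, sentences=5))
    print(coleman_liau(letters=500, words=100, sentences=5))
    print(automated_readability(characters=500, words=100, sentences=5))
```

With these hypothetical counts (20 words per sentence, 10% complex words, 5 letters per word), the Gunning Fog Index is about 12, the SMOG Index about 11.2, and the Coleman-Liau and ARI scores both about 12.1, showing how differently weighted inputs can still land at similar grade levels.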

AI SIMPLIFICATION

The original consent forms were entered into ChatGPT with the prompt: "Convert this document to 6th grade reading level without decreasing original word count by more than 20%." The sixth-grade level was selected because it is the level recommended for patient-facing materials by the American Medical Association and the National Institutes of Health. The same readability indices were then used to score the newly produced documents. These simplified texts were independently reviewed by physicians familiar with these procedures who were not directly involved in the simplification process. The reviewers assessed the simplified consent forms for factual accuracy and for whether sufficient information was present to serve the intended purpose of informed consent. They also reviewed the documents for basic grammar, syntax, and general readability.
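The prompt above imposes a measurable constraint: no more than a 20% drop in word count. A minimal Python sketch of how an AI output could be checked against that constraint before physician review is shown below; the function names and the whitespace-based word count are illustrative assumptions rather than part of the study's workflow, and the example sentences are hypothetical. For context, the Results report a mean reduction of 43% in total words, so a check of this kind would have flagged reductions of that size for closer review of omitted content.

```python
def word_count(text: str) -> int:
    """Count whitespace-delimited tokens (a simplifying assumption)."""
    return len(text.split())


def word_reduction(original: str, simplified: str) -> float:
    """Fractional drop in word count, e.g. 0.25 means 25% fewer words."""
    n_original = word_count(original)
    if n_original == 0:
        raise ValueError("original text is empty")
    return (n_original - word_count(simplified)) / n_original


def within_word_budget(original: str, simplified: str, max_drop: float = 0.20) -> bool:
    """True if the simplified text keeps at least (1 - max_drop) of the original words."""
    return word_reduction(original, simplified) <= max_drop


if __name__ == "__main__":
    # Hypothetical sentences, for illustration only.
    original = "The patient may experience postoperative pain and numbness in the leg."
    simplified = "You may have pain and numbness in your leg after surgery."
    verdict = "within budget" if within_word_budget(original, simplified) else "exceeds budget"
    print(f"{word_reduction(original, simplified):.0%} fewer words; {verdict}")
```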

STATISTICAL ANALYSES

Descriptive statistics were used to summarize readability scores across each index (Flesch-Kincaid Grade Level, Flesch-Kincaid Reading Ease, Coleman-Liau Index, Automated Readability Index, Gunning Fog Index, and SMOG Index), reported as mean ± standard deviation for each consent type and across all forms collectively. To compare pre- and post-simplification scores, paired t-tests were performed for each readability metric. A two-tailed p-value <0.05 was considered statistically significant. Individual t-test results are reported in the Results section. Additionally, one-sample t-tests were used to assess the relative percent reduction in key linguistic variables (total number of sentences, sentences per paragraph, words per sentence, total words, and total characters) following ChatGPT simplification. All analyses were conducted using Microsoft Excel.
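The study performed these analyses in Microsoft Excel. As an illustrative alternative only, the Python sketch below shows the one-sample approach described above: compute each form's percent reduction for a linguistic variable, then test whether the mean reduction differs from zero. The helper names are hypothetical, and because per-form counts are not reported here, no study data are used.

```python
import math
import statistics


def percent_reduction(before: float, after: float) -> float:
    """Percent decrease from before to after (positive = reduction)."""
    return 100.0 * (before - after) / before


def one_sample_t(values: list[float], mu0: float = 0.0) -> tuple[float, int]:
    """Return (t statistic, degrees of freedom) for H0: mean(values) == mu0."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two values")
    # statistics.stdev uses the sample (n - 1) denominator, as the t-test requires.
    standard_error = statistics.stdev(values) / math.sqrt(n)
    return (statistics.mean(values) - mu0) / standard_error, n - 1
```

Given before/after counts for each of the four forms, `one_sample_t([percent_reduction(b, a) for b, a in pairs])` yields a t statistic with 3 degrees of freedom; `scipy.stats.ttest_1samp(values, 0)` would return the same statistic together with a p-value.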

Results

FLESCH-KINCAID GRADE LEVEL AND READING EASE SCORES

Before simplification, the mean Flesch-Kincaid Grade Level was 11.37±0.31, and the mean Flesch-Kincaid Reading Ease score was 44.51±1.38. After ChatGPT simplification of each consent form, the mean Flesch-Kincaid Grade Level significantly decreased to 6.67±0.65, while the mean Flesch-Kincaid Reading Ease score increased to 68.88±6.10 (Table 2).

Table 2. Readability scores for each consent form before (Original) and after (Simplified) ChatGPT simplification.

| Index | ACDF | LTPM | TLD | PCDF | Mean (SD) |
|---|---|---|---|---|---|
| FKGL Original | 11.25 | 11.02 | 11.45 | 11.75 | 11.37 (0.31) |
| FKGL Simplified | 5.83 | 6.53 | 7.33 | 6.97 | 6.67 (0.65) |
| FKRE Original | 44.82 | 46.31 | 43.74 | 43.17 | 44.51 (1.38) |
| FKRE Simplified | 77.73 | 66.73 | 63.77 | 67.27 | 68.88 (6.10) |
| CLI Original | 12.00 | 11.56 | 12.27 | 12.15 | 12.00 (0.31) |
| CLI Simplified | 7.85 | 7.44 | 9.14 | 7.92 | 8.09 (0.73) |
| ARI Original | 10.77 | 10.39 | 11.06 | 10.95 | 10.79 (0.29) |
| ARI Simplified | 5.37 | 5.00 | 7.03 | 5.74 | 5.79 (0.88) |
| Gunning Fog Original | 18.44 | 17.75 | 18.51 | 17.59 | 18.07 (0.47) |
| Gunning Fog Simplified | 10.73 | 10.78 | 12.64 | 12.43 | 11.65 (1.03) |
| SMOG Original | 15.82 | 15.42 | 15.88 | 15.68 | 15.70 (0.20) |
| SMOG Simplified | 10.70 | 10.70 | 12.04 | 11.82 | 11.32 (0.72) |

Paired t-tests demonstrated that ChatGPT simplification significantly reduced document complexity across all readability indices. Flesch-Kincaid Grade Level scores decreased [t(3)=17.12, p<0.001] (Figure 1A), and Flesch Reading Ease scores, for which higher values indicate greater readability, increased significantly [t(3)=8.143, p<0.005] (Figure 1B). Similar improvements were observed for the Coleman-Liau Index, Automated Readability Index, Gunning Fog Index, and SMOG Index (Figure 1C-F).
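Because Table 2 reports the per-form scores, the paired t-tests can be re-derived directly. The Python sketch below does this for the two Flesch-Kincaid measures as an illustrative check, not as the authors' Excel workflow. With only four forms there are 3 degrees of freedom, which permits a closed-form Student's t CDF; the `two_tailed_p_df3` helper is a hypothetical name and is valid only for df = 3.

```python
import math
import statistics

# Per-form scores from Table 2, ordered ACDF, LTPM, TLD, PCDF: (original, simplified).
TABLE2 = {
    "FKGL": ([11.25, 11.02, 11.45, 11.75], [5.83, 6.53, 7.33, 6.97]),
    "FKRE": ([44.82, 46.31, 43.74, 43.17], [77.73, 66.73, 63.77, 67.27]),
}


def paired_t(before: list[float], after: list[float]) -> tuple[float, int]:
    """Paired t statistic on the differences (before - after) and its degrees of freedom."""
    diffs = [b - a for b, a in zip(before, after)]
    n = len(diffs)
    t = statistics.mean(diffs) / (statistics.stdev(diffs) / math.sqrt(n))
    return t, n - 1


def two_tailed_p_df3(t: float) -> float:
    """Two-tailed p-value for Student's t with exactly 3 degrees of freedom.

    Uses the closed-form CDF F(t) = 1/2 + (theta + sin(theta)cos(theta)) / pi,
    where theta = atan(t / sqrt(3)); so p = 2 * (1 - F(|t|)).
    """
    theta = math.atan(abs(t) / math.sqrt(3))
    return 1.0 - 2.0 * (theta + math.sin(theta) * math.cos(theta)) / math.pi


if __name__ == "__main__":
    for name, (before, after) in TABLE2.items():
        t, df = paired_t(before, after)
        print(f"{name}: t({df}) = {t:.2f}, two-tailed p = {two_tailed_p_df3(t):.4f}")
```

Running it reproduces t(3) = 17.12 for the Flesch-Kincaid Grade Level and t(3) = -8.14 for Reading Ease (negative because scores rose after simplification), with two-tailed p-values below 0.001 and 0.005, respectively, consistent with the values reported above. For other sample sizes, `scipy.stats.ttest_rel(before, after)` returns the same statistic with a p-value from the general t distribution.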

COLEMAN-LIAU INDEX SCORES

Before simplification, the mean Coleman-Liau Index score was 12.00±0.31. After ChatGPT simplification, the mean Coleman-Liau Index score significantly decreased to 8.09±0.73 (Table 2). A paired t-test showed a significant reduction in the Coleman-Liau Index score [t(3)=15.02, p<0.001] (Figure 1C).

AUTOMATED READABILITY INDEX SCORES

Before simplification, the mean Automated Readability Index score was 10.79±0.29. After ChatGPT simplification, the mean Automated Readability Index score significantly decreased to 5.79±0.88 (Table 2). A paired t-test showed a significant reduction in the Automated Readability Index score [t(3)=15.23, p<0.001] (Figure 1D).

GUNNING FOG INDEX SCORES

Before simplification, the mean Gunning Fog index score was 18.07±0.47. After ChatGPT simplification, the mean Gunning Fog index score significantly decreased to 11.65±1.03 (Table 2). A paired t-test showed a significant reduction in the Gunning Fog index score [t(3)=11.34, p<0.005] (Figure 1E).

SMOG INDEX SCORES

Before simplification, the mean SMOG index score was 15.70±0.20. After ChatGPT simplification, the mean SMOG index score significantly decreased to 11.32±0.72 (Table 2). A paired t-test showed a significant reduction in the SMOG index score [t(3)=13.72, p<0.001] (Figure 1F).

LINGUISTIC VARIABLES

After ChatGPT simplification, one-sample t-tests showed a significant reduction in all measured linguistic variables. The mean total number of sentences decreased by 23% (p<0.01), sentences per paragraph by 18% (p<0.001), and words per sentence by 26% (p<0.001). The largest reductions were observed in mean total words (43%, p<0.001) and total characters (43%, p<0.001). Results are displayed in Figure 2.

PHYSICIAN DOCUMENT REVIEW

The simplified consent forms were reviewed independently by seven physicians from the authors' institution who were not involved in the simplification process. All physicians confirmed that every simplified consent form conveyed the information necessary for informed consent, including the relevant details of the procedures, risks, and benefits. Some physicians noted grammatical inconsistencies in the documents but felt these did not affect their overall effectiveness.

Discussion

The inadequate readability of surgical consent forms is a long-standing problem, with potential consequences for patients who undergo surgery without fully comprehending the information provided. Although Grundner identified this problem as early as 1980, the readability of consent forms remains a challenge across medical specialties, including spine surgery, with the average consent form still written at a reading level far exceeding the national average. Poorly readable forms disproportionately affect patients from low-income backgrounds, racial and ethnic minorities, and elderly patients, who statistically have lower health literacy. Simplifying these forms is therefore essential to addressing health disparities and ensuring equitable care. Given the rapid integration of artificial intelligence into clinical practice, we aimed to assess the potential of ChatGPT to improve the readability of spine surgery consent forms.

The average American reads at an estimated 8th grade level, yet most medical documents, including surgical consents, are written at a much higher reading level. In our analysis, the Flesch-Kincaid Grade Level scores for the original spine surgical consent documents corresponded to an 11th grade reading level. Their Flesch-Kincaid Reading Ease scores indicated that the documents are difficult to read and generally understandable only to individuals with a college-level reading ability.

The AI-simplified documents, with Flesch-Kincaid Grade Levels between the 5th and 7th grades, demonstrated measurable improvements in readability while remaining medically accurate. The Flesch-Kincaid Grade Level and Automated Readability Index scores approached the intended 6th-grade target, and all six indices decreased significantly with the given prompt, although the Gunning Fog and SMOG scores remained near an 11th-grade level. Furthermore, the mean Reading Ease score of 68.88 indicated that the simplified consent forms could generally be understood by 15- to 17-year-old readers and fell within the "standard" (60-70) band of the Flesch scale. We also observed a significant reduction in linguistic complexity, as measured by reductions in total words, sentences, characters, words per sentence, and sentences per paragraph. The improved readability and reduced complexity of these forms may enhance patient understanding, particularly for those with average reading levels, while also keeping the time needed to read the forms in their entirety reasonable.

Traditional interventions to improve patient comprehension during consent, such as recording clinical encounters, incorporating additional audiovisual material, and using verbal techniques such as asking the patient to teach back the procedure, have shown promise. However, these interventions require substantial time, preparation, and resources, and their quality can vary. By combining ChatGPT with expert human review, this study presents a scalable and efficient approach for institutions seeking to improve patient communication while reducing the labor of manual rewriting. Practicing spine surgeons reviewed and validated the AI-simplified consent forms to identify inaccuracies and ensure that no critical information was lost. This combination of AI-driven simplification and expert review aligns with broader healthcare trends toward digital transformation and innovation in patient care.

This approach also helps reinforce informed consent as a cornerstone of ethical medical practice. If patients are unable to fully understand the content of surgical consent forms, the validity of their consent becomes ethically questionable. Simplified consent forms, including those developed with AI assistance, have been shown to improve readability, patient comprehension, and satisfaction with the consent process. By helping patients better understand the nature and implications of their procedure, clearer consent materials may further strengthen the trust and collaboration between patients and physicians that is essential during complex surgical decision-making. These improvements could also support better adherence and potentially improved clinical outcomes, although evidence for these downstream effects remains limited.

Notably, AI-mediated document simplification is not limited to consent forms and may have broader applications in healthcare. The same methodology could be applied to a wide range of medical documents, from discharge instructions to patient education materials, with the potential to improve communication across medical settings. Future projects could also assess AI's application outside the surgical space, including hospital communications such as promotional materials and public websites, as well as outpatient care, chronic disease management, and preventive health, where understanding instructions and recommendations is critical to long-term patient success.

This study has some notable limitations. First, readability indices do not capture other aspects of comprehension, such as cultural relevance or patient anxiety when reading consent forms. However, the observed improvements in readability scores provide a promising foundation for expanding this approach in the future. We acknowledge that physicians must continue to use original medical terminology and consent forms when explaining procedures; however, simplified materials can serve as a valuable tool to enhance patient understanding. Second, the small sample size (n=4) reduces the generalizability of the results, although the statistically significant findings suggest potential for reducing consent form complexity. Moving forward, we plan to address these limitations by evaluating patient comprehension through post-intervention surveys and interviews and by increasing the number of surgical consent forms reviewed. We also intend to expand this work to other common orthopaedic procedures and to involve additional surgical departments within our institution, which will allow us to evaluate the broader applicability of our AI approach across a larger and more diverse set of consent forms.

Conclusion

This study demonstrated that ChatGPT can improve the readability of spine surgery informed consent forms while preserving the accuracy and completeness of the information provided. Across all readability indices, including the Flesch-Kincaid Grade Level and Reading Ease, Coleman-Liau Index, Automated Readability Index, Gunning Fog Index, and SMOG Index, the simplified forms showed markedly lower complexity and were more consistent with reading levels appropriate for the general population. These improvements also reflected significant reductions in total words, sentences, characters, words per sentence, and sentences per paragraph. Independent physician review confirmed that all essential procedural details, risks, and benefits remained intact in the simplified versions.

The findings indicate that AI-assisted simplification, when combined with expert clinical oversight, offers a scalable and efficient method for improving the clarity of surgical consent materials. Enhancing readability has the potential to strengthen patient comprehension and to support more ethical and equitable delivery of surgical care. Future work should expand these methods to additional procedures and evaluate patient comprehension directly in order to further establish the clinical value of this approach.

Conflicts of Interest:

The authors have no conflicts of interest to declare.

Funding Source Disclosure:

None

Acknowledgements:

None

References:

- Sand K, Eik-Nes NL, Loge JH. Readability of Informed Consent Documents (1987-2007) for Clinical Trials: A Linguistic Analysis. J Empir Res Hum Res Ethics. 2012;7(4):67-78. doi:10.1525/jer.2012.7.4.67

- Mertz K, Burn MB, Eppler SL, Kamal RN. The Reading Level of Surgical Consent Forms in Hand Surgery. J Hand Surg Glob Online. 2019;1(3):149-153. doi:10.1016/j.jhsg.2019.04.003

- Lin GT, Mitchell MB, Hammack-Aviran C, Gao Y, Liu D, Langerman A. Content and Readability of US Procedure Consent Forms. JAMA Intern Med. 2024;184(2):214. doi:10.1001/jamainternmed.2023.6431

- Eltorai AEM, Naqvi SS, Ghanian S, et al. Readability of Invasive Procedure Consent Forms. Clin Transl Sci. 2015;8(6):830-833. doi:10.1111/cts.12364

- Foe G, Larson EL. Reading Level and Comprehension of Research Consent Forms: An Integrative Review. J Empir Res Hum Res Ethics. 2016;11(1):31-46. doi:10.1177/1556264616637483

- García-Álvarez JM, García-Sánchez A. Readability of Informed Consent Forms for Medical and Surgical Clinical Procedures: A Systematic Review. Clin Pract. 2025;15(2):26. doi:10.3390/clinpract15020026

- Institute of Medicine (US) Committee on Health Literacy. Health Literacy: A Prescription to End Confusion. (Nielsen-Bohlman L, Panzer AM, Kindig DA, eds.). National Academies Press (US); 2004. Accessed November 19, 2025. http://www.ncbi.nlm.nih.gov/books/NBK216032/

- Donovan-Kicken E, Mackert M, Guinn TD, Tollison AC, Breckinridge B, Pont SJ. Health Literacy, Self-Efficacy, and Patients’ Assessment of Medical Disclosure and Consent Documentation. Health Commun. 2012;27(6):581-590. doi:10.1080/10410236.2011.618434

- Zimmermann A, Pilarska A, Gaworska-Krzemińska A, Jankau J, Cohen MN. Written Informed Consent—Translating into Plain Language. A Pilot Study. Healthc Basel Switz. 2021;9(2):232. doi:10.3390/healthcare9020232

- Hadden KB, Prince LY, Moore TD, James LP, Holland JR, Trudeau CR. Improving readability of informed consents for research at an academic medical institution. J Clin Transl Sci. 2017;1(6):361-365. doi:10.1017/cts.2017.312

- Flory J, Emanuel E. Interventions to improve research participants’ understanding in informed consent for research: a systematic review. JAMA. 2004;292(13):1593-1601. doi:10.1001/jama.292.13.1593

- Stunkel L, Benson M, McLellan L, et al. Comprehension and informed consent: assessing the effect of a short consent form. IRB. 2010;32(4):1-9.

- Borello A, Ferrarese A, Passera R, et al. Use of a simplified consent form to facilitate patient understanding of informed consent for laparoscopic cholecystectomy. Open Med Wars Pol. 2016;11(1):564-573. doi:10.1515/med-2016-0092

- Drake BF, Brown KM, Gehlert S, et al. Development of Plain Language Supplemental Materials for the Biobank Informed Consent Process. J Cancer Educ. 2017;32(4):836-844. doi:10.1007/s13187-016-1029-y

- Mizrahi M, Kaplan G, Malkin D, Dror R, Shahaf D, Stanovsky G. State of What Art? A Call for Multi-Prompt LLM Evaluation. Trans Assoc Comput Linguist. 2024;12:933-949. doi:10.1162/tacl_a_00681

- Gill B, Bonamer J, Kuechly H, et al. ChatGPT is a promising tool to increase readability of orthopedic research consents. J Orthop Trauma Rehabil. 2024;31(2):148-152. doi:10.1177/22104917231208212

- Ali R, Connolly ID, Tang OY, et al. Bridging the literacy gap for surgical consents: an AI-human expert collaborative approach. NPJ Digit Med. 2024;7(1):63. doi:10.1038/s41746-024-01039-2

- Bothun LS, Feeder SE, Poland GA. Readability of Participant Informed Consent Forms and Informational Documents. Mayo Clin Proc. 2021;96(8):2095-2101. doi:10.1016/j.mayocp.2021.05.025

- Paasche-Orlow MK, Taylor HA, Brancati FL. Readability Standards for Informed-Consent Forms as Compared with Actual Readability. N Engl J Med. 2003;348(8):721-726. doi:10.1056/NEJMsa021212

- Coleman M, Liau TL. A computer readability formula designed for machine scoring. J Appl Psychol. 1975;60(2):283-284. doi:10.1037/h0076540

- Gunning R. The Technique of Clear Writing. McGraw-Hill; 1952.

- Kincaid JP, Fishburne RP Jr, Rogers RL, Chissom BS. Derivation of New Readability Formulas (Automated Readability Index, Fog Count and Flesch Reading Ease Formula) for Navy Enlisted Personnel. Defense Technical Information Center; 1975. doi:10.21236/ADA006655

- McLaughlin GH. SMOG Grading: A New Readability Formula. J Read. 1969;12(8):639-646.

- Smith EA, Senter RJ. Automated readability index. AMRL-TR Aerosp Med Res Lab US. Published online May 1967:1-14.

- Fitzsimmons P, Michael B, Hulley J, Scott G. A readability assessment of online Parkinson’s disease information. J R Coll Physicians Edinb. 2010;40(4):292-296. doi:10.4997/JRCPE.2010.401

- Grundner TM. On the Readability of Surgical Consent Forms. N Engl J Med. 1980;302(16):900-902. doi:10.1056/NEJM198004173021606

- Eltorai A, Ghanian S, Adams C, Born C, Daniels A. Readability of Patient Education Materials on the American Association for Surgery of Trauma Website. Arch Trauma Res. 2014;3(1). doi:10.5812/atr.18161

- Gordon EJ, Bergeron A, McNatt G, Friedewald J, Abecassis MM, Wolf MS. Are informed consent forms for organ transplantation and donation too difficult to read? Clin Transplant. 2012;26(2):275-283. doi:10.1111/j.1399-0012.2011.01480.x

- Hannabass K, Lee J. Readability Analysis of Otolaryngology Consent Documents on the iMed Consent Platform. Mil Med. 2023;188(3-4):780-785. doi:10.1093/milmed/usab484

- Fleary SA, Ettienne R. Social Disparities in Health Literacy in the United States. HLRP Health Lit Res Pract. 2019;3(1). doi:10.3928/24748307-20190131-01

- Schillinger D. Social Determinants, Health Literacy, and Disparities: Intersections and Controversies. Health Lit Res Pract. 2021;5(3):e234-e243. doi:10.3928/24748307-20210712-01

- Glaser J, Nouri S, Fernandez A, et al. Interventions to Improve Patient Comprehension in Informed Consent for Medical and Surgical Procedures: An Updated Systematic Review. Med Decis Making. 2020;40(2):119-143. doi:10.1177/0272989X19896348

- Feinberg IZ, Gajra A, Hetherington L, McCarthy KS. Simplifying informed consent as a universal precaution. Sci Rep. 2024;14(1):13195. doi:10.1038/s41598-024-64139-9

- Coyne CA, Xu R, Raich P, et al. Randomized, controlled trial of an easy-to-read informed consent statement for clinical trial participation: a study of the Eastern Cooperative Oncology Group. J Clin Oncol Off J Am Soc Clin Oncol. 2003;21(5):836-842. doi:10.1200/JCO.2003.07.022

- Tait AR, Voepel-Lewis T, Malviya S, Philipson SJ. Improving the Readability and Processability of a Pediatric Informed Consent Document: Effects on Parents’ Understanding. Arch Pediatr Adolesc Med. 2005;159(4):347. doi:10.1001/archpedi.159.4.347

- Hansberry DR, Agarwal N, Shah R, et al. Analysis of the readability of patient education materials from surgical subspecialties: Readability of Patient Education Material. The Laryngoscope. 2014;124(2):405-412. doi:10.1002/lary.24261

- Ammanuel SG, Edwards CS, Alhadi R, Hervey-Jumper SL. Readability of Online Neuro-Oncology Related Patient Education Materials from Tertiary-Care Academic Centers. World Neurosurg. 2020;134:e1108-e1114. doi:10.1016/j.wneu.2019.11.109

- Rivera Perla KM, Tang OY, Durfey SNM, et al. Predicting access to postoperative treatment after glioblastoma resection: an analysis of neighborhood-level disadvantage using the Area Deprivation Index (ADI). J Neurooncol. 2022;158(3):349-357. doi:10.1007/s11060-022-04020-9