Bridging Global Health AI Divide with Local Wisdom

The Algorithm and the Village: Bridging the Global Health AI Divide with Local Wisdom

Sajda Qureshi, Ph.D1*; Ali Khan, MD, MPH, MBA2

- Distinguished Visiting Professor in the Faculty of College of Business & Economics, University of Johannesburg, D.B. and Paula Varner Professor of Information Systems, Director ITD Cloud Computing Lab, Department of Information Systems & Quantitative Analysis, College of Information Science & Technology. University of Nebraska at Omaha, 6001 Dodge Street, Omaha, NE 68182-0116

- Richard Holland Presidential Chair, Dean, University of Nebraska Medical Center College of Public Health, Assistant Surgeon General, U.S. Public Health Service (retired), 42nd and Emile, Omaha, Nebraska 68198

OPEN ACCESS

PUBLISHED 31 August 2025

CITATION Qureshi, S., Khan, A., et al., 2025. The Algorithm and the Village: Bridging the Global Health AI Divide with Local Wisdom. Medical Research Archives, [online] 13(8). https://doi.org/10.18103/mra.v13i8.6853

COPYRIGHT © 2025 European Society of Medicine. This is an open-access article distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original author and source are credited.

DOI https://doi.org/10.18103/mra.v13i8.6853

ISSN 2375-1924

ABSTRACT

Local use of artificial intelligence offers opportunities for improved global health outcomes. Global health ecosystems comprising mobile applications include generative Artificial Intelligence (AI) capabilities that support public health in low- and middle-income communities around the world. However, challenges remain in the datasets, training and use of the Large Language Models used to support public health interventions. Following a review of the use of artificial intelligence in global health, this paper addresses one of the most difficult challenges of implementing generative artificial intelligence in global health, that of algorithmic bias, and offers a model of collective governance that involves local participation in the creation of the datasets and training to ensure algorithmic accountability. This conceptualization of global health involves access to resource networks through which health diagnosis, interventions and treatments may be carried out at any place at any time. To ensure that AI serves as a catalyst for equitable and sustainable development in public health and healthcare and addresses existing disparities, a principled framework for its design, governance, and implementation is essential for global public health. The policy framework offered here emphasizes local ownership, human-centricity, and a proactive approach to ethical considerations, moving beyond a model where AI is simply developed for low- and middle-income communities to one where it is genuinely designed, governed, and implemented by them, with a specific focus on health outcomes. The contribution of this policy paper is a novel approach to public health that involves co-creation and collective responsibility for governance in global health systems.

Keywords

- Artificial Intelligence

- Global Health

- Algorithmic Bias

- Public Health

- Collective Governance

INTRODUCTION

This policy paper addresses a very important challenge in understanding the role of public health as it relates to all health services. The classic definition of public health is “the science and art of preventing disease, prolonging life, and promoting health through the organized efforts and informed choices of society, organizations, public and private, communities, and individuals” (Winslow 1920). This definition captures the science and art of public health, the focus on prevention, and the collective effort for population health. It also differentiates public health from healthcare, the care of the individual. However, over the last century, this distinction has blurred as healthcare has become better understood as the services and systems that address individual health, and public health has become engaged in assessing and improving those services and systems. This reframes healthcare, not unlike environmental health, as a subset of public health. Global health refers to health issues that transcend national borders and require global cooperation to ensure health equity for all people. Global health is seen as the health of all countries, interlinked and focused on equity or at least the maximum health for all citizens within their national context. Unfortunately, the practice of global health is often a north-south divide, with foreign development assistance for low- and middle-income countries (LMICs) based on funder priorities. Notions of global health vary from the lofty goals of improving health and achieving health equity for all people worldwide to addressing health inequities, such as those in mortality rates, in communities of low socioeconomic status (SES) by creating access through funding (Beaglehole and Bonita 2010, Howitt et al 2012). Lakoff (2010) simplistically and cynically identifies two regimes of global health: global health security and humanitarian biomedicine. While the current global health framework focuses on health system strengthening and vertical programs (HIV, polio, Ebola response), more value can be extracted from existing investments by linking these with so-called horizontal (primary care networks, universal healthcare efforts), diagonal (HIV linked to strengthening the health system) and One Health approaches. A more powerful framework offered here is based on objectives: national security concerns, humanitarian assistance, economic and commercial interests, foreign policy interests, poverty reduction and sustainable development, and global public goods.

The digitalization of global health through AI and Machine Learning (ML) tools has taken place largely through Global Health Security, which focuses on the containment of infectious diseases. These containment efforts use a combination of predictive analytics for tracking, tracing and forecasting the spread of infections that are seen to threaten high-income countries, and which typically (though not always) emanate from Asia, sub-Saharan Africa, or Latin America. Humanitarian biomedicine, in contrast, targets diseases that currently afflict the poorer nations of the world, such as malaria, tuberculosis, and HIV/AIDS (Howitt et al 2012, Fletcher et al 2021). With dramatic cuts to global funding, countries, and in particular low-resource communities, need more creative solutions. AI appears to be one of those health technology tools that may improve the health of people in low- and middle-income communities worldwide. Some suggest that AI is integral to the future of global health, as these tools can be used as part of existing health infrastructures to support clinical interventions through generative AI tools as well as the prediction, tracking and tracing of disease (Schwalbe and Wahl 2020, Hadley et al 2020, Howitt et al 2012), while mitigating the harmful effects of AI/ML bias in global health (Fletcher et al 2021).

A plethora of AI-powered tools are gaining traction in global health. This transformation of global public health through many forms of artificial intelligence systems has brought significant investment in, and commercialization of, AI globally, and its use is becoming ubiquitous in healthcare, from documentation support and efficiency improvements to clinical decision tools. There is a sense that adoption of AI to support health interventions in low-resource communities can lead to improved health outcomes while reducing the workloads of health workers (Schwalbe & Wahl 2020, Wahl et al 2018). In conceptualizing the future of global health, this paper contends that artificial intelligence (AI) offers a significant opportunity to advance global public health, particularly by enabling “leapfrogging” in low- and middle-income communities, analogous to the transformative impact of mobile technology in sectors like banking. The core argument is that AI should be conceptualized and deployed as a potent tool that enhances, rather than replaces, human expertise, analogous to a stethoscope. By improving the efficiency of the existing limited health infrastructure and by making clinicians and public health professionals more effective, AI can streamline processes such as initial patient triage, allowing for more efficient allocation of limited human resources. Inequities in care can then be addressed in complex cases requiring empathy, nuanced clinical judgment, and the profound patient-clinician connection that defines quality care. In the policy framework offered here, AI serves as a force multiplier, guiding individuals to appropriate medical attention and empowering health systems, especially in resource-constrained settings, to achieve accelerated progress in health outcomes.

At the center of the digitalization of global health through AI lies the algorithm. It is the basis upon which healthcare applications are created, and it relies on vast amounts of data created globally to offer specific interventions. Left to scour the web, greedy AI models feed on the vast datasets produced by people in what becomes the global village. The challenge is to ensure that the data fed into the AI engines is accurate, clean and representative of the populations the tools are intended to serve. To be effective, bridging the global health AI divide will require local participants to help train the AI models with local wisdom.

The purpose of this paper is to address one of the most difficult challenges of implementing generative artificial intelligence in global health, that of algorithmic bias. In doing so it offers a model of collective governance that involves local participation in the creation of the datasets and training to ensure algorithmic accountability. The question answered in this paper is: how can artificial intelligence tools be used to support global health to ensure equitable outcomes? The contribution of this policy paper is a novel approach to public health that involves co-creation and collective responsibility for governance in global health systems. The following sections offer a methodology and an overview of the role of AI in global health, and then discuss the future of global health as AI transforms the way in which healthcare is delivered. Because machine learning algorithms accentuate biases, inequities in healthcare delivery worsen global health outcomes. Following an explanation of algorithmic bias and strategies to mitigate it, this paper offers a policy framework containing laws of responsible AI for bridging the global health AI divide with local wisdom.

METHODOLOGY

An interpretive inductive research approach is followed for the conceptualization and creation of the policy framework offered in this paper. This is an accepted approach for developing policy. According to Browne (2019) such interpretive approaches examine the framing and representation of problems and how policies reflect the social construction of the challenges identified. In this way the analysis offered in this paper can offer a means to apply AI tools while informing practitioners in the use of the AI techniques. The design of this study begins by reviewing the literature on the role of artificial intelligence in global health to offer a theoretical lens through which the phenomenon can be conceptualized. In order to understand how AI can improve global health outcomes, small case studies or vignettes are used to support context-specific effects in policy adaptation, evolution and implementation (Walt et al 2008). The resulting conceptual lens helps address algorithmic bias and is the basis for the policy framework which ensures that AI serves as a catalyst for equitable and sustainable development in public global health to address inequities in healthcare.

ROLE OF ARTIFICIAL INTELLIGENCE IN GLOBAL HEALTH

AI is integral to the future of global health (Schwalbe and Wahl, 2020). AI-driven health interventions enable diagnosis using machine learning; natural language processing and signal processing methods are often used together with machine learning to automate the diagnosis of communicable diseases. Most diagnostic interventions using AI in LMICs reported either high sensitivity, specificity, or high accuracy (>85% for all), or non-inferiority to comparator diagnostic tools. Machine learning aids clinicians in diagnosing tuberculosis and expert systems are used for diagnosing tuberculosis and malaria (Kuok et al 2019, Elveren et al 2011, Osamore et al 2014). Morbidity and mortality risk assessment is another area for which AI driven interventions have been assessed in the global health context. These interventions are based largely on machine learning classification tools and typically compare multiple machine learning approaches with the aim of identifying the optimal approach to characterize risk. Disease prediction and surveillance is the use of machine learning and data mining, together with data from online social media networks and search engines. One study used this approach to predict dengue outbreaks and other studies to track and predict influenza outbreaks. All studies reported high accuracy compared with observed data. Social media data and machine learning using artificial neural networks were also used to improve surveillance of HIV in China. AI-driven health interventions can also be used to support programme policy and planning. One such study used data from a health facility in Brazil and an agent-based simulation model to compare programme options aimed at increasing the overall efficiency of the health workforce (Schwalbe and Wahl 2020, Andrade et al 2010).
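
To make the reported metrics concrete, the following minimal sketch shows how sensitivity, specificity and accuracy are derived from the counts of a diagnostic confusion matrix. The function name `diagnostic_metrics` and all counts are hypothetical and invented for illustration; they are not taken from the studies cited above.

```python
def diagnostic_metrics(tp: int, fp: int, tn: int, fn: int) -> dict:
    """Return sensitivity, specificity and accuracy from confusion-matrix counts."""
    positives = tp + fn   # people who truly have the disease
    negatives = tn + fp   # people who truly do not
    if positives == 0 or negatives == 0:
        raise ValueError("need at least one diseased and one healthy case")
    return {
        "sensitivity": tp / positives,            # share of true cases detected
        "specificity": tn / negatives,            # share of healthy cases cleared
        "accuracy": (tp + tn) / (positives + negatives),
    }


# Hypothetical balanced screening sample (illustrative numbers only).
balanced = diagnostic_metrics(tp=45, fp=20, tn=180, fn=5)

# Hypothetical low-prevalence sample in which most true cases are missed.
imbalanced = diagnostic_metrics(tp=10, fp=0, tn=950, fn=40)

for name, result in (("balanced", balanced), ("imbalanced", imbalanced)):
    print(name, {k: round(v, 3) for k, v in result.items()})
```

In the second, low-prevalence example, accuracy is high even though most true cases are missed, which is one reason sensitivity and specificity are usually read alongside accuracy.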

Generative Artificial Intelligence (AI) in the form of Large Language Models (LLMs) such as GPT-4, BERT and Claude is becoming a powerful tool with significant applications in healthcare. Generative AI is transforming multiple industries, including healthcare, by processing and generating complex data including images (Mesko & Topol, 2023). It has demonstrated utility in handling clinical documentation, streamlining electronic health records and facilitating human-like patient communication (Xue et al, 2023), thus improving patient visits. Generative AI is capable of assisting in diagnosing diseases, suggesting treatment plans, and managing health records efficiently. It can provide insights to healthcare providers by processing vast amounts of medical data, which can enhance healthcare and operational efficiency. However, the integration of generative AI in healthcare does not come without challenges. Transparency and accountability are major concerns because of the “black box” nature of AI systems; both are critical in medical settings, where generative AI decisions significantly affect patients' lives (Shin & Park 2019). Ethical considerations, such as privacy, consent, and the potential amplification of existing biases, must be meticulously managed (Zhang et al., 2023). Regulatory frameworks need to evolve to keep pace with technological advancements to ensure that generative AI tools are safe, reliable, and fair (Zhang et al., 2023). Despite these challenges, the potential benefits of generative AI in healthcare are substantial: earlier and more accurate diagnoses and improved health outcomes, all while potentially reducing costs. To fully realize these benefits, establishing robust regulations will be crucial in maintaining trust in AI-assisted healthcare services and ensuring that these technologies enhance rather than compromise patient care (Zhang et al., 2023), complement the skills of medical professionals, and adhere to strict standards of safety and efficacy. As these technologies evolve, continuous collaboration between AI developers, healthcare professionals, and regulatory bodies will be crucial to address the ethical implications and integrate these tools responsibly into healthcare practice. With the rise of AI applications in many aspects of social life, these risks are exacerbated, increasing the inequities that were already present before the digital technologies were introduced.

There is an important role for AI to play in addressing social determinants of health (SDOH). In their analysis of data from the World Health Organization, Qureshi et al (2025) found that a unit increase in the Human Capital Index (HCI) of a country adds about 0.79 to the AI index of that country; conversely, a unit increase in the lifestyle factors of a given country reduces its AI index by 0.08. The model adequacy, measured by its R-squared, confirmed that all four social determinants of health (Reproductive and Maternal Health, Education, Lifestyle and Geo-Economic Factors) together explained up to 65.8% of the variation in the AI index. Hence, the result from this analysis suggests that the HCI and lifestyle factors of a country play a substantial role in explaining its AI characteristics. The role of AI in mitigating syndemics is also important. A syndemic can be described as the clustering of two or more disease conditions within a specific population, a clustering process that contributes to and results from continuing social and economic inequalities among this populace (Mendenhall et al., 2017). In theory, three concepts are needed to formulate the idea of a syndemic: i) disease concentration, ii) disease interaction, and iii) large-scale social forces from which the diseases emanate (Tsai et al., 2017). In practical terms, the syndemic concept applies to the study of adverse interactions between diseases and health conditions of all kinds that can be traced to health inequality, ranging from infectious diseases to non-communicable diseases, mental health and behavioral conditions, malnutrition and more (Singer et al., 2017). Some researchers have also established syndemics as an approach for investigating the synergistic and harmful interactions among co-occurring health conditions that tend to escalate under poor structural, social and political conditions (Singer et al., 2017). Qureshi and Oladokun (2025) found that syndemics, measured through a set of independent variables, had a significant relationship with the AI index. More specifically, the significant interaction effects between all three SDOH variables (government health expenditure, human capital index and AI index) and multiple independent variables suggest that each SDOH variable moderates the relationship between substance abuse, mental health disorders and IDI.
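
As a reading aid, the two coefficients reported earlier in this section can be combined into a single expression. This is only a sketch: it assumes an additive linear model in which all other predictors are held fixed, and the symbols $\Delta\mathrm{HCI}$ and $\Delta L$ (change in the lifestyle factor), as well as the example change of 0.1, are illustrative assumptions rather than values from the study.

```latex
\Delta \widehat{\mathrm{AI}} \;\approx\; 0.79\,\Delta\mathrm{HCI} \;-\; 0.08\,\Delta L
```

Under this assumption, an increase of 0.1 in HCI with lifestyle factors unchanged would correspond to an increase of about $0.79 \times 0.1 = 0.079$ in the AI index.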

THE FUTURE OF GLOBAL HEALTH

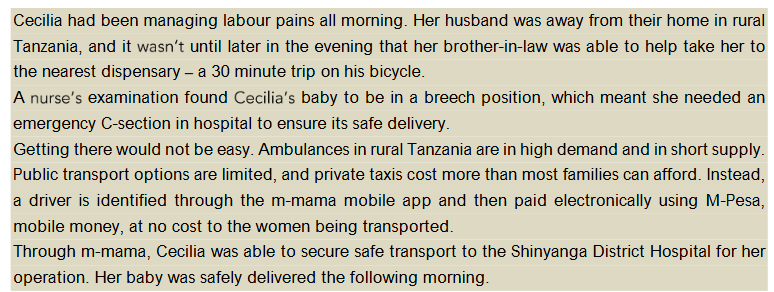

For artificial intelligence and machine learning tools to be used effectively in healthcare interventions, people need to know how to use these tools and when they cannot be used. This means that human digital development is needed to address global health challenges. Digital spaces offer expanded economic and social opportunities to exercise human agency, and digital health is the use of these technologies to achieve improved health outcomes. The term human digital development refers to the exercise of human agency using information and communication technologies (ICTs), in particular human interactions in cyberspace, to offer new ways in which people may lead the lives they choose to live (Qureshi 2022). Successful uses of AI will require both commercial and guardian modes of governance to interact (Jacobs 1994). This means that digitally intelligent approaches to global health will, at their heart, require people to use AI as part of their economic exchanges of healthcare goods and services. To keep the promise of helping people access the socio-economic resources they need to stay healthy, the guardian offers services through public funds. In global health, generative AI systems could go beyond the capabilities of standard conversational AI by not only engaging in interactions but also creating the content of their responses, offering more comprehensive, real-time interactions. The following example illustrates how technology is connecting mothers with critical health services in Africa:

Cecilia had been managing labour pains all morning. Her husband was away from their home in rural Tanzania, and it was not until later in the evening that her brother-in-law was able to help take her to the nearest dispensary, a 30-minute trip on his bicycle. An examination found the baby to be in a breech position, which meant she needed an emergency C-section in hospital to ensure its safe delivery. Getting there would not be easy. Ambulances in rural Tanzania are in high demand and in short supply. Public transport options are limited, and private taxis cost more than most families can afford. Instead, a driver is identified through the m-mama mobile app and then paid electronically using M-Pesa, mobile money, at no cost to the women being transported. Through m-mama, Cecilia was able to secure safe transport to the Shinyanga District Hospital for her operation. Her baby was safely delivered the following morning.

This is an example of the convergence of mobile phone technology with artificial intelligence to offer life-saving services in under-served areas of the world. In southern and eastern Africa, the use of generative artificial intelligence to assist with diagnosis, remedies and access to treatments is growing in communities where access to care is limited. AI technology is also set to deeply influence how content is produced and created. Key developments like ChatGPT and DALL-E have been instrumental in the rising prominence of generative AI in the 21st century. ChatGPT, a generative AI-powered chatbot, crafts responses that mimic human speech based on its training. In a similar vein, DALL-E uses textual prompts to create lifelike images, showcasing another facet of generative AI. These innovations are reshaping our understanding of content creation (Mannuru et al., 2023). Recent advances in AI techniques and Machine Learning algorithms have rewarded retailers using digital assistants with higher customer service satisfaction. Healthcare applications in precision medicine and drug discovery are using ML approaches as a powerful and efficient way to use the large amounts of data generated from genomics and modern drug discovery to model small molecule drugs and gene biomarkers and to identify novel drug targets for various diseases (Qureshi 2022).

With the advent of generative Artificial Intelligence (AI), the integration of AI into various systems across industries is underway. Machine Learning (ML) is a well-known application of AI that is being employed to understand large datasets comprising a variety of data types from various sources. AI technology in mHealth has to deal with clinical data, prescription data, MRI scans, CT images, insurance data, and laboratory data. Doctors are using simple ML methods in an AI-based mHealth application to generate alerts that identify patients at risk of heart failure. Applying a Naïve Bayes classifier to identify heart failure reduced false alerts from 28.64 to 7.8 per patient (Larbubu et al, 2018). This system has made it easier to predict the possible risk of heart failure among patients connected through the alert system on the mHealth application. Vandelanotte et al. (2023) propose a novel approach to mHealth interventions using machine learning for real-time personalization. Their study highlights the integration of various data sources and the use of a likable digital assistant, demonstrating the potential of AI in health behavior change. Han et al. (2022) introduce a novel method to extract social determinants of health (SDOH) information from electronic health records using deep learning-based natural language processing (NLP). This approach emphasizes the importance of identifying a comprehensive set of SDOH in clinical practice, underscoring the potential of advanced analytics in healthcare. Beyond the studies above, Sangers et al. (2022) study skin cancer risk assessment using mHealth consumer apps integrated with deep learning, and Xu et al. (2023) point out that AI/ML has rapidly advanced precision medicine through the design and analysis of treatment and prevention strategies tailored to individuals' unique characteristics. Together, these studies give confidence that AI can be successfully integrated into mHealth applications.
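
To illustrate how a Naïve Bayes classifier can drive an alert of the kind described above, here is a minimal sketch using only the Python standard library. It is not the model from the cited study: the three yes/no features (rapid weight gain, low oxygen saturation, swollen ankles), the eight training records and the function names are all hypothetical and invented for illustration.

```python
import math
from collections import Counter, defaultdict


def train_naive_bayes(rows, labels):
    """Count class and per-feature value frequencies for categorical Naive Bayes."""
    class_counts = Counter(labels)
    # feature_counts[label][feature_index][value] -> number of records
    feature_counts = defaultdict(lambda: defaultdict(Counter))
    values_seen = defaultdict(set)
    for row, label in zip(rows, labels):
        for i, value in enumerate(row):
            feature_counts[label][i][value] += 1
            values_seen[i].add(value)
    return class_counts, feature_counts, values_seen


def predict_proba(model, row):
    """Return P(label | row), assuming features are independent given the label."""
    class_counts, feature_counts, values_seen = model
    total = sum(class_counts.values())
    log_scores = {}
    for label, n_label in class_counts.items():
        score = math.log(n_label / total)  # class prior
        for i, value in enumerate(row):
            count = feature_counts[label][i][value]
            # Add-one (Laplace) smoothing avoids zero probabilities.
            score += math.log((count + 1) / (n_label + len(values_seen[i])))
        log_scores[label] = score
    top = max(log_scores.values())  # normalise in log space for stability
    exp_scores = {k: math.exp(v - top) for k, v in log_scores.items()}
    norm = sum(exp_scores.values())
    return {k: v / norm for k, v in exp_scores.items()}


# Hypothetical records: (rapid_weight_gain, low_oxygen, swollen_ankles)
rows = [
    ("yes", "yes", "yes"), ("yes", "yes", "no"), ("yes", "no", "yes"),
    ("no", "no", "no"), ("no", "no", "yes"), ("no", "yes", "no"),
    ("no", "no", "no"), ("yes", "no", "no"),
]
labels = ["alert", "alert", "alert", "ok", "ok", "ok", "ok", "ok"]

model = train_naive_bayes(rows, labels)
high_risk = predict_proba(model, ("yes", "yes", "yes"))
low_risk = predict_proba(model, ("no", "no", "no"))
print(round(high_risk["alert"], 3), round(low_risk["alert"], 3))
```

In a sketch like this, an alert would be raised only when the estimated probability exceeds a chosen threshold; raising that threshold trades fewer false alerts against a higher chance of missing a true deterioration.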

ALGORITHMIC BIAS IN ARTIFICIAL INTELLIGENCE

Despite the promise of generative AI, machine learning algorithms accentuate bias. Algorithmic bias is a type of bias that is introduced by the algorithms themselves due to the way data is processed or the specific models used (Norori et al., 2021). These algorithms might amplify existing inequalities by perpetuating or even exacerbating biases present in the training data. Data gap bias arises when important data is missing altogether, which can skew the AI predictions and lead to misdiagnoses or inappropriate treatment plans. This kind of bias is particularly dangerous as it can lead to systematic neglect of certain groups within the healthcare system, such as women, the elderly, or those from lower socio-economic backgrounds, who are often underrepresented in clinical trials and other medical research that feeds AI development (Qureshi and Oladokun 2025).

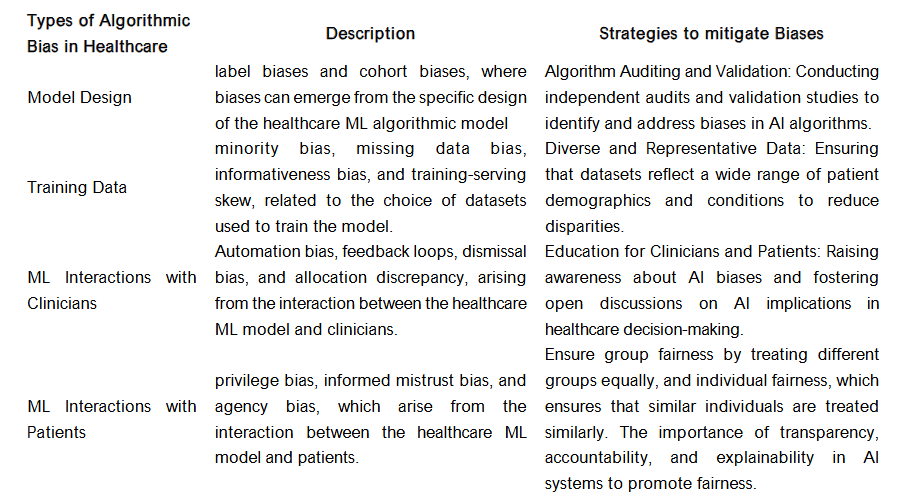

According to Giovanola and Tiribelli (2023), fairness in healthcare machine-learning algorithms (HMLA) has mostly been defined as the absence of biases, often equating fairness with non-discrimination. This interpretation focuses on the removal of biases in HMLA, which is considered essential for preventing algorithmic discrimination and ensuring fairness in healthcare ML systems. The use of ML algorithms in healthcare has led to controversial effects, such as inappropriate categorization of patients based on race, leading to inequalities in care. These flaws in HMLA translate into unequal access to health resources and support. Flaws are also difficult to detect: the proprietary nature of ML algorithms in healthcare and their complex architecture make it difficult to ensure transparency and to audit and correct flaws such as biases and technical inaccuracies. Biases arise at several points. Biases in healthcare ML model design include label biases and cohort biases, which emerge from the specific design of the algorithmic model. Biases in healthcare ML training data include minority bias, missing data bias, informativeness bias, and training-serving skew, all related to the choice of datasets used to train the model. Biases produced by ML interactions with clinicians include automation bias, feedback loops, dismissal bias, and allocation discrepancy. Biases produced by ML interactions with patients include privilege bias, informed mistrust bias, and agency bias (Giovanola and Tiribelli 2023, Alderman et al. 2025).

Strategies to address algorithmic biases include but are not limited to: 1) ensuring diverse and representative data through datasets that reflect a wide range of patient demographics and conditions to reduce disparities, 2) carrying out algorithm auditing and validation by conducting independent audits and validation studies to identify and address biases in AI algorithms, and 3) educating clinicians and patients by raising awareness about AI biases and fostering open discussions on AI implications in healthcare decision-making (Ueda et al 2024). This is where local and indigenous knowledge may support algorithmic fairness. Ferrara (2023) notes that group fairness is an important strategy for addressing bias in artificial intelligence. Group fairness is concerned with ensuring that AI systems are fair to different groups of people, such as people of different genders, races, or ethnicities. It aims to prevent AI systems from systematically discriminating against any group. This can be achieved through various techniques, such as re-sampling, pre-processing, or post-processing of the data used to train the AI model (Ferrara 2023, de Laat, 2018, Shin & Park 2019).
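
As one concrete instance of the pre-processing techniques mentioned above, the sketch below applies reweighing, a commonly used scheme in which each training record is weighted by P(group) × P(label) / P(group, label) so that group membership and outcome look statistically independent in the weighted data. The source does not prescribe this particular technique; it is chosen here as an illustration, and the groups ("urban", "rural"), labels and function names are hypothetical.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """Weight each record by P(group) * P(label) / P(group, label)."""
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] * label_counts[y]) / (n * joint_counts[(g, y)])
        for g, y in zip(groups, labels)
    ]


def weighted_positive_rate(groups, labels, weights, group):
    """Share of total weight within `group` that carries a positive label."""
    total = sum(w for g, w in zip(groups, weights) if g == group)
    positive = sum(
        w for g, y, w in zip(groups, labels, weights) if g == group and y == 1
    )
    return positive / total


# Hypothetical training set: rural records are fewer and rarely labelled positive.
groups = ["urban"] * 6 + ["rural"] * 4
labels = [1, 1, 1, 1, 0, 0, 1, 0, 0, 0]

weights = reweighing_weights(groups, labels)
urban_rate = weighted_positive_rate(groups, labels, weights, "urban")
rural_rate = weighted_positive_rate(groups, labels, weights, "rural")
print(round(urban_rate, 3), round(rural_rate, 3))
```

The weights can then be passed to any learner that accepts per-sample weights. Reweighing only balances the data that exists; it cannot replace records that were never collected, which is where local participation in dataset creation matters.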

Subtypes of group fairness include demographic parity, which ensures that positive and negative outcomes are distributed equally across different demographic groups. Disparate mistreatment is defined in terms of misclassification rates, ensuring that errors are distributed equally across groups. Equal opportunity ensures that the true positive rate (sensitivity) and false positive rate (1-specificity) are equal across different demographic groups (Ferrara 2023, de Laat, 2018, Shin & Park 2019).
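
The group fairness criteria above can be checked with a few per-group rates. The following minimal sketch computes the selection rate (for demographic parity), the overall error rate (for disparate mistreatment), and the true and false positive rates for each group. The data and function names are hypothetical. Note that in much of the machine learning literature, requiring both the true positive rate and the false positive rate to match is called equalized odds, while equal opportunity often refers to the true positive rate alone.

```python
def group_rates(y_true, y_pred, groups):
    """Per-group selection rate, error rate, true and false positive rates."""
    rates = {}
    for g in sorted(set(groups)):
        idx = [i for i, grp in enumerate(groups) if grp == g]
        tp = sum(1 for i in idx if y_true[i] == 1 and y_pred[i] == 1)
        fp = sum(1 for i in idx if y_true[i] == 0 and y_pred[i] == 1)
        pos = sum(1 for i in idx if y_true[i] == 1)
        neg = len(idx) - pos
        fn = pos - tp
        rates[g] = {
            "selection_rate": (tp + fp) / len(idx),   # demographic parity
            "error_rate": (fp + fn) / len(idx),       # misclassification rate
            "tpr": tp / pos if pos else float("nan"), # sensitivity
            "fpr": fp / neg if neg else float("nan"), # 1 - specificity
        }
    return rates


def max_gap(rates, key):
    """Largest difference in one rate between any two groups."""
    values = [r[key] for r in rates.values()]
    return max(values) - min(values)


# Hypothetical predictions for two groups of eight patients each.
groups = ["A"] * 8 + ["B"] * 8
y_true = [1, 1, 1, 1, 0, 0, 0, 0] * 2
y_pred = [1, 1, 1, 0, 1, 0, 0, 0] + [1, 1, 0, 0, 0, 0, 0, 0]

rates = group_rates(y_true, y_pred, groups)
for key in ("selection_rate", "error_rate", "tpr", "fpr"):
    print(key, max_gap(rates, key))
```

In this invented example the two groups have the same overall error rate but different true and false positive rates, which shows why the criteria have to be audited separately rather than assumed to move together.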

Artificial intelligence models tend to have difficulty in representing human behavior. Yet they carry out tasks that can be carried out by trained professionals. For example, radiologists who are trained to screen X-rays are being replaced by AI engines that can detect cancer more accurately than the human radiologists they replace. A predictive mathematical model is as seductive in its elegance as it is dangerous when powering an artificial intelligence application. Despite the exponential growth and precision of machine learning algorithms over the past thirty years, one thing remains the same: little is known about how the models arrive at their predictions. No matter how accurate the answers, the decision-making processes used by the machine learning algorithms remain elusive (Qureshi, 2023). AI solutions therefore need to provide accountability and transparency in their decision making. Lepri et al (2018) propose the use of open-source algorithms to ensure fairness and transparency.

POLICY FRAMEWORK: LAWS OF RESPONSIBLE ARTIFICIAL INTELLIGENCE

The rapid global advancement of Artificial Intelligence (AI) presents both unprecedented opportunities and significant risks, particularly for Low and Middle-Income Countries (LMICs). To ensure that AI serves as a catalyst for equitable and sustainable development in public health and healthcare, rather than exacerbating existing disparities or introducing new harms, a principled framework for its design, governance, and implementation is essential. This framework emphasizes local ownership, human-centricity, and a proactive approach to ethical considerations, moving beyond a model where AI is simply developed for LMICs to one where it is genuinely designed, governed, and implemented by them, with a specific focus on health outcomes.

HUMAN-CENTRICITY AND SOCIETAL WELL-BEING IN HEALTH

At the core of any responsible AI initiative in LMICs must be a commitment to human well-being and the advancement of societal good, particularly within the health sector. This principle dictates that AI systems should always augment the capabilities of healthcare professionals, support patient-centered decision-making, and be designed with meaningful human oversight. The “human in the loop”, whether a clinician, public health worker, or community member, is not merely a technical safeguard but a philosophical imperative, ensuring that AI remains a tool serving community health needs rather than an autonomous decision-maker. AI applications should directly contribute to health-related Sustainable Development Goals (SDGs). This includes respecting local cultures, traditional health practices, and privacy norms, and ensuring that AI solutions are perceived as beneficial and trustworthy by the communities they serve. For instance, the maternal health chatbot referenced earlier exemplifies this principle by providing accessible, gestational-specific information, offering reminders for vaccinations or vitamins, and acting as a first point of contact, thereby supporting pregnant individuals and triaging low-complexity queries to allow human healthcare providers to focus on more complex cases.

LOCAL OWNERSHIP AND CONTEXTUAL RELEVANCE

AI solutions for public health and healthcare in LMICs must be designed by LMICs, not merely for them. This principle underscores the critical importance of local leadership, health expertise, and data in every stage of the AI lifecycle. AI models should be trained predominantly on local data, reflecting the unique demographics, languages, cultural nuances, traditional health beliefs, and epidemiological patterns of the target populations. This ensures the relevance and accuracy of AI outputs, fostering local innovation that addresses specific, context-bound health challenges. For example, a chatbot for maternal health must communicate in multiple local languages, understand location-specific health services, and be sensitive to ethnic, gender, and age-related cultural norms regarding pregnancy, childbirth, and childcare. This approach contrasts sharply with models where AI tools developed in wealthy countries are simply parachuted into LMICs, often leading to poor clinical fit, limited adoption, and unintended consequences due to a lack of understanding of local health systems and cultural contexts.

SAFETY, ROBUSTNESS, AND RELIABILITY

Because many deployment environments are resource-constrained and healthcare applications are critical, AI systems must be inherently safe, robust, and reliable. This means designing AI that performs consistently and predictably, even with imperfect data inputs, in low-resource settings, or under challenging operational conditions (e.g., intermittent internet connectivity, limited computational power, unreliable electricity). Systems should be resilient to unexpected inputs and potential failures, with clear fallback mechanisms, including offline capabilities where necessary. For instance, an AI diagnostic tool used in a rural clinic for tuberculosis detection or malaria diagnosis must provide consistent and accurate results, even if the image quality (e.g., X-ray or portable ultrasound) is suboptimal or the internet connection is unstable, and should clearly indicate when it cannot make a reliable assessment, deferring to human clinical expertise. The “do no harm” principle is paramount, especially when AI is used for diagnosis, treatment recommendations, or public health interventions.
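The deferral behavior described above can be illustrated with a minimal sketch. It assumes a hypothetical classifier that returns class probabilities and a separate image-quality score between 0 and 1; the names `Assessment` and `assess` and the thresholds `min_confidence` and `min_quality` are illustrative assumptions, not part of any specific tool discussed in this paper. The logic runs locally, so it does not depend on an internet connection.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class Assessment:
    label: Optional[str]   # None when the tool defers to a clinician
    confidence: float      # highest class probability reported by the model
    deferred: bool         # True when a human must make the call
    reason: str            # short explanation shown to the health worker


def assess(probabilities: Dict[str, float],
           image_quality: float,
           min_confidence: float = 0.85,   # assumed threshold, set locally
           min_quality: float = 0.5) -> Assessment:  # assumed threshold
    """Return a label only when both input quality and confidence are adequate."""
    if not probabilities:
        return Assessment(None, 0.0, True, "no model output")
    # Pick the most probable class and its probability.
    label, confidence = max(probabilities.items(), key=lambda kv: kv[1])
    if image_quality < min_quality:
        return Assessment(None, confidence, True, "image quality too low")
    if confidence < min_confidence:
        return Assessment(None, confidence, True, "confidence below threshold")
    return Assessment(label, confidence, False, "ok")


if __name__ == "__main__":
    print(assess({"tb_suspected": 0.95, "normal": 0.05}, image_quality=0.9))
    print(assess({"tb_suspected": 0.95, "normal": 0.05}, image_quality=0.3))
```

In practice the thresholds would need to be calibrated and clinically validated on local data; the point of the sketch is only that "cannot assess reliably" is an explicit, first-class output rather than a silent failure.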

TRANSPARENCY AND EXPLAINABILITY FOR HEALTH STAKEHOLDERS

To build trust among patients, healthcare providers, and policymakers, and to enable effective clinical and public health oversight, AI systems should be as transparent and explainable as possible, moving away from “black box” approaches where decisions are inscrutable. This principle requires clarity on how AI tools are trained (e.g., what medical datasets were used), what data sources are leveraged for real-time analysis (e.g., syndromic surveillance data), and the logic behind their health-related decisions or predictions. For generative AI used in health information or decision support, specific policy questions arise: What types of clinical or public health decisions are they permitted to support autonomously? What are the inherent limitations and potential for hallucination of medical information? What questions should healthcare providers, patients, and public health officials be asking to ensure responsible use and validate outputs? The decision-making process of an AI tool must be interrogable and understood, especially when it impacts patient care, public health interventions, or individual health rights. This allows for continuous learning, clinical auditing, and correction, fostering a more accountable AI ecosystem.

EQUITY, NON-DISCRIMINATION, AND BIAS MITIGATION IN HEALTH DATA

AI systems in public health and healthcare must be designed to be equitable and non-discriminatory, actively working to reduce, rather than amplify, existing health disparities and societal biases. A critical concern for LMICs is the presence of “baked-in biases” in health training data, often reflecting historical discrimination, socio-economic disparities, or underrepresentation of certain populations in medical research. For example, medical AI models trained predominantly on genomic, imaging, or clinical data from specific racial, ethnic, or socioeconomic groups might exhibit reduced diagnostic accuracy or provide biased treatment recommendations when applied to other diverse LMIC populations. The historical use of “race-based modifiers” in clinical algorithms for renal function or pulmonary function, which can disadvantage certain racial groups in healthcare access or treatment, underscores the urgency of this principle. Policies must mandate:

- Diverse and Representative Health Data Collection: Prioritizing the collection of high-quality, diverse, and contextually relevant local health data (including demographic, epidemiological, and clinical data) that accurately represents all segments of the population, including marginalized and vulnerable groups.

- Algorithmic Auditing for Health Bias: Implementing rigorous, ongoing processes to identify and quantify biases in AI models and their health-related outputs, particularly concerning diagnosis, risk prediction, and treatment recommendations.

- Dynamic Bias Mitigation in Clinical AI: Developing and deploying AI models that can dynamically recognize and adapt to biases in health training data, potentially by incorporating mechanisms that challenge underlying assumptions or by allowing for human-in-the-loop adjustments based on local clinical context and patient characteristics.

- Community-Driven Health AI Validation: Engaging affected communities, patient advocacy groups, and local healthcare providers in the validation and refinement of AI health tools to ensure fairness, cultural appropriateness, and clinical utility.
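One simple form that the algorithmic auditing mandated above might take is a comparison of diagnostic sensitivity across population groups. The sketch below is an assumption-laden illustration, not a method prescribed by this paper: the function names, the record layout `(group, y_true, y_pred)`, and the choice of sensitivity as the audited metric are all hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

Record = Tuple[str, int, int]  # (group, true label, predicted label), labels are 0/1


def sensitivity_by_group(records: Iterable[Record]) -> Dict[str, float]:
    """Share of true positive cases the model detects, computed per group."""
    true_pos = defaultdict(int)
    false_neg = defaultdict(int)
    for group, y_true, y_pred in records:
        if y_true == 1:                 # only actual positives count for sensitivity
            if y_pred == 1:
                true_pos[group] += 1
            else:
                false_neg[group] += 1
    groups = set(true_pos) | set(false_neg)
    return {g: true_pos[g] / (true_pos[g] + false_neg[g]) for g in groups}


def sensitivity_gap(records: Iterable[Record]) -> float:
    """Difference between the best- and worst-served groups (0.0 if no positives)."""
    rates = sensitivity_by_group(list(records))
    return max(rates.values()) - min(rates.values()) if rates else 0.0


if __name__ == "__main__":
    # Toy data for illustration only; not drawn from any real study.
    sample = [("A", 1, 1), ("A", 1, 1), ("A", 1, 0), ("A", 0, 0),
              ("B", 1, 1), ("B", 1, 0), ("B", 1, 0), ("B", 1, 0)]
    print(sensitivity_by_group(sample))
    print(sensitivity_gap(sample))
```

A large gap would flag the model for review by local clinicians and community representatives. Which groups to compare, which metric matters, and what gap is acceptable are governance decisions for the affected communities, not constants in the code.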

ACCOUNTABILITY AND MULTI-SECTORAL HEALTH GOVERNANCE

Effective AI governance in public health and healthcare in LMICs requires a multi-sectoral approach involving government health ministries, academia (e.g., medical schools, public health institutions), civil society organizations (e.g., patient groups, community health advocates), and the private health sector (e.g., pharmaceuticals, medical device companies, health tech startups). This principle establishes clear lines of accountability for the design, development, deployment, and oversight of AI systems in health. Governments play a crucial role in developing national health AI strategies, regulatory frameworks for medical AI, and ethical guidelines that align with national health priorities and international human rights standards. Academia contributes through health research, medical education, and independent auditing of AI’s clinical impact. Civil society organizations ensure community representation, advocate for patient rights, and monitor AI’s societal impact on health equity. The private sector, both local and international, is accountable for responsible innovation, adherence to health regulations, and ethical data practices. This collaborative governance model ensures that AI development in health is guided by collective values and that responsibilities are clearly defined throughout the AI lifecycle, from research to clinical implementation.

The power to challenge health outcomes from AI systems – including those that are biased or unjust – must be baked into the governance framework for dynamic systems, empowering patients and communities to question and seek redress.

SUSTAINABLE DEVELOPMENT AND LOCAL PUBLIC-PRIVATE PARTNERSHIPS IN HEALTH

The development and deployment of AI in LMICs should be viewed as an integral part of sustainable national health development strategies. This necessitates fostering robust local public-private partnerships within the health sector. Governments can create an enabling environment through supportive health policies, regulatory sandboxes for health tech innovation, and strategic investments in digital health infrastructure. The private sector brings innovation, capital, and technical expertise. These partnerships are crucial for:

- Health Infrastructure Development: Collaborating on building and maintaining the necessary digital health infrastructure, including electronic health record systems, telemedicine platforms, and secure data networks.

- Human Capital Development in Health AI: Jointly investing in education, training, and skill development programs tailored to AI for health professionals (doctors, nurses, epidemiologists, public health workers), as well as data scientists and AI engineers with health domain knowledge.

- Expertise Transfer and Localization: Facilitating the transfer of health AI knowledge and technology in a way that builds local capacity and promotes self-sufficiency in health tech development, rather than dependency.

For example, a public-private partnership could develop AI tools for community-based health practices, leveraging social network analysis to understand health behaviors or optimize resource distribution. This aligns with the “common good” by directing AI toward collective well-being, such as helping children get vaccinated or optimizing resource allocation for farmers’ occupational safety based on health and agricultural needs. Furthermore, AI agents, like the maternal health chatbot, can extend the reach of public health services by acting as “digital social workers,” providing information on available health programs (e.g., nutrition support, child health programs), and guiding users to where they can access these services, while carefully defining the AI’s “scope of practice” to ensure complex medical or social cases are escalated to human professionals.

CONCLUSION

Designing, governing, and implementing AI in LMICs for public health and healthcare demands a nuanced and context-sensitive approach. By adhering to principles of human-centricity, local ownership, safety, transparency, equity, accountability, and sustainable partnerships, LMICs can harness AI’s transformative power to address their unique health challenges, accelerate health development, and build more resilient, equitable, and prosperous societies. This requires continuous dialogue, adaptive policy-making, and a shared global commitment to ensuring AI benefits all of humanity.

Conflict of Interest Statement:

None.

Funding Statement:

None.

Acknowledgements:

None.

REFERENCES

- Andrade BB, Reis-Filho A, Barros AM, et al. Towards a precise test for malaria diagnosis in the Brazilian Amazon: comparison among field microscopy, a rapid diagnostic test, nested PCR, and a computational expert system based on artificial neural networks. Malar J 2010; 9: 117.

- Alderman, J. E. et al. Tackling algorithmic bias and promoting transparency in health datasets: the STANDING Together consensus recommendations. Lancet Digit. Health 7, 2025. e64 e88.

- Beaglehole, R., & Bonita, R. What is global health?. Global health action, 3, 2010. 10-3402.

- Browne J, Coffey B, Cook K, Meiklejohn S, Palermo C. A guide to policy analysis as a research method. Health Promot Int. 2019 Oct 1;34(5):1032-1044. doi: 10.1093/heapro/day052. PMID: 30101276.

- de Laat, P.B. Algorithmic Decision-Making Based on Machine Learning from Big Data: Can Transparency Restore Accountability?. Philos. Technol. 31, 2018. 525 541. https://doi.org/10.1007/s13347-017-0293-z

- Shin,D. & Y. J. Park, Role of fairness, accountability, and transparency in algorithmic affordance, Computers in Human Behavior,Volume 98, 2019, Pages 277-284, ISSN 0747-5632, Doi: .

- Elveren E, Yumuşak N. Tuberculosis disease diagnosis using artificial neural network trained with genetic algorithm. J Med Syst 2011; 35: 329 32. 32 Osamor VC, Azeta AA, Ajulo OO.

- Fletcher RR, Nakeshimana A and Olubeko O. Addressing Fairness, Bias, and Appropriate Use of Artificial Intelligence and Machine Learning in Global Health. Front. Artif. Intell. 2021. 3:561802. doi: 10.3389/frai.2020.561802

- Giovanola, B., & Tiribelli, S. Beyond bias and discrimination: redefining the AI ethics principle of fairness in healthcare machine-learning algorithms. AI & society, 38(2), 2023. 549-563.

- Hosny, A., & Aerts, H. J. Artificial intelligence for global health. Science, 366(6468), 2019. 955-956.

- Howitt, P.,Darzi,A., Yang,G. Z.,Ashrafian, H.,Atun, R., Barlow, J., … & Wilson, E. Technologies for global health. The Lancet, 380(9840), 2012. 507-535.

- Jacobs, J. Systems of survival: A dialogue on the moral foundations of commerce and politics. Vintage Books. 1994. 236 pp.

- Kuok CP, Horng MH, Liao YM, Chow NH, Sun YN. An effective and accurate identification system of Mycobacterium tuberculosis using convolution neural networks. Microsc Res Tech 2019; 82: 709 19. 31

- Lakoff, A. Two regimes of global health. Humanity: An International Journal of Human Rights, Humanitarianism, and Development, 1(1), 2010. 59-79.

- Lepri, B., Oliver, N., Letouzé, E. et al. Fair, Transparent, and Accountable Algorithmic Decision-making Processes. Philos. Technol. 31, 2018. 611 627. https://doi.org/10.1007/s13347-017-0279-x

- Mannuru, N. R., Shahriar, S., Teel, Z. A., Wang, T., Lund, B. D., Tijani, S., … & Vaidya, P. Artificial intelligence in developing countries: The impact of generative artificial intelligence (AI) technologies for development. Information Development, 2023. 02666669231200628.

- Mazzucato, M. The value of everything: Making and taking in the global economy. Hachette UK. 2021. 203pp

- Mendenhall, E., Kohrt, B. A., Norris, S. A., Ndetei, D., & Prabhakaran, D. Non-communicable disease syndemics: poverty, depression, and diabetes among low-income populations. The Lancet, 389(10072), 2017. 951-963.

- Norori, N., Hu, Q., Aellen, F. M., Faraci, F. D., & Tzovara, A. Addressing bias in big data and AI for health care: A call for open science. Patterns (New York, N.Y.), 2(10), 2021. 100347. https://doi.org/10.1016/j.patter.2021.100347

- Qureshi, S. Creating cycles of prosperity with human digital development for intelligent global health. Information Technology for Development, 28(4), 2022. 649- 659.

- Qureshi, S. Cycles of development in systems of survival with artificial intelligence: a formative research agenda. Information Technology for Development, 29(2-3), 2023. 171-183.

- Rogers, C. C., Jang, S. S., Tidwell, W., Shaughnessy, S., Milburn, J., Hauck, F. R., & Valdez, R. S. Designing mobile health to align with the social determinants of health. Frontiers in Digital Health, 2023. 5, 1193920.

- Sangers, T., Reeder, S., van der Vet, S., Jhingoer, S., Mooyaart, A., Siegel, D. M., & Wakkee, M. Validation of a market-approved artificial intelligence mobile health app for skin cancer screening: a prospective multicenter diagnostic accuracy study. Dermatology, 238(4), 2022. 649-656.

- Schwalbe, N., & Wahl, B. Artificial intelligence (AI) and global health: how can AI contribute to health in resource-poor settings?. BMJ global health, 3(4), 2018. e000798.

- Xu, Z., Biswas, B., Li, L., & Amzal, B. AI/ML in Precision Medicine: A Look Beyond the Hype. Therapeutic Innovation & Regulatory Science, 2023. 1-6.