Practice Effects on Cognitive Abilities: Key Insights

Effects of practice on individual difference in tasks employed to assess cognitive abilities

Daniel Gopher1, Daniel Ben-Eliezer2, Paul D. Feigin3

- Professor Emeritus of cognitive psychology and human factors engineering; Faculty of Data and Decision Sciences; Technion-Israel Institute of Technology. Haifa

- Post-doctoral student in cognitive psychology; Faculty of Data and Decision Sciences; Technion Faculty of Industrial Engineering 2018 (cognitive psychology, human factors)

- Emeritus Professor of Statistics; Faculty of Data and Decision Sciences; Australian National University: PhD 1975

OPEN ACCESS

PUBLISHED: 31 January 2025

CITATION Gopher, D., et al., 2025. Effects of practice on individual difference in tasks employed to assess cognitive abilities. Medical Research Archives, [online] 14(1).

COPYRIGHT © 2025 European Society of Medicine. This is an open-access article distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original author and source are credited.

DOI: https://doi.org/10.18103/mra.v14i1.7058

ISSN 2375-1924

ABSTRACT

The study examines the influence of the format and features of tasks employed to assess cognitive abilities. Three experiments investigated the effect of practice on performance differences between performers of these tasks. Two experiments were conducted on a demanding computerized task developed to assess attention management and multitask performance. The third experiment examined practice effects in six replications of a five-task battery consisting of tasks commonly used in the evaluation of cognitive functions. Significant individual differences were observed in all experiments, within and across tasks. However, practice differentially affected tasks and components within tasks. Three types of practice effects on performance were identified: (1) Performance levels did not change and did not benefit from practice in components that mainly draw upon the operation of bottom-up, exogenous attention systems. (2) Practice had strong asymmetric effects when performance required resolving a conflict between two automatically attended elements. In these tasks, performers with lower initial performance scores benefited more from practice than those with higher initial performance levels. (3) For tasks in which executive control and working memory were called upon but there was no conflict to resolve, significant practice effects were obtained, with equal gains for performers differing in initial performance levels. The important implication of the obtained results is that when a task is developed as a test to evaluate a cognitive ability, its format and features may affect first-session performance, and their influence may only be clarified with practice. The contributions of format and components are discussed with reference to research on the evaluation of individual differences in cognitive abilities, types of attention demand and working memory requirements.

Keywords: Practice effects, cognitive tasks, Individual differences, attention systems

INTRODUCTION

Individual differences in cognitive abilities are evaluated across a wide range of applications and problem areas. Examples are: admission to education and training programs (Oren et al., 2014; Kleper & Seka, 2017); job selection (Gopher, 1982; Charles & Florah, 2021); developmental progress assessments (Friedman et al., 2016; Karbach et al., 2017); aging (Salthouse & Ferrer-Caja, 2003; Eich et al., 2016); brain dysfunctions (Bialystok et al., 2008; Tanguay et al., 2014); and rehabilitation (Folstein et al., 1975; Lucas et al., 1998). The increasing interest and focus have been accompanied by the introduction of a growing number of commercial cognitive test batteries, which are employed to assess individual differences in cognitive abilities and functions (e.g., MoCA, CANS-MCI, MMSE, DRS-Mattis, USC-ADRC). What these batteries have in common is the conduct of one or two test sessions, within which participants perform a set of specified cognitive tasks once or twice. The scores of each participant are compared to a group, designated populations, norms, or criteria. Cutoff points are set and decisions are drawn based upon these scores. However, it is well recognized that, although statistically significant, the correlations between test and criteria usually fall in the .3 to .7 range, thereby reducing the effectiveness of the test as a sole measure of the targeted cognitive ability (Salthouse, 2005; Rose et al., 2015; Kabat et al., 2001). One possible reason for the lower correlation is that there may be other contributing factors in addition to the ability measured by the test. A second possible contributing factor stems from the fact that in addition to its prime intended objective, each test is a task enveloped and influenced by its specific format, sensory features, perceptual and response modes, as well as instructions and performance procedures. Researchers of individual differences in cognitive abilities have been aware of this fact.
In the theoretical and conceptual frameworks which have been developed to specify types and dimensions of cognitive ability, single tasks have been grouped and associated through their loading on underlying factors, dimensions or cognitive structure. Each of the tasks has been shown to be mainly loaded on one underlying cognitive dimension but may also correlate with other dimensions (Miyake et al., 2000; Salthouse, 2005; Ackerman & Cianciolo, 2000). One common approach to group tasks is factor analysis. A good example is the multiple studies conducted by Salthouse and his colleagues (Salthouse, 2004, 2005). They proposed an analytical model with five cognitive functions: vocabulary, reasoning, space, memory and speed. Each was mapped to a group of 3-4 tests, from sixteen individual cognitive tasks (e.g., Reasoning, Ravens, Shipley Abstractions, Letter Set). Correlational and factor analysis computed the loading of each test on its mapped cognitive dimension. Dimension levels were then compared along age and gender. A later study (Salthouse, 2005) applied the same format and added tasks of executive control (task switching, inhibition and working memory). A related second approach is latent variable analysis, developed and investigated in multiple studies by Miyake, Friedman and their collaborators (Miyake et al., 2000; Miyake, & Friedman, 2012; Friedman, & Miyake, 2017). The focus of this group has been on executive control processes. Accordingly, they proposed three control functions: updating, shifting and inhibition. Each mapped into a group of tasks given to subjects (e.g., shifting between colors, numbers, category). They employ confirmatory factor analysis (CFA) and structural equation modeling (SEM) to compute the correlation between tasks and their respective latent dimension, as well as the interrelations between the three control functions, representing the unity of executive control. 
The present study examines whether lack of practice could contribute to the lower correlation of a single task performance measure with its targeted cognitive ability. As indicated, in cognitive test batteries each test is commonly presented once or twice, but there is no practice or training. No or limited practice is also common in experimental studies developed to distinguish between groups on a cognitive ability measure. However, when performers are first presented with the targeted task, task performance may reflect not only the targeted ability (e.g., working memory) but also their experience with one or more aspects of the task format and features, which may be relevant or irrelevant to the measurement of the targeted ability. How will the differences between performers on task performance change with practice? The role of practice is to provide a common experience on the task, reducing the effects of initial variability due to previous experience with its features and format. If performers' scores improve above a set criterion level, or performers change their relative position up or down in the tested group, what will be a more valid estimate of the cognitive function targeted by the task? Overall, practice may have four possible effects on performance: (1) If there is no practice effect, then task performance before and after practice equally reflects individual differences in the task. (2) Practice improves the performance of all individuals and there is equal gain for first-session high and low performers. In this case, the absolute performance levels following practice are important. For example, in the evaluation of cognitive impairment level, developmental progress and meeting selection criteria, both final performance levels and rate of improvement are informative. Effect types 3 and 4 involve a possible interaction between the evaluation of individual differences in the first and last administrations of the test.
(3) If lower performers in the first session gain more from practice than higher initial performers, they improve their performance and position in the tested group relative to their rank in the first session. Thus, when assessing individual differences, practice may change both performance levels and relative rankings of low compared to high initial performers. (4) The opposite case is where practice causes higher initial performers to gain more than low initial performers. In this case, practice does not change the relative position of individual subjects but increases the spread of the initial performance differences between high and low performers observed during the first administration of the task. Taken together, in all four cases practice may inform us of the nature of the task constructed and its employment as an estimate of its targeted cognitive ability. Differential influence of practice on task performance has been reported in training research which investigated the influence of training manipulations on different ability groups (Frederiksen & White, 1989; Gopher, Weil & Siegel 1989). A more recent line of experiments studied the relationship between task switching training and individual differences by comparing before and after practice cognitive ability tests, reporting asymmetric effect of training on individual differences in cognitive tasks (Karbach et al., 2017; Zinke et al., 2012; Zinke et al., 2014; Karbach & Kray, 2009; Bherer et al., 2008; Cepeda et al., 2001). They showed that individuals with lower cognitive abilities at pretest showed larger training and transfer benefits after the training. They labelled it compensation effect, in which baseline cognitive abilities were negatively correlated with the training-induced gain (i.e., low-performing individuals benefitted more). Similar compensation effects were reported for working memory, executive control and a variety of cognitive tasks. 
In all cases training gains were compared with a “no training” control group (Zinke et al., 2012; Zinke et al., 2014; Karbach et al., 2009; Beherer et al., 2008). Karbach et al. (2017) tested three age groups (children, young and older adults). Zinke et al (2012) tested older adults (age 77-96), and in Zinke et al (2014) the sample ages were between 65 to 95 years. Despite significant differences in average performance between age groups, the compensation effect was found in all of them, thus eliminating ceiling or regression to the mean explanations of the training effect (as also described in: Konen & Karbach, 2021). Opposing the compensation influence of training, there is also a line of studies reporting positive magnification effects. That is, subjects with high initial ability, benefit more from training than low ability subjects, leading to positive rather than negative correlation between intercept and training slope (Baltes & Kliegl, 1992; Brehmer et al., 2007; Foster et al., 2017; Lindenberger et al., 1992; Lövdén et al., 2012; Verhaeghen & Marcoen, 1996). These studies investigated the training of high and low working memory subjects when teaching them strategies and techniques for improving working memory span (e.g., mnemonics). Positive correlations imply that in terms of cognitive testing, the scores and individual differences obtained at the first presentation of test, are a good representation of the differences between subjects on the targeted cognitive ability (e.g., working memory span, processing speed). In this case, training would clarify and increase the range and the distinction between individuals but would not change their relative position. However, if test performance is used with reference to a cutoff point, diagnosis or graduation criterion, training may change the status of individuals. In this regard, the question again is what the added value of the post-training is compared with the pre-training score. 
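The compensation and magnification patterns described above both come down to the sign of the correlation between each performer's initial level (intercept) and rate of improvement (slope). The following is a minimal sketch of that idea, not the analysis used in the cited studies: it fits an ordinary least-squares line per subject rather than a mixed model, the scores are invented for illustration, and higher scores are assumed to mean better performance. The names `fit_line`, `pearson` and `scores_by_subject` are hypothetical.

```python
from math import sqrt

def fit_line(scores):
    """Ordinary least-squares intercept and slope of scores over sessions 0..n-1."""
    n = len(scores)
    xs = list(range(n))
    x_bar = sum(xs) / n
    y_bar = sum(scores) / n
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, scores))
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope

def pearson(a, b):
    """Pearson correlation between two equally long lists of numbers."""
    n = len(a)
    a_bar, b_bar = sum(a) / n, sum(b) / n
    cov = sum((x - a_bar) * (y - b_bar) for x, y in zip(a, b))
    var_a = sum((x - a_bar) ** 2 for x in a)
    var_b = sum((y - b_bar) ** 2 for y in b)
    return cov / sqrt(var_a * var_b)

# Hypothetical scores over five sessions (higher = better); NOT data from any study.
scores_by_subject = {
    "s1": [10, 14, 18, 22, 26],  # low start, large gain
    "s2": [20, 23, 26, 29, 32],
    "s3": [30, 32, 34, 36, 38],
    "s4": [40, 41, 42, 43, 44],  # high start, small gain
}

fits = [fit_line(s) for s in scores_by_subject.values()]
intercepts = [f[0] for f in fits]
slopes = [f[1] for f in fits]
r = pearson(intercepts, slopes)

# Negative r: low starters gain more (compensation); positive r: magnification.
pattern = "compensation" if r < 0 else "magnification"
print(f"intercept-slope correlation = {r:.2f} ({pattern})")
```

With noisy real data a negative correlation can also arise from regression to the mean, which is why the studies cited above relied on control groups and comparisons across age groups.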
It is important to emphasize at this point the difference between training and practice. Training studies investigate the influence of a training manipulation or intervention on performers differing in their initial task performance levels. In practice alone there is no manipulation: performers accumulate repeated, spontaneous experience with a task's format and features as they are. The slope of performance over practice and the first and last performance levels are compared. Training refers to the influence of a teaching or instruction method, which is most often compared with control groups and assessed through transfer to external tasks. Hence, compensatory and magnification training studies are supportive of, but different from, the investigation of the effects of practice on performance levels in the targeted tests. We report the results of three experiments, which investigated the influence of practice on performance and on differences between performers. Two experiments were conducted on two versions of the computerized Breakfast Task, developed by Craik and Bialystok (2006) to test attention management and multitask performance in a simulated daily mission. Subjects in both experiments were given five sessions of practice. The third experiment examined six replications of a cognitive test battery comprising five commonly used cognitive tests.

TASKS AND METHODS

BREAKFAST TASK (ALTERNATING SCREENS)

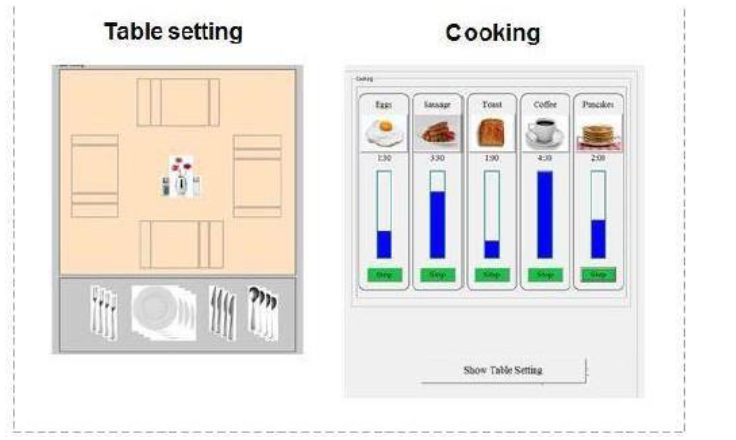

The Breakfast Task is a computer-based simulation in which the performer is required to cook five food items for breakfast while concurrently setting as many tables as possible for four guests (Figure 1). In its original form, it was developed by Craik and Bialystok (2006) as an indicator of coping with multitasking, high executive control and attention management demands. It also aspired to simulate a daily activity. They employed the task to compare the planning and attention management capabilities of young and older adults, and contrasted monolingual and bilingual performers in each age group. Older bilinguals showed an advantage over older monolinguals. In later studies, the task was applied to compare young adults, older adults and Parkinson’s disease patients (Bialystok et al., 2008) and to evaluate the impact of brain injury (Tanguay et al., 2014). In all their experiments they noted the performance difference between cooking and table setting, but their main interest was in the comparisons between specified groups. The task was conceived as an integrated index of multitasking ability, and only limited or no practice was given. All studies compared group averages, with considerable variability around each average, indicating individual differences. Task difficulty was increased, but no practice was administered.

Participants:

Thirty participants were recruited to a five-session practice program. All participants were students of the Technion, aged between 19 and 29 (mean age: 22.8); 60% were female. Participants had normal or corrected vision. After finishing the task, participants received 250 NIS (about 70 USD). Three participants who received the highest combined standardized scores in a session received 50 NIS bonuses for the five sessions. Participants were recruited by responding to a post addressed to Technion students, which included an online questionnaire. Candidates who reported playing video or computer games for three hours or more daily, and those with very limited computer use, were excluded. All participants gave written informed consent in accordance with the Declaration of Helsinki guidelines, as approved by the Technion ethics committee.

Design:

Participants were required to cook food for breakfast while concurrently setting tables for four guests (Figure 1). In the cooking segment, participants were asked to cook five food items; the cooking time in minutes for each item was displayed (coffee 4.5, sausage 3.5, pancakes 2, egg 1.5, and toast 1). Each cooking round started with the longest-duration item (coffee), and additional food items were added from among the other four. Ideally, food items should be accurately cooked and all items completed together. To achieve this, items should be started in order from the longest to the shortest cooking time, and the starting time of each should be calibrated relative to the starting time of the previous food item. The bar graphs in Figure 1 indicate the optimal starting time for each food item to be accurately cooked and served together.
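The optimal schedule described above follows directly from the listed cooking times: if every item is to finish together with the coffee, each item should start later than the coffee by the difference between the two cooking times. The sketch below illustrates this arithmetic; it assumes the round starts at time zero with the coffee, and the function name `optimal_start_times` is hypothetical rather than part of the task software.

```python
# Cooking times in minutes, as listed in the task description.
COOKING_MIN = {
    "coffee": 4.5,
    "sausage": 3.5,
    "pancakes": 2.0,
    "egg": 1.5,
    "toast": 1.0,
}

def optimal_start_times(cooking_min):
    """Start offsets (minutes from round start) so that all items finish together.

    Assumes the longest item starts at time 0 and everything ends when it ends.
    """
    longest = max(cooking_min.values())
    return {item: longest - minutes for item, minutes in cooking_min.items()}

starts = optimal_start_times(COOKING_MIN)
for item, start in sorted(starts.items(), key=lambda kv: kv[1]):
    print(f"{item:9s} start at {start:.1f} min")
```

Under these assumptions the sausage starts 1.0 min after the coffee, the pancakes at 2.5 min, the egg at 3.0 min and the toast at 3.5 min.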

Three performance aspects were measured. Cooking Time Discrepancy (DIS): the average of the absolute differences between the required and actual cooking times of each item. Range of stop times (RST): the difference in seconds between the first and last food item to complete cooking. Table setting (TS): participants had to set tables for four guests, which included placing the plate, fork, knife and spoon in their locations. Table setting could follow two placement rules: by guest, in which the complete set should be placed for one guest before proceeding to the next guest; or by utensil, in which each type of utensil should be placed for all four guests. The program did not allow putting a utensil in the wrong place. When a table setting was completed for four guests, a new table was presented. Table setting scores counted only tables fully set according to the placement instructions. Feedback on DIS, RST and TS was displayed at the end of each cooking round.
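The two cooking measures defined above can be written out as a short computation. This is an illustrative sketch only: the function names and the sample times are hypothetical, and DIS is assumed here to be expressed in seconds, which the text does not state explicitly.

```python
def cooking_discrepancy(required_s, actual_s):
    """DIS: mean absolute difference between required and actual cooking times."""
    diffs = [abs(actual_s[item] - required_s[item]) for item in required_s]
    return sum(diffs) / len(diffs)

def range_of_stop_times(stop_s):
    """RST: seconds between the first and the last item to complete cooking."""
    return max(stop_s.values()) - min(stop_s.values())

# Required cooking times in seconds (4.5, 3.5, 2, 1.5 and 1 minutes).
required = {"coffee": 270, "sausage": 210, "pancakes": 120, "egg": 90, "toast": 60}

# Hypothetical round: actual cooking durations and clock times at which items stopped.
actual = {"coffee": 272, "sausage": 209, "pancakes": 123, "egg": 90, "toast": 64}
stops = {"coffee": 272, "sausage": 270, "pancakes": 268, "egg": 271, "toast": 275}

dis = cooking_discrepancy(required, actual)   # (2 + 1 + 3 + 0 + 4) / 5
rst = range_of_stop_times(stops)              # 275 - 268
print(f"DIS = {dis:.1f} s, RST = {rst} s")
```

Lower values are better on both measures, whereas a higher TS count is better, which matters when comparing practice slopes across the three scores.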

Procedure:

After signing an informed consent form, each participant received a personal username allowing them to log in to the Breakfast Task server online and perform the task from home. At the first login, they filled in a demographic inventory, read the instructions, and performed two demonstration trials. They were then instructed to finish their five practice sessions within 14 days and were prevented from performing two sessions in one day. The research assistant was in continuous contact with participants by phone, monitoring their progress and providing technical assistance.

Practice included five sessions, each with eight cooking rounds lasting 4.5 minutes each (40 rounds in total), all with identical structure: cooking five food items presented on the same screen and setting tables for four guests, with an alternating setting rule (three tables by guest followed by three tables by utensil, and so on). The table-setting and cooking display screens alternated (Craik & Bialystok, 2006, condition 2): participants had to click a button to switch between the table and cooking screens. Participants were instructed to give cooking and table setting equal priority, and task performance was evaluated by the combined performance on both.

BREAKFAST TASK (SIX SCREENS)

Task and design:

Five sessions of the Breakfast Task were administered in the same way as in the first experiment, with one major difference: participants alternated between the table display and the displays of individual food items, each opened by clicking on the item's image in the cooking display (i.e., only one of six options was displayed on the screen at a time: table setting or one of the five food items). Hence, more switching and considerably more attention management were required. Will these changes affect the distinction between measures and the influence of practice on the differences between performers? These are the main questions of the second experiment.

Participants:

Thirty-four participants were recruited. The recruitment process and screening were identical to those described in Experiment 1. All participants were students of the Technion, with native-level Hebrew. They were aged between 21 and 33 (mean age: 26.0); 42% were female. Two participants were excluded from the analysis because they did not complete the full training program within the required duration and schedule. Thus, 32 participants were included in the analysis. After finishing the task, participants received 250 NIS (about 70 USD). Three participants, who received the highest combined standardized scores in a session, received 50 NIS bonuses for the first five sessions.

A BATTERY OF FIVE COGNITIVE TASKS

Tasks and design:

This experiment was designed to investigate the effect of practice on individual differences in cognitive tests using the administration format of cognitive test batteries. A battery was constructed with five commonly used cognitive tasks. It was administered and replicated in six sessions, representing practice.

The selected tasks are generally less complex than the Breakfast Task, but the format and structure of each combine several elements. How would differences between performers on these tasks be affected across the six replications? In addition, to examine the relationship between the cognitive tasks and Breakfast Task performance, two sessions of the six-screen version of the task were administered, one before and one after the six replication sessions of the cognitive task battery.

The first selected task for this experiment was the ANT developed by Posner (Posner and Rothbart, 2007). Although it is not a common member of cognitive test batteries, it is important because despite being a simple and discrete task, it was specifically constructed to separate the effects of exogenous and endogenous attention systems on single reaction times. This separation of effects is like the observed distinction in the performance of the Breakfast Task between the local monitoring food accuracy factor (exogenous attention) and the executive control factor of table setting and synchronous serving (endogenous attention). Will the different ANT measures preserve their distinctiveness following practice?

Two additional tasks are working memory tasks: the N-back, representing verbal working memory (Kane, Conway, Miura, & Colflesh, 2007; Jaeggi, Buschkuehl, Perrig, & Meier, 2010), and the Corsi Block Tapping task, representing visuospatial working memory (Kessels, van Zandvoort, Postma, Kappelle, & de Haan, 2000; Arbuthnott & Frank, 2000). Both are widely used in cognitive test batteries and experimental research. In neuropsychology, Corsi has been linked to right-hemisphere specialization and the N-back to the left hemisphere (Kessels et al., 2000). The two types were also separated in Baddeley’s (2012) model of working memory (WM). Nonetheless, accumulated research shows that not only working memory load but also additional variables influence performance in the two tasks. For the N-back, those variables include familiarity with the erroneous stimulus in the sequence (Kane et al., 2007) and the included processes of decision, selection, inhibition, and interference resolution (Jaeggi et al., 2010). Corsi is distinguished not only by being a measure of visuospatial rather than verbal memory but also by its heavy reliance on memory for temporal information (Kessels et al., 2000). For both tasks, these variables reflect the features and format in which the tasks were structured and administered to evaluate the targeted WM ability.

Trails is the fourth selected task, including the A and B versions of the task. Trails A screens contain only digits or only letters. Participants are requested to draw a line connecting the stimuli, which are scattered on the screen, in order from the first to the last value. Trails B screens display both digits and letters, and participants are requested to draw a connecting line alternating between digits and letters in order. Both Trails versions were shown to equally reflect perceptual speed and fluid intelligence (Salthouse, 2005). Trails B performance was also shown to include the additional costs of executive control and task switching (Salthouse, 2011; Sanchez-Cubillo et al., 2009; Arbuthnott & Frank, 2000). Thus, while performance of both the A and B task versions is associated with the same basic processing demands, the new elements and format of the B version introduce new demands that influence performance.

The fifth task in the cognitive battery is the Digit Symbol Substitution Task (DSST). It has been adopted from the Wechsler intelligence battery. It is a paired-association task in which performers are required to match digits with associated geometric symbols. The task was shown to be sensitive to impairments and improvements in processing speed (Hoyer et al., 2004). DSST performance was shown to correlate with real-world functional outcomes (e.g., the ability to accomplish everyday tasks) and recovery from functional disability. In addition, the DSST has been demonstrated to be sensitive to change in cognitive functioning in patients with major depressive disorder (MDD) and may offer an effective means to detect clinically relevant treatment effects (Jaeger, 2018). A detailed description of the five tasks is presented in Supplementary material A.

Supplementary material A: Cognitive Battery tasks

The cognitive battery included five tasks, replicated six times (sessions) in the same format and order. All five tasks were accessed remotely by the participants on their home PCs, using tasks downloaded from the Millisecond Inquisit® online library of psychological tasks (Inquisit 5, 2016). The five tasks were translated into Hebrew (stimuli and instructions) and presented in a fixed order, as in the short descriptions below.

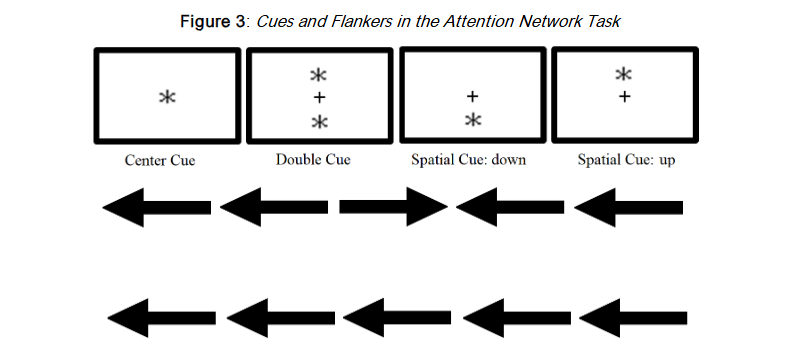

Attention Network Task (ANT): The ANT was specifically designed to distinguish and study the costs of, and interrelations between, exogenous (alert, orient) and endogenous (conflict resolution) attention systems (Posner & Rothbart, 2007)³⁷. It was selected because of its distinction between exogenous and endogenous categories of attention demands, which are closely associated with the local monitoring and executive control demand types of the Breakfast Task. It has been widely studied and modeled to support the distinction between exogenous and endogenous systems (Posner & Rothbart, 2007³⁷; Petersen and Posner, 2012⁵³). It is a computerized task that displays a central arrow flanked by arrows whose direction is congruent or incongruent with that of the central arrow. The participant needs to indicate whether the central target arrow is pointing rightward or leftward (by typing “I” or “E”, respectively). Participants were told that the task measured attention and were instructed to perform as fast and as accurately as they could. They were informed about the roles of cues and flankers and were encouraged to use them. The task aimed to capture three attention dimensions following Posner’s attention model (MacLeod et al., 2010)⁵⁴. The calculation of these three is based on differences between experimental conditions varying in the congruence of flanker arrows and in spatial cues. The alert effect is calculated as the mean response time (RT) of no-cue conditions minus the mean RT of double-cue conditions; the orienting effect is calculated as the mean RT of central-cue trials minus the mean RT of spatial (up and down) cue conditions; and the conflict effect is calculated as the mean RT of all incongruent flanker conditions minus the mean RT of all congruent flanker conditions. The cues and flankers are shown in Figure 6. In addition to these, a no-cue condition and a no-flanker condition (only a single arrow) were also included (Fan et al., 2002)⁵⁵.
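The three subtraction scores described above can be illustrated with a short computation. This is a minimal sketch under stated assumptions: the trial records, their field names (`cue`, `flanker`, `rt`) and the RT values are hypothetical, and the sketch does not filter trials by accuracy because the text does not say whether error trials were excluded.

```python
from statistics import mean

def ant_network_scores(trials):
    """Alert, orienting and conflict effects as differences of mean RTs (ms)."""
    def mean_rt(field, level):
        return mean(t["rt"] for t in trials if t[field] == level)

    return {
        "alert": mean_rt("cue", "none") - mean_rt("cue", "double"),
        "orienting": mean_rt("cue", "center") - mean_rt("cue", "spatial"),
        "conflict": mean_rt("flanker", "incongruent") - mean_rt("flanker", "congruent"),
    }

# Hypothetical trials for illustration only; NOT data from the study.
trials = [
    {"cue": "none",    "flanker": "congruent",   "rt": 600},
    {"cue": "none",    "flanker": "incongruent", "rt": 700},
    {"cue": "double",  "flanker": "congruent",   "rt": 550},
    {"cue": "double",  "flanker": "incongruent", "rt": 650},
    {"cue": "center",  "flanker": "congruent",   "rt": 560},
    {"cue": "center",  "flanker": "incongruent", "rt": 660},
    {"cue": "spatial", "flanker": "congruent",   "rt": 510},
    {"cue": "spatial", "flanker": "incongruent", "rt": 610},
]

scores = ant_network_scores(trials)
print(scores)
```

Because each score is a difference between two condition means, a general speed-up with practice cancels out unless it affects the two conditions unequally.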

Although simpler and less demanding than the Breakfast Task, performance on all tasks of the cognitive battery was shown to be influenced by variables that reflect the format in which the tasks were structured and administered, to represent the targeted cognitive ability. Will such an influence also be observed in the effects of practice? The results obtained in the two experiments with the Breakfast Task demonstrated that the dominance and differential effect of practice depend on the nature and basic demand of the specific subcomponent of the task, over and beyond manipulations of global task demands. Using these findings as an anchor point, we hypothesize that specific components within tasks may vary in demand and consequently incur a differential effect of practice on task performance and influence the differences between performers.

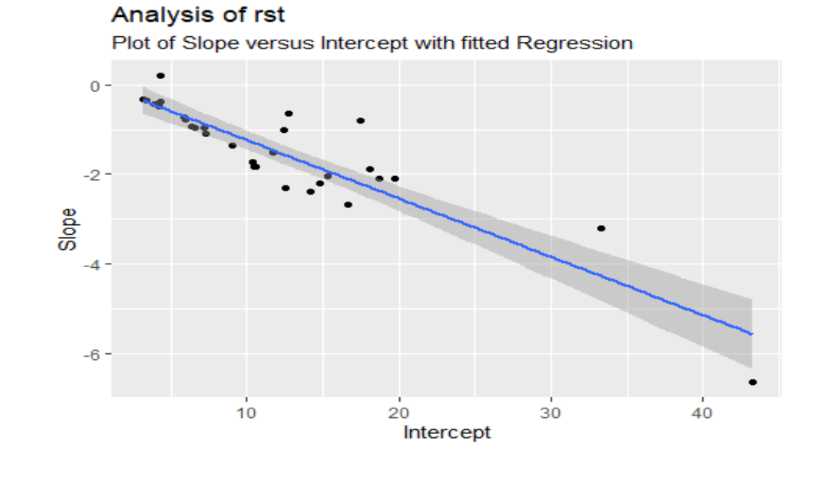

Scatterplot of Intercept (x-axis) and Slope (y-axis) for RST, with Computed Regression with Negative Slope, Using Mixed Model Estimates for the 30 Subjects.

Each stimulus presentation consisted of a 2,000 ms inter-stimulus interval (ISI), a 100 ms cue, a 400 ms interval between cue and target, and a 1,700 ms target (or less if answered within that time). Following a practice trial of 24 blocks with feedback, the task consisted of 24 blocks of 96 trials each with no feedback. Each block was composed of two repetitions × four cue conditions × three flanker conditions × two target positions × two target directions. Within each 96-trial block, trials were distributed randomly (Fan et al., 2002)⁵⁵.
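The factorial structure described above (two repetitions × four cues × three flankers × two positions × two directions) yields 96 trials, which a short enumeration makes explicit. The level labels below follow the conditions named in the task description; the function name `build_block` and the use of a seed are assumptions made for this sketch, not details of the Inquisit script.

```python
import random
from itertools import product

CUES = ("none", "center", "double", "spatial")
FLANKERS = ("neutral", "congruent", "incongruent")  # "neutral" = single arrow, no flankers
POSITIONS = ("up", "down")
DIRECTIONS = ("left", "right")
REPETITIONS = 2

def build_block(seed=None):
    """Return one randomly ordered block containing every condition combination twice."""
    trials = [
        {"cue": c, "flanker": f, "position": p, "direction": d}
        for _ in range(REPETITIONS)
        for c, f, p, d in product(CUES, FLANKERS, POSITIONS, DIRECTIONS)
    ]
    random.Random(seed).shuffle(trials)  # random order within the block
    return trials

block = build_block(seed=1)
print(len(block))  # 2 * 4 * 3 * 2 * 2
```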

Trail Making task – The trail task has been employed as an indicator of processing speed and fluid intelligence (Salthouse, 2005¹³, 2011¹³). Two versions of the task were given. The first (“Trail A”), included only numbers in ascending order, in which participants were required to draw a line connecting the first circle (numbered “1”) to the last (numbered “25”). Circles were scattered around the screen. The second Trail version (“Trail B”) included both numbers and letters in ascending order and alternately; participants were requested to draw a connecting line, alternating between numbers and letters in order, 1 – a – 2 – b – 3 – c, etc. Both Trail test versions included 25 circles (numbers or numbers and letters, with Trail B ending on “13”; Gaudino et al., 1995)⁵⁶. Before each of the trails, there were short sample trials for training; participants were instructed to perform them as fast as they can. The program indicated when participants erred and coerced them to resume from the last correct step.
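The two connection orders described above can be generated programmatically, which also confirms that a 25-circle Trail B ends on “13”. This is a small illustrative sketch; the function names are hypothetical, and Latin letters are used as in the description even though the administered version was translated into Hebrew.

```python
from string import ascii_lowercase

def trail_a_sequence(n_circles=25):
    """Numbers only, in ascending order: 1, 2, ..., n_circles."""
    return [str(i) for i in range(1, n_circles + 1)]

def trail_b_sequence(n_circles=25):
    """Alternating numbers and letters: 1, a, 2, b, 3, c, ..."""
    sequence = []
    for i in range(n_circles):
        if i % 2 == 0:
            sequence.append(str(i // 2 + 1))          # even positions hold numbers
        else:
            sequence.append(ascii_lowercase[i // 2])  # odd positions hold letters
    return sequence

trail_b = trail_b_sequence()
print(" - ".join(trail_b))
```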

The number-letter version is considered to require more cognitive effort than the numbers-only version, requiring visual search and motor control as well as inhibition and executive control (Arbuthnott & Frank, 2000)⁴¹. The trail task was computerized, and the paths were drawn by moving the mouse pointer through the circles and between them.

The Corsi Block-tapping task is a computerized task assessing the capacity of visuospatial working memory (visual memory span). Participants saw nine boxes in fixed locations, on which a sequence was created by lighting different boxes one by one, with increasing sequence length (two different sequences for each span). The maximal possible sequence was nine boxes; the task was adaptive, so it was terminated after a participant failed to recall a sequence twice. Participants were asked to recall each sequence and repeat it immediately after it ended. The task is commonly used and is considered the visual analogue of the digit span task (Kessels et al., 2000)⁴⁰.

The N-back task is a common, demanding phonological working memory task that includes aspects of executive control. Participants are required to decide, for every letter presented in a sequence, whether it is the same as or different from the letter presented N letters earlier in the sequence. The value of N may refer to the previous letter (N = 1), to the letter two positions back (N = 2), or even to the letter three positions back (N = 3). For instance, in the N = 3 sequence D, N, Y, D, K, Y, … one should answer “yes” for the second D and the last Y, because an identical letter was presented three items earlier (the first D and the first Y, respectively). A zero value of N refers to the very first letter in the sequence (so the target is fixed for each N = 0 sequence, and participants should respond positively any time that letter is presented along the sequence). There were three blocks for each level of N (0, 1, 2, or 3), with a 20-letter sequence per block (Kane et al., 2007)³⁸. Each letter was presented for 500 ms, and the ISI was 2,500 ms. Participants were asked to press “A” when the target was shown. The task yielded scores for the number of hits, the number of false alarms, and a discrimination value (hits minus false alarms, divided by the number of blocks).
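The target rule and the discrimination score described above can be sketched in a few lines. The example reuses the N = 3 sequence from the text; the function names, the response set and the per-block counts are hypothetical, and the sketch covers only N ≥ 1 because the handling of the first letter in the N = 0 condition is not fully specified here.

```python
def nback_targets(letters, n):
    """Indices (0-based) where the letter matches the one presented n items earlier."""
    if n < 1:
        raise ValueError("this sketch covers only n >= 1")
    return [i for i in range(n, len(letters)) if letters[i] == letters[i - n]]

def score_block(letters, n, responses):
    """Count hits and false alarms; `responses` is the set of indices answered 'yes'."""
    targets = set(nback_targets(letters, n))
    hits = len(targets & responses)
    false_alarms = len(responses - targets)
    return hits, false_alarms

def discrimination(block_scores):
    """(Total hits minus total false alarms) divided by the number of blocks."""
    hits = sum(h for h, _ in block_scores)
    false_alarms = sum(fa for _, fa in block_scores)
    return (hits - false_alarms) / len(block_scores)

letters = ["D", "N", "Y", "D", "K", "Y"]
targets = nback_targets(letters, 3)   # second D (index 3) and last Y (index 5)

# Hypothetical participant: responds to the second D and, wrongly, to K.
hits, false_alarms = score_block(letters, 3, responses={3, 4})

# Hypothetical (hits, false alarms) for three blocks at one level of N.
d_value = discrimination([(1, 1), (2, 0), (2, 1)])
print(targets, hits, false_alarms, d_value)
```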

Digit Symbol substitution task is a part of the Wechsler intelligence battery (WAIS). Nine digits (1 – 9) are substituted by respective nine fixed meaningless symbols (using an injective function). Using a conversion key visible along the task, participants were required to replace, as fast and as accurately as they could, a sequence of digits, by the nine corresponding symbols. The conversion key (a table with the nine digits and the nine symbols underneath them) was presented in a designated box along the task (Wechsler, 1997)⁵⁷. In the computerized task, the symbols switched roles with the digits, so

RESULTS

BREAKFAST TASK (ALTERNATING SCREENS)

The results are presented considering the three questions. Note that observations on an individual trial that had values 2.5 standard deviations worse than the average for a given session were removed as outliers. Less than 2.5% of data were considered outliers.
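The outlier rule can be sketched as a one-sided filter applied within each session. This is an illustrative sketch only: the helper name `drop_worse_outliers`, the use of the sample standard deviation and the example values are assumptions, not details taken from the study.

```python
from statistics import mean, stdev
from typing import List, Sequence


def drop_worse_outliers(
    values: Sequence[float], higher_is_better: bool, k: float = 2.5
) -> List[float]:
    """Drop trial values more than k SDs worse than the session mean."""
    m = mean(values)
    sd = stdev(values)  # assumption: sample SD (n - 1 denominator)
    if higher_is_better:
        # Worse means lower, e.g. number of tables set (TS).
        return [v for v in values if v >= m - k * sd]
    # Worse means higher, e.g. error measures such as RST or DIS.
    return [v for v in values if v <= m + k * sd]


# Hypothetical RST-like error values for one session (not data from the study).
session = [5, 6, 7] * 6 + [6, 50]
kept = drop_worse_outliers(session, higher_is_better=False)
print(len(session), len(kept))
```

Because the filter is one-sided, unusually good trials are retained and only trials far on the worse side of the session average are removed.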

| Session | RST | DIS | TS |
|---|---|---|---|
| Session 1 | 13.31 (10.37) | 1.90 (1.24) | 7.34 (1.09) |
| Session 2 | 6.57 (5.91) | 1.93 (1.24) | 8.05 (1.57) |
| Session 3 | 6.71 (6.38) | 2.74 (3.12) | 8.32 (1.99) |
| Session 4 | 5.12 (4.97) | 2.67 (4.00) | 9.17 (1.51) |
| Session 5 | 6.35 (6.38) | 2.05 (1.87) | 9.32 (1.70) |

Note. Averages and SDs of Cooking Discrepancy (DIS), range of stop times, (RST = inaccuracy of synchronous serving time), Table setting (TS=number of correct tables set). SDs calculated from subject averages (over trials) are shown in parentheses.

INDIVIDUAL DIFFERENCES

The comparative fixed and mixed analysis of results showed significant individual differences in all three performance measures: Discrepancy (χ²(3) = 104.45, p < .001), RST (χ²(3) = 283.77, p < .001) and Table Setting (χ²(3) = 651.06, p < .001).

PRACTICE EFFECTS

Discrepancy: no significant average practice effect (t(41.4) = 0.850, p = .400). RST: significant practice (session) effect (t(29.8) = -4.56, p < .001). Table setting: a highly significant practice effect (t(29.8) = 10.68, p < .001).

CORRELATION BETWEEN INITIAL ABILITY AND PRACTICE

The correlations between subjects’ practice slopes and intercepts were: Discrepancy: not significant (χ²(1) = 1.58, p = 0.21); RST: highly significant and negative (r = -.87; χ²(1) = 25.3, p < .001); Table Setting: not significant (χ²(1) = 1.69, p = 0.194).
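The idea of relating initial ability to practice gains can be illustrated with a simplified two-stage calculation: fit a straight line to each subject's scores over sessions, then correlate the fitted intercepts with the fitted slopes. This sketch is an assumption-laden approximation and not the mixed-model analysis reported here; the helper names and the scores are hypothetical.

```python
from math import sqrt
from typing import Dict, List, Sequence, Tuple


def fit_line(y: Sequence[float]) -> Tuple[float, float]:
    """Least-squares intercept and slope of y over sessions coded 0, 1, 2, ..."""
    n = len(y)
    x = range(n)
    x_mean = sum(x) / n
    y_mean = sum(y) / n
    sxx = sum((xi - x_mean) ** 2 for xi in x)
    sxy = sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean  # predicted score at the first session
    return intercept, slope


def pearson(a: Sequence[float], b: Sequence[float]) -> float:
    """Pearson correlation between two equally long sequences."""
    a_mean = sum(a) / len(a)
    b_mean = sum(b) / len(b)
    cov = sum((ai - a_mean) * (bi - b_mean) for ai, bi in zip(a, b))
    var_a = sum((ai - a_mean) ** 2 for ai in a)
    var_b = sum((bi - b_mean) ** 2 for bi in b)
    return cov / sqrt(var_a * var_b)


# Hypothetical error scores (lower is better) over five sessions.
scores: Dict[str, List[float]] = {
    "s1": [10, 9, 8, 7, 6],
    "s2": [20, 18, 16, 14, 12],
    "s3": [30, 27, 24, 21, 18],
}
fits = [fit_line(y) for y in scores.values()]
intercepts = [f[0] for f in fits]
slopes = [f[1] for f in fits]
r = pearson(intercepts, slopes)
print(round(r, 3))
```

In this constructed example the correlation is perfectly negative by design. For an error measure such as RST, a negative correlation means that subjects who start with larger errors show steeper reductions over sessions.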

BREAKFAST TASK (SIX SCREENS)

| Session | RST | DIS | TS |
|---|---|---|---|
| Session 1 | 24.32 (19.20) | 3.83 (2.85) | 7.46 (1.74) |
| Session 2 | 15.21 (10.52) | 4.1 (3.11) | 8.38 (2.02) |
| Session 3 | 15.61 (11.54) | 3.72 (3.06) | 9.07 (2.06) |
| Session 4 | 14.44 (10.10) | 2.77 (2.13) | 9.55 (2.52) |
| Session 5 | 11.60 (6.86) | 4.28 (3.49) | 9.67 (2.54) |

Note. Averages and SDs of Cooking Discrepancy (DIS), range of stop times, (RST = inaccuracy of synchronous serving time), Table setting (TS=number of correct tables set). SDs calculated from subject averages (over trials) are shown in parentheses.

INDIVIDUAL DIFFERENCES

The comparative fixed and mixed analysis of results showed significant individual differences in all three performance measures: DIS (χ²(3) = 215.05, p < .001), RST (χ²(3) = 542.94, p < .001), TS (χ²(3) = 1142.6, p < .001).

PRACTICE EFFECTS

DIS: no significant practice effect was found (t(32.0) = -0.305, p = .763). RST: practice effects were significant (t(32.1) = -4.385, p < .001). TS: practice effects were significant (t(32.0) = 6.542, p < .001).

CORRELATION BETWEEN INITIAL ABILITY AND PRACTICE

DIS: marginally significant negative correlation (r = -0.50) between subjects’ intercept levels and practice slopes (χ²(1) = 3.095, p = 0.079). RST: highly negative correlation (r = -0.95) between subjects’ intercepts and practice slopes (χ²(1) = 57.38, p < .001); performers with initially low performance gained more from practice than higher performers. TS: no significant correlation between subjects’ intercept levels and practice slopes (χ²(1) = 1.158, p = 0.282).
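The p-values attached to the chi-square statistics reported above can be checked from the upper-tail probability of the chi-square distribution. The sketch below uses the closed forms for 1 and 3 degrees of freedom with standard-library functions only; `chi2_sf` is a hypothetical helper name, and other degrees of freedom are deliberately not handled.

```python
from math import erfc, exp, pi, sqrt


def chi2_sf(x: float, df: int) -> float:
    """Upper-tail probability P(X > x) for a chi-square variable, df in {1, 3}."""
    if x < 0:
        raise ValueError("x must be non-negative")
    if df == 1:
        return erfc(sqrt(x / 2))
    if df == 3:
        return erfc(sqrt(x / 2)) + sqrt(2 * x / pi) * exp(-x / 2)
    raise ValueError("only df = 1 and df = 3 are implemented in this sketch")


# Slope-intercept statistics reported for the six-screen experiment (df = 1).
for label, stat in [("DIS", 3.095), ("RST", 57.38), ("TS", 1.158)]:
    print(label, round(chi2_sf(stat, 1), 3))
```

The values for DIS and TS come out close to the reported p = 0.079 and p = 0.282, and the RST value is far below .001.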

COGNITIVE TASKS BATTERY

We first present the results and analyses conducted on the cognitive test battery. We then examine its relationship to Breakfast Task performance.

| Session | Alerting effect | Orienting effect | Conflict effect | Trail A | Trail B | Corsi Block | N back (n = 31) | DSS |
|---|---|---|---|---|---|---|---|---|
| Session 2 | 48.88 (18.82) | 24.41 (14.43) | 73.23 (18.98) | 33.20 (7.89) | 40.99 (10.84) | 6.78 (1.13) | 53.77 (5.75) | 76.47 (21.98) |
| Session 3 | 54.88 (25.90) | 21.48 (21.63) | 62.28 (16.09) | 29.56 (8.43) | 35.35 (11.56) | 6.56 (1.11) | 55.39 (4.54) | 95.53 (32.27) |
| Session 4 | 49.87 (23.70) | 14.92 (18.72) | 52.84 (20.33) | 28.30 (9.07) | 34.98 (10.79) | 6.81 (1.20) | 54.84 (5.22) | 114.00 (41.16) |
| Session 5 | 47.44 (32.30) | 18.18 (16.59) | 53.91 (18.69) | 25.73 (7.53) | 29.97 (8.90) | 7.00 (1.32) | 55.03 (7.31) | 125.97 (41.77) |
| Session 6 | 52.52 (37.26) | 18.14 (29.27) | 52.36 (17.75) | 25.65 (7.23) | 29.55 (9.32) | 7.09 (1.00) | 54.29 (8.06) | 129.78 (42.67) |
| Session 7 | 57.76 (56.34) | 23.11 (26.71) | 45.53 (18.59) | 26.04 (8.62) | 28.21 (9.83) | 7.34 (1.04) | 53.61 (9.05) | 139.88 (48.66) |

Note. For ANT and Trail, lower time means better performance. For Block span, N-back, and Digit Symbol Substitution, higher counts mean better performance.

| S2 | Alerting | Orienting | Conflict | Trail A | Trail B | Corsi Block | N Back | DSS |
|---|---|---|---|---|---|---|---|---|
| Session 2 | 0.42* | 0.29 | 0.17 | -0.14 | -0.18 | 0.17 | 0.02 | -0.21 |
| Session 7 | 0.61** | 0.51** | 0.27 | 0.13 | 0.21 | 0.00 | -0.07 | -0.17 |

*p<.05, **p<.01

Note. Lower-left triangle Session 2; Upper-right triangle Session 7. On the gray-shaded diagonal the correlation for each task between first and last sessions.

DISCUSSION

The leading motivation for the present study has been the claim that the common evaluation of individual differences in cognitive abilities is mostly based on single sessions, and that practice on tasks has been limited or not included in the current use of cognitive test batteries. We argue that, in addition to representing its targeted cognitive ability (e.g., working memory, processing speed, inhibition, task switching), a task’s format, features and instructions include many elements which influence performance levels and may be irrelevant to the evaluation of that ability. Practice would serve as a common experience on a task to all its performers and may reveal the initial and later effects of such components. It may also reduce the first-session effects on performance of specific elements arising from differences in past histories and experience. Significant individual differences in first-session performance levels were obtained for all tasks and measures in the three experiments, supporting their use to differentiate between individuals in their embedded cognitive abilities. However, practice had differential effects on tasks and their performance. Three types of practice effects were observed: (1) no significant effects on individual performance levels; (2) significant and equal gains for performers with different first-session performance levels; (3) interactive effects between practice gains and first-session performance levels. Individual differences and differential practice effects were revealed not only for global performance on a task but also for specific components within it. These effects are considered in reference to the format of a given task, its nature and attention demand.

The alternating-screen Breakfast Task was presented as an integrated single mission of coping with multitasking demands, but the effects of practice on its three performance measures differed. On the table setting measure of voluntary investment, performance improved with equal gain for performers with different initial score levels. In cooking, which involves coping with imposed load, accuracy (DIS) did not improve with practice, whereas the executive control measure of serving synchrony (RST) had a negative slope with practice, and the significant negative correlation between individual slopes and intercepts indicates that performers with poorer initial performance gained more from practice than subjects with higher initial performance. These differences also reflect the distinction between the three measures proposed by Rose et al. (2015) and Gopher et al. (2022), showing that in a single complex task, individual differences in basic performance abilities followed the nature, structure and demand of specific task segments rather than those of the global task as an integrative entity. They reinstate the question of identifying, within tasks, the segments and the relevant components of the enclosed cognitive abilities.

The study by Rose et al. (2015), which did not include practice, distinguished between the local monitoring nature of the food accuracy measure and the two executive control performance measures of food serving synchrony (RST) and table setting (TS). Gopher et al. (2022) further distinguished between executive control of voluntary investment and coping with imposed load. In the present study, five sessions of practice did not have a significant effect on cooking accuracy performance levels but had significant and differential effects on the two types of executive control performance measures. When balancing the cooking accuracy and synchronized serving measures, performers with initially lower synchronization levels benefited more from practice, while a similar difference was not obtained in table setting voluntary investment.

In the six-screen version of the Breakfast Task, despite the much higher global load and elevated attention management requirements, the same distinction between the three measures in practice effects and individual differences was obtained. The higher load and attention demand of the six screens affected cooking performance (Tables 1 and 2). Both cooking accuracy (DIS) and synchronous serving (RST) error averages were significantly increased, with the most pronounced being the impairment of RST performance. Table setting (TS) performance was not impaired in the six-screen condition, with no meaningful change from the alternating screens experiment. These findings are consistent with previous studies (Craik & Bialystok, 2006; Gopher et al., 2022). Table setting’s distinct voluntary and static nature enabled subjects to develop and apply proper strategies to cope with the increasing attention management and performance requirements.

The five tasks employed in the cognitive battery differ in the dimension of cognitive ability they target. The effects of practice on the tasks were also not uniform. In all tasks, individual differences in initial performance levels (intercepts), practice effects (slopes) or both were a highly relevant component of analysis. What is the information added by the differential effects of practice? We first examine the differential practice effects on the performance of the ANT task, which was theoretically constructed to separate and compare the behavioral operation of exogenous and endogenous attention systems (Posner & Rothbart, 2007). Our results show that they differ not only in reaction time costs during the first session but also in their practice effects (Table 4). In previous studies the exogenous attention system has been argued to capture attention and operate automatically. It has been shown to be fast but transient, whereas the operation of the endogenous attention system has been reported to be slower, more controlled and effortful (Bonder & Gopher, 2019; Carrasco, 2011; Kurtz et al., 2017; Kahneman, 2011). Consistent with the distinction between the two attention systems, no practice effects on performance were obtained for the exogenous attention alerting and orienting cost measures, while a significant reduction of reaction time costs with practice was obtained for the endogenous attention conflict resolution measure, which is called upon to resolve the mismatch between the pointing directions of the central and side-presented arrows. The differential practice effects on the ANT task measures not only substantiate its proposed distinction between the operation modes of the two attention systems but also demonstrate that separate practice effects can be obtained for different components within a task.

The format and features of the ANT task were intentionally developed to separate the costs and operation modes of attention systems, but they may also provide a conceptual framework for considering the differential practice effects observed in the performance of the Breakfast Task and the tasks included in the cognitive battery. In the Breakfast Task the cooking accuracy measure (DIS) is based on monitoring cooking time, in which performers monitor the time-driven size changes in the five bar graphs of food items (Figure 1). These changes are automatically captured by the exogenous attention system (see also Rose et al., 2015). The synchronized food serving and table setting performance measures both require endogenous attention and executive control involvement. In the two Breakfast Task experiments no practice effects were obtained for the cooking accuracy performance measure (DIS), while significant practice effects were obtained for both serving synchrony (RST) and table setting (TS). In both the ANT and the Breakfast tasks, practice effects were obtained for the within-task components demanding endogenous attention operations, and no effects were obtained for components automatically captured by exogenous attention. In comparison with the ANT, the Breakfast Task was developed as a global indicator of attention management and multitask ability and is a much more complex, continuous and multi-element task. Nevertheless, in both tasks, practice effects depended on the nature and attention demands of the specific task components rather than on those targeted by the global task. This conclusion is also strengthened by the results of the second Breakfast Task experiment, in which the same differential practice effects were maintained although the overall difficulty and attention management demands of the global task were much increased.

If practice effects on task performance underline the initial difficulties and differences between performers, it should be recognized that although significant individual differences were obtained in first-session performance for all three measures in each of the two tasks, practice effects were obtained only for those associated with endogenous attention, top-down operations. One important difference between the tasks should be noted: in the ANT task the costs of conflict resolution were significantly reduced with practice, but there was no interaction of practice with individuals’ first-session performance levels. Similar results were obtained for table setting performance in the Breakfast Task. However, for the synchronized food serving measure in cooking there was a significant negative interaction, such that performers with lower performance levels in the first session gained more from practice than those with higher initial performance levels. Can the difference be associated with the structure and format of the task?

The ANT task depends on responses to discrete, short-duration presentations and does not include a working memory component. The Breakfast Task comprises multiple segments, and both table setting and cooking include working memory and attention management demands. However, like the ANT, table setting is static, discrete and under voluntary control, while cooking is a continuous and time-dependent segment. While cooking, the cooking durations of foods and their end points are both monitored by automatically following relative changes in the five food bar graphs. In each cooking round of the Breakfast Task, the food serving synchrony measure represents a subjective solution to a possible conflict between cooking accuracy and joint serving errors. The instructions emphasized their equal importance for scoring cooking performance. Once cooking has started, executive control directs a subjective decision on the relative weight of food accuracy and joint serving errors. This balancing and conflict resolution element in cooking is like the arrow direction conflict in the ANT task and does not exist in the table setting segment. The observed interaction may be an example of significant individual differences in multitask performance that are observed during the first cooking session but change with practice. Further research is needed to decide whether the observed difference reflects the targeted evaluation of multitask ability or is associated with other irrelevant factors.

The existence and impact of conflict resolution between two items captured by exogenous attention can be examined in two additional tasks which revealed a significant interaction between practice and first-session performance levels: the working memory N-back task and Trail B, which called for ordered switching between digits and letters in creating trails between all items presented on the screen. In both tasks a significant interaction was found between first-session performance levels and the practice slope, and in both cases practice was more beneficial for lower-level than for higher-level performers. In the N-back working memory task, a presented sequence of single digits or letters is attended by exogenous attention, and executive control is called upon to compare the identity of the presently displayed item with that of the item presented N positions back. In the Trail B task version, all relevant letters and digits are displayed on the screen and are present at each step. Performers are asked to switch in order between digits and letters when they create a trail. In this task, attention control is called upon to choose the correct next step from the exogenously captured digits and letters. Thus, as in the ANT and in cooking, top-down attention control operations are called upon to resolve conflicts created by automatically attended response alternatives.

Common to the three tasks is the call for endogenous executive control to resolve a conflict between two signals captured by exogenous attention. The three tasks differ from the ANT task, in which significant practice effects were obtained without interaction. However, while the ANT task is discrete, based on responses to single trials, and has limited working memory demands, the Breakfast, N-back and Trail B tasks each include a heavy working memory component and are continuous and time dependent or based on sequences of multiple presentations for each response. It is instructive that the efficient employment of executive control in this latter case creates initial differences in performance levels which interact with practice.

The influence of the requirement to resolve a conflict created by exogenous attention can be further evaluated by examining practice effects on other tasks in the present study which include both working memory demands and multiple-item presentation per response, but no such conflict. Table setting in the Breakfast Task, the Trail A task version, Corsi Block Tapping and the Digit Symbol Test all include working memory demands and multiple items but not a created conflict. In all of them, individual differences and practice effects were significant but did not interact with first-session performance levels. It appears that the need to resolve a conflict added a factor to the performed task, a requirement which increased its executive control difficulty, created differences in first-session performance levels and led to the cross interaction between the practice slope and the first-session intercept.

It is also interesting to examine the obtained practice effects in the context of the compensation and magnification training effect models that were described in the introduction. To recap, compensation models argued for larger training gains for performers with initially lower performance (Karbach et al., 2017), while the magnification model reported higher comparative training gains in performers with initially high performance levels (Foster et al., 2017). In the context of the present study, we can examine the experimental tasks employed by the two models. In Karbach’s compensation experiments, the focus was on training mixed multiple switching between two tasks to improve executive control. For example, a figure was presented for which one task was to decide whether it was a fruit or a vegetable and the second task was to judge its size as large or small. In another experiment with displayed figures, subjects had to switch between judging planes/cars or single/pairs of objects presented. Subjects had to switch every two trials. The visual elements in each figure were automatically attended by exogenous attention, and the conflict to resolve was which of the two categories was relevant. Thus, the tasks used by the proposed compensation model are very similar to the Trail B digit and letter task, in which practice gains were larger for performers with initially lower performance.

The magnification effects in the second model were reported in studies of the effects of training on working memory span, by teaching and practicing WM strategies such as mnemonics. A good example is the study by Foster et al. (2017). In their running span WM training, subjects were instructed to remember the last X presented letters, with X increasing at each level of difficulty. Single letters were then briefly flashed on the screen. The number of letters seen varied with each trial but was never fewer than the number the subjects were told to recall. It is a WM task of increasing difficulty, but with no conflict to resolve. In this condition, teaching a memory strategy benefits those with better initial WM performance. In its format, this task is like the Corsi Block Tapping task. In our experiments we did not observe a practice magnification effect, but we did not conduct specific training to improve WM span. In all tasks in which working memory was called upon without a conflict to resolve, there were significant practice effects which did not distinguish between performers based on initial performance levels. Thus, when practice effects in our experiments are related to the nature of the required executive control involvement and the type of attention demands, they appear to be consistent with the results of the tasks used by the compensation and magnification training models.

CONCLUSIONS

The main findings and points of the three experiments can be summarized as follows: Practice effects were not uniform and reflected the nature and processing demands of different tasks as well as of components within tasks. These differences cannot be observed and evaluated in the results of first-session performance. The differential effects on components within tasks imply that, in many cognitive tasks, separate scores rather than a single score should be given to individual performance levels.

Across the variety of cognitive tasks employed in the present study, three major processing requirements were identified to characterize the nature and demand of task components: the nature of attention demands, conflict or switching between inputs, and high working memory requirements.

LIMITS AND FUTURE RESEARCH

There are limits and questions to the results and theoretical perspective proposed by the present study to be followed in future research.

- Increased sample size: Although the focus of this study was on practice effects and the results of the three experiments were consistent and significant, sample sizes larger than the 30 participants in each experiment are commonly employed when the focus is the measurement of individual differences. This limit calls for a replication of the study with a larger number of participants.

- Contribution of task components and format: a major outcome to be supported in continuing research is that task format and its main component requirements should be separately evaluated when measuring individual differences in cognitive abilities. The present tasks and their format should be considered as one example. The nature and dimensions of possible contributing components and their effect should be further investigated and justified. This strong claim has major implications for research and application and needs to be tested and examined in future research.

- Linkage to existing models: What is the relationship and linkage between the approach and results of the present study and the existing factor analysis and latent structure task grouping approaches?

- Coping with imposed load versus voluntary investment efforts: Gopher et al. (2022) demonstrated the importance of this distinction when coping with load and multitask demands. The Breakfast Task table setting was the only performance measure in which performers had complete freedom to decide when and how long to invest in performing this task segment. We believe that, when coping with load, the degrees of freedom available to performers in strategy development are a highly important but neglected cognitive dimension, in which individual differences should be studied.

REFERENCES

- Oren, C., Kennet-Cohen, T., Turvall, E. & Allalouf, A. (2014). Demonstrating the validity of three general scores of PET in predicting higher education achievement in Israel. Psicothema, 26, 117-126.

- Kleper, D. & Seka, N. (2017). General Ability or Distinct Scholastic Aptitudes? A Multidimensional Validity Analysis of a Psychometric Higher-Education Entrance Test. Journal of Applied Measurement. http://jampress.org/abst.htm

- Gopher, D. (1982). A selective attention test as a predictor of success in flight training, Human Factors 24, 173-183.

- Charles, B. K., & Florah, O. M. (2021). A Critical Review of Literature on Employment Selection Tests. Journal of Human Resource and Sustainability Studies, 9, 451-469. https://doi.org/10.4236/jhrss.2021.93029

- Friedman, N. & Miyake, A. (2017). Unity and diversity of executive functions: Individual differences as a window on cognitive structure. Cortex 86, 186-204.

- Karbach, J., Könen, T., & Spengler, M. (2017). Who benefits the most? Individual differences in the transfer of executive control training across the lifespan. Journal of Cognitive Enhancement, 1, 394-405. https://doi.org/10.1007/s41465-017-0054-z

- Salthouse, T. A., & Ferrer-Caja, E. (2003). What needs to be explained to account for age-related effects on multiple cognitive variables? Psychology and Aging, 18, 91-110.

- Eich S.T, MacKay-Brandt A, Stern Y, Gopher D (2016). Age-Based Differences in Task Switching Are Moderated by Executive Control Demands. J Gerontology B: Psychol Sci Soc Sci.

- Bialystok, E., Craik, F. I., & Stefurak, T. (2008). Planning and task management in Parkinson’s disease: differential emphasis in dual-task performance. Journal of the International Neuropsychological Society, 14(2), 257-265.

- Tanguay, A. N., Davidson, P. S. R., Nuñez, K. V. G., & Ferland, M. B. (2014). Cooking breakfast after a brain injury. Frontiers in Behavioral Neuroscience, 8, 272.

- Folstein, M. F., Folstein, S. E., & McHugh, P. R. (1975). Mini-Mental State: a practical method for grading the cognitive state of patients for the clinician. J Psychiatr Res, 12, 189-198.

- Lucas, J., Ivnik, R., Smith, G., et al. (1998). Mayo’s older Americans normative studies: category fluency norms. J Clin Exp Neuropsychol, 20, 194-200.

- Salthouse, T. A. (2005). Relations between cognitive abilities and measures of executive functioning. Neuropsychology, 19(4), 532-545.

- Rose, N. S., Luo, L., Bialystok, E., Hering, A., Lau, K., & Craik, F. I. M. (2015). Cognitive processes in the Breakfast Task: Planning and monitoring. Canadian Journal of Experimental Psychology / Revue canadienne de psychologie expérimentale, 69(3), 252-263. https://doi.org/10.1037/cep0000054

- Kabat, M. H., Kane, H.M., Jefferson, L.R., A. L. & DiPino, R. K (2001). Construct Validity of Selected Automated Neuropsychological Assessment Metrics (ANAM) Battery Measures. The Clinical Neuropsychologist Volume 15, 498-507.

- Miyake, A., Friedman, N. P., Emerson, M. J., Witzki, A. H., Howerter, A., & Wager, T. D. (2000). The unity and diversity of executive functions and their contributions to complex frontal lobe tasks: A latent variable analysis. Cognitive Psychology, 41, 49-100.

- Ackerman, P. L., & Cianciolo, A. T. (2000). Cognitive, perceptual speed, and psychomotor determinants of individual differences in skill acquisition. Journal of Experimental Psychology: Applied, 6, 259-290.

- Salthouse, T. A. (2004). Localizing age-related individual differences in a hierarchical structure. Intelligence, 32, 541-561.

- Miyake, A., & Friedman, N. P. (2012). The nature and organization of individual differences in executive functions: four general conclusions. Current Directions in Psychological Science, 21, 8-14.

- Frederiksen, J.R. & White, B.Y. (1989). An Approach to Training Based Upon Principal-Task Decomposition, Acta Psychologica, 71, 89-146.

- Gopher, D., Weil, M., & Siegel, D. (1989). “Practice under changing priorities: An approach to training of complex skills”. Acta Psychologica, 71, 147-179.

- Zinke, K., Zeintl, M., Eschen, A., Herzog, C., & Kliegel, M. (2012). Potentials and limits of plasticity induced by working memory training in old-old age. Gerontology, 58, 79-87.

- Zinke, K., Zeintl, M., Rose, N. S., Putzmann, J., Pydde, A., & Kliegel, M. (2014). Working memory training and transfer in older adults: effects of age, baseline performance, and training gains. Developmental Psychology, 50, 304-315.

- Karbach, J., & Kray, J. (2009). How useful is executive control training? Age differences in near and far transfer of task-switching training. Developmental Science, 12, 978-990.

- Bherer, L., Kramer, A. F., Peterson, M. S., Colcombe, S., Erickson, K., & Becic, E. (2008). Transfer effects in task-set cost and dual-task cost after dual-task training in older and younger adults: further evidence for cognitive plasticity in attentional control in late adulthood. Experimental Aging Research, 34, 188-219.

- Cepeda, N. J., Kramer, A. F., & Gonzalez de Sather, J. (2001). Changes in executive control across the life span: examination of task-switching performance. Developmental Psychology, 37, 715-730.

- Könen, T., & Karbach, J. (2021). Analyzing differences in intervention-related changes. Advances in Methods and Practices in Psychological Science, 4(1), 1-19.

- Baltes, P. B., & Kliegl, R. (1992). Further testing of limits of cognitive plasticity: negative age differences in a mnemonic skill are robust. Developmental Psychology, 28, 121-125.

- Brehmer, Y., Li, S. C., Mueller, V., von Oertzen, T. V., & Lindenberger, U. (2007). Memory plasticity across the life span: uncovering children’s latent potential. Developmental Psychology, 43, 465-477.

- Foster, J. L., Harrison, T. L., Hicks, K. L., Draheim, C., Redick, T. S., & Engle, R. W. (2017). Do the effects of working memory training depend on baseline ability level? Journal of Experimental Psychology: Learning, Memory, and Cognition, 43(11), 1677-1689. https://doi.org/10.1037/xlm0000426

- Lindenberger, U., Kliegl, R., & Baltes, P. B. (1992). Professional expertise does not eliminate age differences in imagery-based performance during adulthood. Psychology & Aging, 7, 585-593.

- Lövdén, M., Brehmer, Y., Li, S. C., & Lindenberger, U. (2012). Training-induced compensation versus magnification of individual differences in memory performance. Frontiers in Human Neuroscience, 6, 141.

- Verhaeghen, P., & Marcoen, A. (1996). On the mechanisms of plasticity in young and older adults after instruction in the method of loci: evidence for an amplification model. Psychology and Aging, 11(1), 164-178.

- Craik, F. I., & Bialystok, E. (2006). Planning and task management in older adults: cooking breakfast. Memory & Cognition, 34(6), 1236-1249.

- Posner, M. I., & Rothbart, M. K. (2007). Research on attention networks as a model for the integration of psychological science. Anu. Rev. Psychol., 58, 1-23.

- Kane, M. J., Conway, A. R., Miura, T. K., & Colflesh, G. J. (2007). Working memory, attention control, and the N-back task: a question of construct validity. Journal of Experimental Psychology: Learning, Memory, and Cognition, 33(3), 615-622.

- Jaeggi, S. M., Buschkuehl, M., Perrig, W. J., & Meier, B. (2010). The concurrent validity of the N-back task as a working memory measure. Memory, 18(4), 394-412.

- Kessels, R. P., Van Zandvoort, M. J., Postma, A., Kappelle, L. J., & De Haan, E. H. (2000). The Corsi block-tapping task: standardization and normative data. Applied Neuropsychology, 7(4), 252-258.

- Arbuthnott, K., & Frank, J. (2000). Trail making test, part B as a measure of executive control: validation using a set-switching paradigm. Journal of Clinical and Experimental Neuropsychology, 22(4), 518-528.

- Baddeley, A. (2012). Working memory: theories, models, and controversies. Annual Review of Psychology, 63, 1-29.

- Salthouse, T. A. (2011). What cognitive abilities are involved in trail-making performance? Intelligence, 39(4), 222-232.

- Sánchez-Cubillo, I., Periáñez, J. A., Adrover-Roig, D., Rodríguez-Sánchez, J. M., Ríos-Lago, M., Tirapu, J., & Barceló, F. (2009). Construct validity of the Trail Making Test: role of task-switching, working memory, inhibition/interference control, and visuomotor abilities. Journal of the International Neuropsychological Society, 15, 438-450. https://doi.org/10.1017/S1355617709090626

- Hoyer, W. J., Stawski, R. S., Wasylyshyn, C., & Verhaeghen, P. (2004). Adult age and digit symbol substitution performance: a meta-analysis. Psychology and Aging, 19(1), 211-214.

- Jaeger, J. (2018). Digit Symbol Substitution Test. Journal of Clinical Psychopharmacology, 38, 513-519.

- Gopher, D., Ben-Eliezer, D., & Levine, A. (2022). Imposed load versus voluntary investment: executive control and attention management in dual-task performance. Acta Psychologica, 227, 103591. https://doi.org/10.1016/j.actpsy.2022.103591

- Bonder, T., & Gopher, D. (2019). The effect of confidence rating on a primary visual task. Frontiers in Psychology, 10, 2674.

- Carrasco, M. (2011). Visual attention: the past 25 years. Vision Research, 51(13), 1484-1525.

- Kurtz, P., Shapcott, K. A., Kaiser, J., Schmiedt, J. T., & Schmid, M. C. (2017). The Influence of Endogenous and Exogenous Spatial Attention on Decision Confidence. Scientific Reports, 7(1). https://doi.org/10.1038/s41598-017-06715-

- Kahneman, D. (2011). Thinking, fast and slow. Penguin Books.

- Inquisit 5 [Computer software]. (2016). Retrieved from https://www.millisecond.com.

- Petersen, S. E., & Posner, M. I. (2012). The attention system of the human brain: 20 years after. Annual Review of Neuroscience, 35, 73-89.

- MacLeod, J. W., Lawrence, M. A., McConnell, M. M., Eskes, G. A., Klein, R. M., & Shore, D. I. (2010). Appraising the ANT: Psychometric and theoretical considerations of the Attention Network Test. Neuropsychology, 24(5), 637.

- Fan, J., McCandliss, B. D., Sommer, T., Raz, A., & Posner, M. I. (2002). Testing the efficiency and independence of attentional networks. Journal of Cognitive Neuroscience, 14(3), 340-347.

- Gaudino, E. A., Geisler, M. W., & Squires, N. K. (1995). Construct validity in the Trail Making Test: what makes Part B harder? Journal of Clinical and Experimental Neuropsychology, 17(4), 529-535.

- Wechsler, D. (1997). Wechsler Adult Intelligence Scale–Third Edition (WAIS-III).