The Nemesis Effect: AI, Complexity, and Patient Safety

The Nemesis Effect in Healthcare: Complexity, Artificial Intelligence and Patient Safety

Stavros Prineas¹, MBBS BSc (Med) FRCA FANZCA AFRACMA

¹ Nepean and Blue Mountains Hospitals, New South Wales, Australia.

Email: [email protected]

OPEN ACCESS

PUBLISHED: 31 January 2026

CITATION: Prineas, S., 2026. The Nemesis Effect in Healthcare: Complexity, Artificial Intelligence and Patient Safety. Medical Research Archives, [online] 14(1).

COPYRIGHT: © 2026 European Society of Medicine. This is an open-access article distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original author and source are credited.

DOI: https://doi.org/10.18103/mra.v14i1.7124

ISSN 2375-1924

ABSTRACT

The Nemesis Effect occurs when a clinical intervention increases the likelihood of the adverse outcome it was intended to avoid, or has other perverse effects that vitiate its benefits. The effect arises from the natural tendency of a complex adaptive system (such as healthcare) toward increased ‘entropy’ (that is, toward greater disorder), mediated through both the complex interconnectedness of its components and a relative inability of well-meaning human agents to see ‘the bigger picture’ when implementing powerful policies and technologies. To illustrate the concept, several examples are cited from modern medical practice: the rise of multidrug-resistant organisms, the unintended effects of the European Working Time Directive, and the deskilling impact of certain novel technologies in surgery and anaesthesia. The potential nemesis effects of artificial intelligence (AI) on clinical practice are then discussed, together with possible seeds of remedy through a human factors/ergonomics approach to healthcare governance and clinical training. Good clinical governance can be seen as a way of maintaining a state of ‘negative entropy’ needed to keep a ‘living’ system safe, stable and functional. This requires the ongoing expenditure of energy and effort, as well as the adoption of a broader sociotechnical perspective.

Keywords: Nemesis, sociotechnical, complexity, VUCA, artificial intelligence, deskilling, human factors, ergonomics

Introduction

Readers of Greek mythology will recall the stories of Tyche and Nemesis. Tyche, the goddess of fortune, would bestow random gifts on mortals: a struggling farmer would be blessed with a bumper harvest, a beggar a sack of gold, and so on. Nemesis was the goddess of retribution, responsible for redressing hubris, or foolish human arrogance; she would follow Tyche to make sure the beneficiaries showed appropriate respect and gratitude for the gift they had received, perhaps through a thankful prayer or sacrifice, or sharing the gift, or just through using it wisely. If they were judged to have abused or squandered Tyche’s generosity, Nemesis would curse them with an ironic fate worse than if they had never received the gift. The classical message was clear: respect the power behind the gift—or suffer perverse consequences. In part because of this perceived relationship between good fortune and hubris, the Romans often worshipped Nemesis and Tyche (whom they called Fortuna) together.

If we consider mythology to be an ancient metaphor for complex forces of nature that were beyond contemporary understanding, we can perhaps discern a deeper, more modern significance. The quantum physicist Erwin Schrödinger proposed that “life feeds on negative entropy”; by this he meant that living organisms are constantly at odds with the Second Law of Thermodynamics, which implies that the universe is always tending toward a state of greater disorder (entropy). To counter this, living organisms must expend energy and manipulate matter from their environment to maintain their complex, dynamic, reproductive state of ‘negative entropy’. Preserving and protecting human life is fundamental to healthcare and patient safety; but as the healthcare systems we create become more complex, the randomness inherent in that complexity plays an increasing role in influencing clinical outcomes. The mechanisms by which the universe may waylay our well-intended plans and steer them toward disorder may become less obvious to us (“The best-laid schemes o’ mice an’ men / gang aft agley / an’ lea’e us nought but grief an’ pain / for promis’d joy”). The current paper proposes a name for this phenomenon – the Nemesis Effect – with the aim of articulating its relevance to clinical practice and proposing possible remedies.

Complexity and The Nemesis Effect

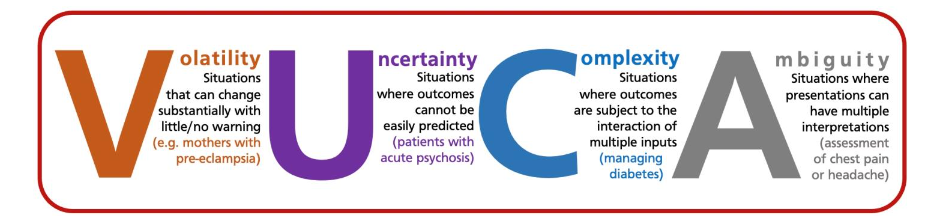

In healthcare, modern technology and training allow us to provide therapies not only of unprecedented complexity but also on scales of volume and speed that our predecessors could never have imagined. This greater capability comes at a cost. In military literature it is common to speak of modern warfare scenarios as having high levels of volatility, uncertainty, complexity and ambiguity (‘VUCA’ environments) where traditional ideas of chains of command and operational planning are less effective. This term has entered the medical literature to describe the dynamic and often-turbulent nature of modern healthcare and to look beyond an algorithmic ‘process and pathway’ model of medical management.

Another perspective on complexity is the Cynefin framework, where human systems are categorized as ‘clear’, ‘complicated’, ‘complex’ or ‘chaotic’. With increasing complexity, cause-and-effect relationships become less discernible, to the point where the pursuit of causation becomes virtually meaningless. Healthcare is a classic example of a complex adaptive system where all four levels of complexity are manifest.

A technical definition of the Nemesis Effect would therefore be as follows: In a complex adaptive (healthcare) system, any (therapeutic or patient) safety intervention applied within a narrow frame of reference, without consideration of a wider frame of reference, will tend toward some degradation in overall systemic benefit or safety, due to unseen effects of that intervention on other (invisible or unrecognised) parts of the complex adaptive system.

The Nemesis Effect presumes that everything we do in clinical practice, whether we are aware of it or not, has its nemesis: a potentially harmful retribution that comes from not being mindful of the wider ramifications of our treatments. This idea was foreshadowed decades ago by Illich and is a perverse variant of the Law of Unintended Consequences. A positive intervention within a narrow frame of reference in one part of the system will have impacts upon other parts—some desirable, others not, some foreseeable, others not (except perhaps in hindsight). Occasionally we inadvertently steer ourselves toward rather than away from adverse outcomes.

Methodology

The examples cited below were identified from the author’s wider experience of clinical practice over three decades, and from his area of expertise (anaesthesiology); a deeper curatorial analysis of each example was performed through an online search of peer-reviewed clinical literature and archival source material such as news reports and press releases.

THE EMERGENCE OF MULTIDRUG-RESISTANT ORGANISMS

A classic example of medical nemesis is seen in the pervasive emergence of multidrug-resistant microorganisms, which is now a global healthcare crisis. Originally, the antibiotic properties of Penicillium mould were considered miraculous, resulting in large-scale production of penicillin not only for use in medicine but also in agriculture. In 1945, Fleming himself warned in his Nobel Prize speech that the combination of underdosing and overuse of antibiotics may lead to resistant strains; despite this, early reports from Japan of resistance to penicillin (about 10 years after its introduction into clinical practice) were initially greeted with skepticism in the West. Evolution is a powerful, complex adaptive force, and since the doubling time of some bacteria can be as short as 20 minutes under favourable conditions, natural selection can occur far more rapidly in microorganisms than in humans. In addition, some bacteria evolved the ability to pass genetic material directly to neighboring cells via plasmids, greatly accelerating the spread of resistant strains. Recently the World Health Organization announced that antimicrobial resistance around the world was directly responsible for 1.25 million deaths in 2019 alone, with a further 40 million deaths expected between now and 2050. This phenomenon can be interpreted as a therapeutic intervention, well-intended within a narrow frame of reference, resulting in the very harm it was intended to treat through failure to appreciate the bigger picture.

CREUTZFELDT-JAKOB DISEASE AND DISPOSABLE TONSILLECTOMY EQUIPMENT

In the late 1990s, there was significant concern in the UK about the theoretical risk of transmission of variant Creutzfeldt-Jakob disease (vCJD) through prions retained in surgical equipment for ENT surgery, as they were resistant to standard hospital sterilisation techniques. By 2001 the incidence of UK deaths directly related to vCJD was at its peak, and the condition had drawn significant public and media attention. This prompted an urgent government-driven directive to replace all reusable tonsillectomy equipment with disposable equivalents. The disposables turned out to be functionally inferior to their reusable counterparts, resulting in actual morbidity and mortality at rates significantly exceeding the theoretical risk of prion transmission and culminating in reversal of the directive.

THE ONGOING TALE OF THE EUROPEAN WORKING TIME DIRECTIVE

Fatigue and sleep deprivation are major safety issues across many high-risk industries. In healthcare they are recognised as significant causes of impaired clinical performance, clinical errors, and increased morbidity and mortality. In 1998 the European Union introduced a European Working Time Directive (EWTD) in most industries, limiting the hours employees were required to work, mandating breaks and rest periods, etc. Healthcare workers initially were not included, but in 2006 the EWTD was extended to hospital staff, including resident doctors, capping their workweek first at 56 hours, then at 48 hours by 2009.

During the implementation phase, concerns emerged such as dramatic reductions in the number of junior doctors available to fill rosters, the time available for training, the quality of that training, the impact of shorter shifts on circadian rhythms, issues of continuity of care, handover problems, etc. As we enter the third decade of this directive, the consensus appears to be that while the EWTD has indeed been successful in reducing fatigue and improving conditions for healthcare workers, demonstrating a concrete improvement in safety overall has proved more controversial because of the unintended detrimental effects.

THE IMPACT OF LAPAROSCOPIC SURGICAL TECHNIQUES ON SKILLS IN OPEN SURGERY

Hans Christian Jacobaeus performed the first human laparoscopy in 1910, although the technique did not become popular until the 1980s. Since then, laparoscopic approaches have largely replaced traditional ‘open’ (laparotomy) approaches for many intra-abdominal procedures such as cholecystectomy and appendectomy. It is well documented that minimally invasive techniques result in better outcomes for patients with fewer complications and shorter hospital stays. An audit of 31,988 cases of emergency appendectomy in Germany showed that the proportion of cases performed laparoscopically (versus open or conversion to open) increased from 87.4% in 2010 to 97% in 2020. The authors concluded that “the laparoscopic approach has become the gold standard for all stages of appendicitis” and that “open appendectomy no longer plays a significant role when considering the entire operated group.”

The potential for nemesis lies in the second statement. Occasionally a laparoscopic approach is either not possible or it is contraindicated; the cohort may be small, but it is still significant. The surgical skill set required to perform a laparoscopic appendectomy does not fully translate to the skill set required to perform the same procedure by an open surgical approach. Training young surgeons to regard the need occasionally to perform an open appendectomy as insignificant creates a training gap which puts that cohort of patients at risk. As open techniques become less frequent, trainees experience ever-diminishing clinical exposure to such cases; there is evidence that this undermines their confidence on occasions when an open procedure is indicated. It is not clear whether their competence is also affected; nevertheless, surgical colleges around the world have acknowledged the phenomenon and have made remedial recommendations, such as creating regular opportunities for medical simulation and open surgical workshops as part of mandatory continuous medical education (CME) programmes.

ANAESTHESIA TECHNIQUES FOR CAESAREAN SECTION

A corollary case can be seen in the field of anaesthesia. Over the last four decades, the use of spinal anaesthesia has become the predominant technique for lower segment Caesarean section (LSCS), as it avoids many of the complications associated with general anaesthesia (GA) in pregnancy, such as unexpected difficult intubation and intraoperative awareness. Modern guidelines recommend that GA for LSCS be avoided, and as a result, the use of GA for LSCS has declined dramatically: at one UK facility, from 76% in 1982 to 4.9% in 2006. In a Boston study, GAs accounted for 0.5-1% of LSCS deliveries from 2000-2005, and it was observed “that many residents [had] graduated without having performed a GA in an obstetric patient.”

However, in circumstances where spinal anaesthesia fails or is contraindicated, and GA cannot be avoided, a new risk emerges due to lack of experience and confidence with performing GAs in this population, especially the management of unexpected difficult intubation and of unstable patients under GA (e.g., in eclampsia/preeclampsia). The nemesis arises in promoting spinal anaesthesia as ‘safer’ without considering the necessity to plan and train for contingencies where spinal anaesthesia is not possible. To paraphrase the philosopher Emile Chartier, “Nothing is more dangerous than a plan when it’s the only one you have.”

THE LARYNGEAL MASK AIRWAY AND BASIC AIRWAY MANAGEMENT SKILLS

The same theme can be seen in the rise of the laryngeal mask airway (LMA), invented in the 1980s by Archie Brain. Even inexperienced users can achieve successful ventilation in over 95% of cases with minimal training. The device has revolutionized airway management in anaesthesia and critical care, leading to a whole new class of supraglottic airway devices (SGAs). By 2009 it was estimated that SGAs were used in 56% of all general anaesthetics given in the UK. As the use of SGAs became more mainstream, concerns were raised about the impact of over-reliance on SGAs on other airway management skills such as bag-mask ventilation and endotracheal intubation. In terms of achieving adequate alveolar ventilation, while the failure rate for the newer second-generation LMAs is generally quoted as less than 2%, in some recent cohorts it may be as high as 8%. Meanwhile, there has been a marked decline in bag-mask ventilation skills: while a 1993 study found that volunteers with no previous resuscitation experience were able to perform bag-mask ventilation in 43% of attempts after formal training, a 2025 study of trained and experienced pre-hospital personnel found only 4% of nurses and 1% of paramedics were able to meet minimum European Resuscitation Council criteria for adequate alveolar ventilation using a bag and mask.

The established superiority of supraglottic airways for first-line airway rescue in critical care settings is not in question; rather, the nemesis lies in wider contingency training, in the failure of clinical departments to maintain the perishable skill of bag-mask ventilation as a backup when a supraglottic device fails or is unavailable.

THE USE OF ULTRASOUND IN ANAESTHETIC PRACTICE

Yet another example is ultrasound-guided regional anaesthesia (UGRA) and vascular access (UGVA). Historically, skill in targeting deep anatomical structures was acquired over years of scholarship, practice and trial and error, yet still resulted in highly variable proficiency except in the hands of the very dedicated expert. The use of ultrasound has led to both increased success rates and lower complication rates, an unquestionable advance in safety and broad-based efficacy. However, it is very common to see trainees use the ultrasound device in a ‘plug-and-play’ manner, focusing on a target as in a video game or Google Maps; the finer aspects of anatomical landmarks, relations, or needle trajectory become relatively peripheral to the immediate goal. Much of ‘the knowledge’ (the intimate depiction of three-dimensional anatomy) is captured and rendered by the machine but is largely lost on the human preoccupied with finding ‘the camel hump’ or ‘the trident’ or other iconic memes of anatomical targets. As a result, ‘mastering’ a regional technique requires that the human become a ‘slave’ to the technology if they wish to perform the procedure safely. If for some reason the ultrasound machine were to be unavailable, inoperative, or its resolution poor, the deskilled human operator would have no ‘Plan B’. This nemesis can be thought of as one of Bainbridge’s ‘ironies of automation’.

This example of nemesis is especially interesting because ultrasonic imaging is a marvellous technology that creates a rich seam of anatomical information available for the practitioner to acquire, were they to choose to take the extra time to assess the surface landmarks and angle of attack before proceeding, then to check their assessment (and thereby calibrate future assessments) with ultrasound, and explore the local anatomy to further refine and internalise the knowledge. Detailed real-time technical feedback would not only make the procedure safer, it would also sharpen the clinical acumen of the human over weeks or months in a way their predecessors would have taken years or decades to achieve. Yet there has been surprisingly little literature on the utilization of ultrasound to enhance or accelerate the acquisition of traditional landmark skills.

THE INTEGRATION OF ARTIFICIAL INTELLIGENCE INTO HEALTHCARE—THE POTENTIAL FOR NEMESIS

Artificial intelligence (AI) can be defined as ‘a non-human program or model that can solve sophisticated tasks’. Informatic precursors of AI in specific pockets of healthcare have been around for decades, but over the last five years there has been a burgeoning in its use across many aspects of clinical practice, from assisted history taking and scribing to the use of expert diagnostic systems and ‘smart’ medical devices to augmented reality imaging and simulation. In 2025, most peer-reviewed publications on the use of AI in medicine relate to the fields of oncology, radiology, and pathology.

In the field of anaesthesia, recent reviews have suggested that AI can improve many aspects of pre-, intra-, and post-anaesthetic care including pre-anaesthetic risk stratification, airway assessment, real-time prediction of intraoperative complications such as hypotension, modulating anaesthesia drug delivery and closed-system TIVA, prediction of postoperative delirium, acute kidney injury, and 30-day mortality, as well as improving theatre logistics such as optimizing theatre scheduling and reducing cancellations. Real-time AI-driven virtual enhancement of anatomical images under ultrasound is set to revolutionise UGRA. These are all promising and welcome improvements; the wholesale integration of AI into healthcare, however, faces several serious barriers and pitfalls.

Firstly, AI requires large, robust, and standardised datasets in order for its conclusions to be valid and correct; failure to provide this leads to GIGO (‘Garbage In Garbage Out’) and AI hallucinations. A recent group of researchers concluded, “LLM-based chatbots applied to the subspecialty of regional anesthesiology are not ready to truly assist in basic or complex clinical scenarios”. Next, if the data sources are not sufficiently diverse, AI reasoning may have at best only very narrow applicability. For example, a large-scale study of a machine learning tool used to predict postoperative nausea and vomiting (PONV) excluded pediatric patients and patients undergoing regional or outpatient procedures, and utilized data from a single institution only, limiting the ability to draw more general conclusions from an otherwise impressive body of work. At worst, insufficiently diverse data sources can result in AI outputs that are inherently biased.

There is the ‘Black Box Problem’, where the complex reasoning behind the conclusions drawn by AI tools is often not transparent or explainable, causing clinicians to mistrust the advice even when it turns out to be correct. There are significant privacy and security issues, as healthcare databases are prime targets for hackers and other malevolent agents. There are ethical and legal issues around who owns the AI information, who pays for it, who can access it, and who would be responsible when clinicians act on faulty AI advice. Work remains to be done on how to validate and regulate the use of AI in healthcare globally. Finally, despite the massive amount of peer-reviewed literature on AI in anaesthesia, there remains currently very little evidence from large-scale studies that the use of AI improves perioperative clinical outcomes.

All the above issues are challenging, but at least they have been identified; ongoing systematic efforts are currently being made to address (or at least discuss) them. Meanwhile, a deeper nemesis lies not just in the degradation of physical or procedural skills as described in the examples cited earlier in this article, but in the impact of AI on the process of human cognition itself. Expertise in clinical reasoning requires not only exposure to experiences but also active reflection and processing of those experiences into meaningful lessons learned for future performance (‘deliberate practice’). Unless we proactively compensate for it, the loss of opportunities for human cognitive processing of clinical experiences (in the myriad of everyday small-scale interactions and conversations that would otherwise occur without smart technology) may result in random but progressive degradations in the quality of human clinical decision-making. Collectively, we may even lose the ability to see the bigger picture of how our systems work, that ‘understanding’ having become resident in the devices that would increasingly do the ‘thinking’, the ‘connecting’, and the ‘learning’ for us. There is some emerging work suggesting a clear negative correlation between ‘cognitive offloading’ (the use of external tools such as AI to reduce cognitive load) and critical thinking skills in a diverse sample of UK residents.

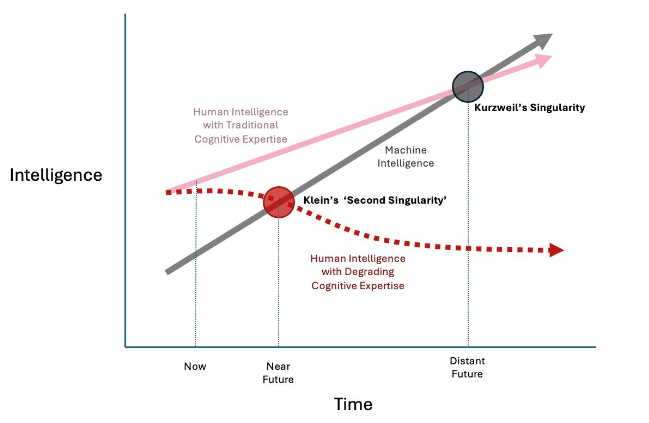

There has been a long-standing concern among many members of the scientific community—popularized by Kurzweil and now an established meme in speculative fiction—of ‘The Singularity,’ where a super-intelligent AI achieves self-awareness and dominates humanity. While contemporary experience with AI suggests that Kurzweil’s Singularity is more a problem for the distant future, Klein has warned of a more imminent ‘Second Singularity’ where humans are compelled to submit to ‘somewhat-less-than-sentient’ AI not because it has become super-intelligent, but because its pervasive and injudicious use has led to a large-scale degradation of human cognitive expertise.

Managing Nemesis—Adopting a Sociotechnical Perspective

All the examples above challenge us to reexamine the relationship between humans and technology in complex adaptive systems such as healthcare. The ultimate irony is that as the practice of medicine—with technology—objectively becomes more effective and safer, the human medical practitioners—without technology—are at risk of becoming less effective and less safe. Since all human systems must, by definition, contain humans, if the human component were to become fundamentally unreliable over time, ultimately the entire system would become at risk of collapse.

To meet this challenge systematically, some basic assumptions are proposed:

- The universe is always bigger than your brain. As Hamlet said, “There are more things in heaven and earth… than are dreamt of in your philosophy.” Presume that there is always more to be discovered in the universe beyond not only our personal and collective knowledge, but beyond the technology we use to extend into it.

- As systems become more complex, so do the risks. As our ability to manage hazards and risks becomes more sophisticated, newer, more subtle problems emerge. One example is the phenomenon of ‘risk homeostasis’, also known as the Peltzman Effect: when we feel comfortable that we have created a safer environment, we push the boundaries of our capability further, taking greater risks; over time this can offset the original gains made by our safety initiatives. The concept is well understood in the domain of road safety—people tend to drive faster when they are wearing a seat belt and closer to the car in front if they know their car is fitted with anti-lock braking systems. This idea is not alien to patient safety although hard data are lacking. As an anecdotal example, using an AI scribe may allow the practitioner to spend more time observing and listening to the patient, but the clinician must still spend time after the consultation checking and editing the AI transcript. The need for extra time may not be recognised by the organization, which sees only the time spent with the patient, and may presume that the ‘time saved’ can be put toward seeing more patients. This pressures the practitioner to accept the AI summary without careful checking, which may lead to clinical errors.

- Safety is relative. If we accept the most popular clinical definition of safety as “a state in which risk has been reduced to an acceptable level” then one must infer that safety is always relative, never absolute. This is especially true in modern critical care environments such as emergency rooms, operating theaters, and intensive care units, where most, if not all, therapeutic decisions entail some form of physiological or psychological trespass, and therefore the invitation to trade some harm for some greater benefit. ‘Safety’ is not an intrinsic property of a device, a drug, a team, or a hospital, but rather an emergent (and perishable) product of all the active and conscious efforts to avoid, trap, and mitigate known and foreseeable risks at any given time. In an unfavorable set of circumstances, any drug can be a poison, any medical instrument a weapon, any healthcare worker an unwitting killer; even a decision to do nothing can be deemed to be harmful, e.g., when one has a duty of care to an acutely deteriorating patient. In VUCA environments, the traditional aphorism of primum non nocere (‘first do no harm’) is noble but no longer as applicable, because ‘low risk’ is not the same as ‘no risk’: primum minime nocere may be closer to the mark.

- There is always a gap between ‘work as imagined’ and ‘work as done.’ Despite their best intentions, designers of systems, equipment and work environments often conceptualise workflow in broad algorithmic terms, to create a ‘logical’ procedural map of how things should be done. Real-life work (especially in healthcare) is messier: VUCA environments can be busy or chaotic; equipment is not always available, fully functional, or fit for purpose; human operators may be tired, stressed, or distracted. Work still gets done, but through workarounds, compromises, trade-offs, etc., i.e., through creative human initiative. Better system design requires iterative reconciliation of ‘top-down’ conceptualization (‘Work-As-Imagined’) with ‘bottom-up’ frontline data-driven analysis (‘Work-As-Done’). This idea was first proposed by Hollnagel and Woods and has become a fundamental principle of Resilience Engineering in healthcare, which seeks to learn not just from how we get things ‘wrong’ (e.g. adverse event analysis) but how we manage to get things ‘right’ against the odds.

- Everything is sociotechnical. The sociotechnical nature of healthcare systems was best described by Effken: “Today’s healthcare systems are highly technical systems… But healthcare systems are also social systems that have a complicated interrelationship with technology; that is, they are inherently complex sociotechnical systems. In complex sociotechnical systems, behavior is not centered in individual actors or even in groups of actors but is distributed among actors and the information available in the environment. For example, a clinic or an emergency department is a network of professionals that utilizes various information sources to deliver care while at the same time meeting teaching, research, and financial objectives. This sociotechnical system is an indivisible whole that cannot be partitioned into social aspects for social scientists and technical aspects for information technologists.”

- Technology is neither good nor bad. Powerful technologies can significantly enhance or degrade human capabilities, depending on how they are used.

Counter-Nemesis Principles

Given that human consciousness, even collectively and assisted by technology, cannot fully fathom the vastness of the universe, there will always be the potential for nemesis in any complex human endeavour. Having said this, it should be possible to improve our ability to identify nemesis and mitigate its effects.

Traditional ideas such as building buffering (e.g. having stocks in reserve), redundancy (e.g. having back-up equipment) and contingency planning (e.g. ‘what if’/‘Plan B’ scenarios) into clinical pathways are established methods by which clinicians can anticipate surges in clinical activity, equipment failures, staff illness, etc. They also offer limited capacity to absorb less foreseeable events.

The ability to detect and respond to unexpected changes in patient/environmental conditions in an effective and timely way requires investment in monitoring and early warning systems. VUCA environments require more regular, specific and dynamic communication between healthcare workers, teams and agencies to enhance shared situation awareness. The frequency of communication is varied in proportion to the rate at which events are evolving. This is a key element of McChrystal’s ‘Team of Teams’ approach, developed originally by the US military to deal with the VUCA nature of counter-terrorism campaigns, but which has strong parallels with the challenges of managing 21st century healthcare, especially during the COVID-19 pandemic and in its aftermath.

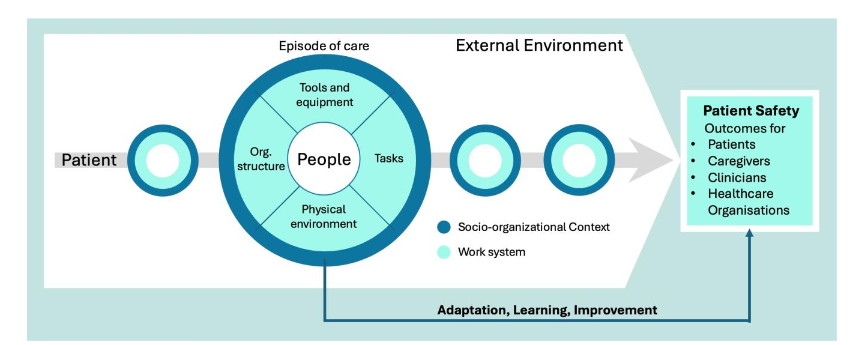

The discipline of human factors/ergonomics (HF/E) has a key role to play in managing clinical complexity. In a complex sociotechnical system, everything is connected. Changes in one part of the system will reverberate through other parts, often through channels that are not obvious: for example, which technology is employed, and how, will influence human interactions, and vice versa. The Systems Engineering Initiative for Patient Safety (SEIPS) model, currently in its third iteration, is an HF/E initiative that takes a holistic approach to healthcare governance through assessment of a range of factors that shape individual and systemic performance and direct care: not just the human practitioners (working as individuals and in teams), but also analysis of clinical and administrative tasks, organizational structures and culture, available technologies, and internal and external (work) environments. SEIPS-based governance and performance analysis seeks to identify the interdependencies between these elements. This approach is in theory more likely to identify potential nemeses in healthcare processes; more studies are required.

Conclusion

The Nemesis Effect is more than the idea that undesired outcomes are merely the result of side effects or unintended consequences. It refers to the natural tendency of a complex adaptive system toward increased entropy (toward degradation, disorder, and a state of lesser safety), mediated through the interconnectedness of its components and a relative inability of human agents to see ‘the bigger picture’ when using powerful technologies. In a ‘living’ complex adaptive system, good governance can be thought of as a means to maintain a state of ‘negative entropy’; this requires the active and ongoing input of energy, resources, and a broader sociotechnical perspective.

Embracing Nemesis should not lead to cynicism (“Oh well, stuff happens”) but rather should serve as a call to action. In the same way that admitting our fallibility may make us better clinicians, it is hoped that accepting the potential for harm in everything we do will challenge us to examine our gifts, to beware the hubris of ‘magic bullet’ cures, and to ask what makes for a ‘healthier’ and more resilient healthcare system.

References

1 Edwards CM (1990). Tyche at Corinth. Hesperia (J Am Sch Classical Studies Ath) 59(3):529-42

2 Schrodinger E. (1944) What is Life? The Physical Aspect of the Living Cell. Available at https://archive.org/details/WhatIsLife-EdwardSchrodinger/page/n1/mode/2up accessed 20 July 2025

3 Barber HF. (1992) Developing Strategic Leadership: The US Army War College Experience. J Management Dev 11(6):4-12 doi:10.1108/02621719210018208

4 Pandit M. (2021) Critical factors for successful management of VUCA times. BMJ Leader 5:121-123

5 Cernega A, Nicolescu DN, Imre MM, Totan AR, Arsene AL, Serban RS, Perpelea A-C, Nedea M-I, Pituru S-M. (2024) Volatility, Uncertainty, Complexity, and Ambiguity (VUCA) in Healthcare. Healthcare (Basel) 12(7):773. doi:10.3390/healthcare12070773

6 Kurtz CF, Snowden DJ. (2003) The new dynamics of strategy: Sense-making in a complex and complicated world. IBM Systems J 42(3):462-83.

7 Snowden D, Rancati A. (2021) Managing Complexity (and Chaos) in Times of Crisis: A Field Guide for Decision Makers Inspired by the Cynefin Framework. Publications Office of the European Union Pubs. Available at https://publications.jrc.ec.europa.eu/repository/handle/JRC123629 (accessed 1 Dec 2025)

8 Braithwaite J, Clay-Williams R et al. (2013) Health Care as a Complex Adaptive System. In: Resilient Health Care (Ashgate Pubs., Farnham UK). 57-73

9 Illich, I (1976). Limits to Medicine – Medical Nemesis: The Expropriation of Health. Marion Boyars Pub. London. passim

10 Fleming A (1945). Penicillin (Nobel Lecture, December 11, 1945). Available at https://www.nobelprize.org/uploads/2018/06/fleming-lecture.pdf accessed 20 Jul 2025

11 Davies J, Davies D. (2010). Origins and Evolution of Antibiotic Resistance. Microbiol Mol Biol Rev 74(3):417-33. doi: 10.1128/MMBR.00016-10

12 World Health Organisation (2023) Antimicrobial Resistance FactSheet. Available at https://www.who.int/news-room/fact-sheets/detail/antimicrobial-resistance accessed 20 Jul 2025

13 Naghavi, M et al. (2024) Global burden of bacterial antimicrobial resistance 1990–2021: a systematic analysis with forecasts to 2050. Lancet 404(10459): 1199-1226

14 Frosh A. (1999). Prions and the ENT surgeon. J Laryngol Otol 113:1064-7.

15 Frosh A, Joyce R, Johnson A. (2001) Iatrogenic vCJD from surgical instruments. BMJ 322(7302):1558–1559. doi: 10.1136/bmj.322.7302.1558

16 Re-introduction of reusable instruments for tonsil surgery. Department of Health (UK) Press Release December 14, 2001. Available through UK National Archives website at http://webarchive.nationalarchives.gov.uk/+/www.dh.gov.uk/en/Publicationsandstatistics/Pressreleases/DH_4011629 (accessed 13 Nov 2025)

17 European Commission. (2003) Directive 2003/88/EC of the European Parliament and of the Council of 4 November 2003 concerning certain aspects of the organisation of working time. Official Journal L 299, 18/11/2003. 9–19. Available at https://eur-lex.europa.eu/eli/dir/2003/88/oj/eng accessed 13 Jul 2025

18 Royal College of Surgeons of England. (2014) Taskforce report on the impact of the European Working Time Directive. Available at https://www.rcseng.ac.uk/-/media/Files/RCS/Standards-and-research/Standards-and-policy/Service-standards/Workforce/wtdtaskforcereport2014.pdf (accessed 01 August 2025)

19 Anantharachagan A, Ohja K. (2011) Working time directive and its impact on training. Obs Gyn Rep Med 21(3):91-93 doi: 10.1016/j.ogrm.2011.01.005

20 Axelrod L, Shah DJ, Jena AB (2013). The European Working Time Directive: An Uncontrolled Experiment in Medical Care and Education. JAMA 309(5): 447-8. doi:10.1001/jama.2012.148065

21 Alkatout I, Mechler U et al. (2021) The Development of Laparoscopy: an Overview. Front Surg 8:799442 doi: 10.3389/fsurg.2021.799442

22 Patil M Jr, Gharde P, Reddy K, Nayak K. (2024) Comparative Analysis of Laparoscopic Versus Open Procedures in Specific General Surgical Interventions. Cureus 16(2):e54433. doi: 10.7759/cureus.54433

23 Burgdorf SK, Rosenberg J (2012) Short Hospital Stay after Laparoscopic Colorectal Surgery without Fast Track. Minim Invasive Surg 2012:260273. doi: 10.1155/2012/260273

24 Schildberg C, Weber U, König V, Linnartz M, Heisler S, Hafkesbrink J, Fricke M, Mantke R. (2025) Laparoscopic appendectomy as the gold standard: What role remains for open surgery, conversion, and disease severity? An analysis of 32,000 cases with appendicitis in Germany. World J Emerg Surg 20(1):53

25 Patil M, Gharde P, Reddy K, Nayak K. (2024) Comparative Analysis of Laparoscopic Versus Open Procedures in Specific General Surgical Interventions. Cureus 16(2):e54433. doi: 10.7759/cureus.54433

26 Cheung FY, Bretherton K, Wong N. (2022) SP7.7 Open Appendicectomy Confidence and Experience Study (OACES): a multicentre survey of UK non-consultant grade surgeons. BJS 105(9):znac247.080. doi: 10.1093/bjs/znac247.080

27 Ring L, Landau R, Delgado C. (2021). The Current Role of General Anaesthesia for Cesarean Delivery. Curr Anaesthesiol Rep 11(1):18-27. doi: 10.1007/s40140-021-00437-6

28 Johnson RV Lyons GR et al. (2000) Training in obstetric general anaesthesia: a vanishing art? Anaesthesia 55(2): 179-83 doi: 10.1046/j.1365-2044.2000.055002179.x

29 Searle RD, Lyons G. (2008) Vanishing experience in training for obstetric general anaesthesia: an observational study. Int J Obstet Anaesthesiol 17(3):233-7. doi: 10.1016/j.ijoa.2008.01.007

30 Palanisamy A, Mitani AA, Tsen LC. (2011) General anaesthesia for cesarean delivery at a tertiary care hospital from 2000 to 2005: a retrospective analysis and 10-year update. Int J Obstet Anaesthesiol 20(1): 10-16. doi:10.1016/j.ijoa.2010.07.002

31 Brain AI. (1983) The Laryngeal Mask Airway – a new concept in airway management. BJA 55(8):801-5 doi: 10.1093/bja/55.8.801

32 Brimacombe JR, Berry AM, Brain AIJ. (1995). The laryngeal mask airway. Anaesthesiol Clin N Am 13(2):411-37. doi: 10.1016/S0889-8537(21)00528-9

33 Royal College of Anaesthetists. (2009) Major complications of airway management in the United Kingdom – 4th National Audit Project of The Royal College of Anaesthetists and The Difficult Airway Society. Available at https://www.rcoa.ac.uk/sites/default/files/documents/2023-02/NAP4%20Full%20Report.pdf accessed 01 Aug 2025.

35 Aydin ZIA, Avci II, Avci IE. (2025). Factors associated with placement failure of second-generation laryngeal mask airway: a retrospective clinical study. Acta Medica Nicomedia 8(1):1-10. doi: 10.53446/actamednicomedia.1486989

37 Alexander R, Hodgson P, Lomax D, Mullen C. (1993) A comparison of the laryngeal mask airway and Guedel airway, bag and facemask for manual ventilation following formal training. Anaesthesia 48(3):231-4. doi: 10.1111/j.1365-2044.1993.tb06909.x

38 Lasik J, Klosiewicz T, Puslecki M. (2025) Quality of bag-valve-mask ventilation in adults: a comparison of paramedic and nurse performance. Disaster Emerg Med J 10(2):85-93. doi: 10.5603/demj.104569

39 Perlas A, Brull R, Chan VW. (2008) Ultrasound guidance improves the success of peripheral nerve blocks: a meta-analysis. Reg Anaesth Pain Med 33(3):213–218.

40 Barrington MJ, Kluger R. (2013) Ultrasound guidance reduces the risk of local anaesthetic systemic toxicity following peripheral nerve blockade. Reg Anaesth Pain Med 38(4):289–297

41 Abrahams MS, Aziz MF, Fu RF, Horn JL. (2009) Ultrasound guidance compared with nerve stimulation for peripheral nerve block: a systematic review and meta-analysis of randomized controlled trials. Br J Anaesth 102(3):408–417.

42 Gadsden JC. (2021) The role of peripheral nerve stimulation in the era of ultrasound-guided regional anaesthesia. Anaesthesia 76(1):65-73 doi:10.1111/anae.15257

43 Ghosh SM, Madjdpour C, Chin KJ. (2016). Ultrasound-guided lumbar central neuraxial block. BJA Ed 16(7):213-220 doi: 10.1093/bjaed/mkv048

44 Karmakar MK, Chin KJ (2023) Spinal Sonography and Applications of Ultrasound for Central Neuraxial Blocks. Available through the NYSORA website https://www.nysora.com/techniques/neuraxial-and-perineuraxial-techniques/spinal-sonography-and-applications-of-ultrasound-for-central-neuraxial-blocks/ Accessed 03 Aug 2025

45 Bainbridge L. (1983) Ironies of Automation. Automatica 19(6):775-779 doi:10.1016/0005-1098(83)90046-8

46 McKendrick M, Yang S, McLeod GA. (2021) The use of artificial intelligence and robotics in regional anaesthesia. Anaesthesia 76(1):171-81 doi: 10.1111/anae.15274

47 Lonsdale H, Burns ML, Epstein RH, Hofer IS, Tighe PJ, Gálvez Delgado JA, Kor DJ, MacKay EJ, Rashidi P, Wanderer JP, McCormick PJ. (2025) Strengthening Discovery and Application of Artificial Intelligence in Anaesthesiology: A Report from the Anaesthesia Research Council. Anesth Analg 140(4):920-930

48 Bellini V, Rafano Carnà E, Russo M, Di Vincenzo F, Berghenti M, Baciarello M, Bignami E. Artificial intelligence and anaesthesia: a narrative review. Ann Transl Med. 2022 May;10(9):528.

49 Dundaru-Bandi D, Antel R, Ingelmo P. Advances in pediatric perioperative care using artificial intelligence. Curr Opin Anesthesiol. 2024 Jun 1;37(3):251-258.

50 Lonsdale H, Burns ML, Epstein RH, Hofer IS, Tighe PJ, Gálvez Delgado JA, Kor DJ, MacKay EJ, Rashidi P, Wanderer JP, McCormick PJ. Strengthening Discovery and Application of Artificial Intelligence in Anaesthesiology: A Report from the Anaesthesia Research Council. Anesth Analg. 2025 Apr 1;140(4):920-930.

51 Xue B, Li D, Lu C, et al. (2021) Use of Machine Learning to Develop and Evaluate Models Using Preoperative and Intraoperative Data to Identify Risks of Postoperative Complications. JAMA Netw Open 4:e212240.

52 Kim JH, Kim H, Jang JS, et al. Development and validation of a difficult laryngoscopy prediction model using machine learning of neck circumference and thyromental height. BMC Anesthesiol 2021;21:125.

53 Michard F, Futier E. (2023) Predicting intraoperative hypotension: from hope to hype and back to reality. BJA 131(2):199-201. doi: 10.1016/j.bja.2023.02.029

54 Cao Y, Wang Y, Liu H, Wu L. (2025) Artificial intelligence revolutionizing anaesthesia management: advances and prospects in intelligent anaesthesia technology. Front Med (Lausanne) 12:1571725.

55 West N, van Heusden K, Matthias G, Brodie S, Rollinson A, Petersen C, Dumont G, Ansermino, JM, Merchant R. (2018) Design and Evaluation of a Closed-Loop Anesthesia System With Robust Control and Safety System. Anesth Analg 127(4):883-894. doi: 10.1213/ANE.0000000000002663

56 Harris J, Matthews J. (2024) Artificial Intelligence: Predicting Perioperative Problems. Br J Hosp Med 85(8):1-4.

57 Bellini V, Valente M, Gaddi AV, Pelosi P, Bignami E. Artificial intelligence and telemedicine in anaesthesia: potential and problems. Minerva Anestesiol. 2022 Sep;88(9):729-734.

58 Savage M, Spence A, Turbitt L. (2024) The educational impact of technology-enhanced learning in regional anaesthesia: a scoping review. BJA 133(2) 400-415. doi: 10.1016/j.bja.2024.04.045

59 Minehart RD, Stefanski SE. (2025) Artificial Intelligence Supporting Anaesthesiology Clinical Decision-Making. Anesth Analg. 141(3)536-539.

60 Corpman D, Auyong DB, Walters D, Gray A. (2025) Using AI chatbots as clinical decision support for regional anaesthesia: is the new era of “e-Consults” ready for primetime? Reg Anesth Pain Med rapm-2025-106850.

61 Hoshima H, Miyazaki T, Mitsui Y, Omachi S, Yamauchi M, Mizuta K. (2024) Machine learning-based identification of the risk factors for postoperative nausea and vomiting in adults. PLoS One 19(8):e0308755. doi: 10.1371/journal.pone.0308755.

62 Hashimoto DA, Witkowski E, Gao L, Meireles O, Rosman G. (2020) Artificial Intelligence in Anaesthesiology: Current Techniques, Clinical Applications, and Limitations. Anaesthesiology 132(2):379-394.

63 Rahman MA, Victoros E, Ernest J, Davis R, Shanjana Y, Islam MR. (2024) Impact of Artificial Intelligence (AI) Technology in Healthcare Sector: A Critical Evaluation of Both Sides of the Coin. Clin Path 17:1-5 doi : 10.1177/2632010X2412268

64 Naik N, Hameed BMZ, Shetty DK, Swain D, Shah M, Paul R, Aggarwal K, Ibrahim S, Patil V, Smirti K, Shetty S, Rai BP, Chlosta P, Somani BK. (2022) Legal and Ethical Consideration in Artificial Intelligence in Healthcare: Who Takes Responsibility? Front Surg 9:862322. doi: 10.3389/fsurg.2022.862322

65 McKee M, Wouters OJ. (2022) The Challenges of Regulating Artificial Intelligence in Healthcare. Int J Health Policy Manag 12:7261. doi: 10.34172/ijhpm.2022.7261

66 Shimada K, Inokuchi R, Ohigashi T, Iwagami M, Tanaka M, Gosho M, Tamiya N. (2024) Artificial intelligence-assisted interventions for perioperative anaesthetic management: a systematic review and meta-analysis. BMC Anesthesiol 24(1):306.

67 Duran HT, Kingeter M, Reale C, Weinger MB, Salwei ME. Decision-making in anaesthesiology: Will artificial intelligence make intraoperative care safer? Curr Opin Anaesthesiol. 36(6):691-697.

68 Ericsson KA. (2008) Deliberate practice and acquisition of expert performance: a general overview. Acad Emerg Med 15(11):988-94. doi: 10.1111/j.1553-2712.2008.00227.x.

69 Natali C, Marconi L, Duran LDD, Cabitza F. (2025) AI-induced Deskilling in Medicine: A Mixed-Method Review and Research Agenda for Healthcare and Beyond. Artif Intel Rev 58:356. doi: 10.1007/s10462-025-11352-1

70 Gerlich M. (2025) AI Tools in Society: Impacts on Cognitive Offloading and the Future of Critical Thinking. Societies 15, 6. doi: 10.3390/soc15010006

71 Kurzweil R. (2006). The singularity is near : when humans transcend biology. New York:Penguin.

72 Klein G (2019). The Second Singularity: Human expertise and the rise of AI. Psychology Today Available at https://www.psychologytoday.com/au/blog/seeing-what-others-dont/201912/the-second-singularity (accessed 13 Nov 2025)

73 Hough J, Culley N, Erganian C, Alahdab F. (2025) Potential risks of GenAI on medical education. BMJ Evidence Based Med 30(6):406-408 doi:10.1136/bmjebm-2025-114339

74 Peltzman S. The Effects of Automobile Safety Regulation. J Pol Econ 1975; 83: 677–725.

75 Rudin-Brown CJ. (2013) Behavioural Adaptation and Road Safety: Theory, Evidence and Action. Boca Raton, Florida: Taylor & Francis Group, LLC

76 Prasad V, Jena AB. (2014) The Peltzman effect and compensatory markers in medicine. Healthcare (Amst) 2(3): 170-2

77 Amalberti R, Vincent C et al. Violations and migrations in healthcare: a framework for understanding and management. Qual Saf Health Care 2006 Dec; 15(Suppl 1): i66–i71.

78 Hollnagel E, Woods DD. (1983) Cognitive Systems Engineering: New Wine in New Bottles. Int J Man-Machine Studies 18(6):583-600. doi:10.1016/S0020-7373(83)80034-0 Available at https://erikhollnagel.com/onewebmedia/CSE_NWINB.pdf (accessed 07 Nov 2025).

79 Ham D-H. (2021) Safety-II and Resilience Engineering in a Nutshell: An Introductory Guide to Their Concepts and Methods. Safety Health at Work 12(1):10-19 doi: 10.1016/j.shaw.2020.11.004

80 Effken JD (2002). Different lenses, improved outcomes: a new approach to the analysis and design of healthcare information systems. Int J Healthcare Informatics 6(1): 59-74. doi: 10.1016/S1386-5056(02)00003-5

81 McChrystal S, Collins T, Silverman D, Fussell C. (2015) Team of Teams: New Rules of Engagement for a Complex World. Penguin pubs. passim

82 Zarzaur BL, Stahl CC, Greenberg JA, Savage SA, Minter RM. (2020) Blueprint for Restructuring a Department of Surgery in Concert With the Health Care System During a Pandemic: The University of Wisconsin Experience. JAMA Surgery 155(7):628-635 doi:10.1001/jamasurg.2020.1386

83 Carayon P, Schoofs Hundt A, Karsh B-T, Gurses AP, Alvarado CJ, Smith M, Flatley Brennan P. (2006) Work system design for patient safety: the SEIPS model. Qual Saf Health Care 15(Suppl I):i50–i58. doi: 10.1136/qshc.2005.015842

84 Carayon P, Wooldridge A, Hoonakker P, Schoofs Hundt A, Kelly MM. (2020) SEIPS 3.0: Human-Centred Design of the Patient Journey for Patient Safety. Appl Ergon 84: 103033. doi:10.1016/j.apergo.2019.103033.

85 Sujan M, Pool R, Salmon M. (2022) Eight human factors and ergonomics principles for healthcare artificial intelligence. BMJ Health Care Inform 29(1):e100516. doi: 10.1136/bmjhci-2021-100516

86 Ward M, Sujan M, Pool R, Preston K, Huang H, Carrington A, Chozos N. (2025) Integrating Human-Centred AI into Clinical Practice. Available at https://ergonomics.org.uk/resource/integrating-human-centred-ai-in-clinical-practice.html (accessed 13 Nov 2025)

87 Abdulnour RE, Gin B, Boscardin CK (2025) Educational Strategies for Clinical Supervision of Artificial Intelligence Use. NEJM 393(8):786-97 doi: 10.1056/NEJMra2503232